The proposition that performance and user experience are in tension has intuitive appeal. Adding features makes a product richer and more useful - but adding features adds code, which adds bytes, which slows loading. Removing features speeds loading but strips capability. It sounds like a zero-sum game where every improvement in one dimension costs in another.

This framing is wrong, and the wrongness matters because it leads to poor decisions. Teams that accept the false dichotomy routinely sacrifice performance for features they believe improve user experience, without measuring whether those features actually improve the experience for the users who are most affected by slow loading.

The research is clear on what actually happens: 53% of mobile users abandon sites that take over 3 seconds to load, according to Google's 2018 analysis. By the time the rich feature a team spent weeks building renders on a 3G connection, more than half the audience has left. The feature improved the experience for no one, because the users who mattered most never reached it.

Performance is not the opposite of user experience. Failure to load is the worst user experience possible. Speed is a feature that serves every user, on every page, on every visit. The question is not whether to prioritize performance or features - it is how to deliver both, which requires understanding the actual costs and benefits involved in specific decisions.

What Performance Actually Affects

The Quantified Cost of Delay

The research on performance and user behavior has become extensive enough to support precise estimates. Several findings have held up across companies and contexts:

Abandonment before interaction. Google's 2018 mobile benchmarking study found that as page load time increases from 1 second to 3 seconds, the probability of a mobile user bouncing increases by 32%. From 1 second to 5 seconds, it increases by 90%. From 1 second to 10 seconds, it increases by 123%. Many users who would have engaged with a faster page are gone before a slow one gives them the chance.

Conversion rate impact. Deloitte's 2020 study "Milliseconds Make Millions," conducted across retail, travel, luxury, and lead generation sites, found that a 0.1-second improvement in mobile site speed increased retail consumer spending by nearly 10%. A 100-millisecond reduction in load time - too small a difference for users to consciously notice - produced measurable revenue effects.

Return visit behavior. Akamai's research found that 79% of shoppers who experience poor site performance say they will not return to that site to purchase. A slow experience does not just cost the current visit; it damages the relationship with the user going forward.

Perception and trust. Users rate slower sites as less trustworthy and less professional, even when they cannot articulate why. This is consistent with Jakob Nielsen's response time research from the 1990s (still validated in modern contexts): responses under 100ms feel immediate, responses under 1 second keep the user's flow of thought uninterrupted, and 10 seconds is the limit for holding attention. Above that limit, the user's thought process is disrupted.

Who Slow Pages Hurt Most

The users who are most harmed by poor performance are often the users who are most valuable to reach:

Mobile users on cellular connections. A page that loads in 1.5 seconds on a fiber-connected desktop may take 6-8 seconds on a 3G mobile connection. Mobile users represent more than 60% of web traffic globally. In emerging markets and for many demographics in developed markets, mobile on cellular is the primary (or only) way people access the web.

First-time visitors. Returning visitors benefit from browser cache, which stores previously downloaded resources. A repeat visitor to a well-cached site may load it 80% faster than on the first visit. First-time visitors experience the full cold-load time. Since user acquisition campaigns, search traffic, and word-of-mouth referrals bring predominantly first-time visitors, acquisition channel effectiveness is directly affected by first-load performance.

Users in high-latency environments. Corporate VPNs, satellite internet, congested public WiFi, and shared mobile connections all increase latency even when bandwidth is adequate. Round-trip times of 300-500ms on high-latency connections add to every HTTP request and compound through the page loading sequence.
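To see how round trips compound, it helps to estimate a rough lower bound on the delay caused by latency alone. The sketch below is an illustrative model, not a measurement. It assumes each link in a dependency chain (for example HTML, then a stylesheet it references, then a font the stylesheet references) costs at least one round trip, and that each new origin costs extra round trips for connection setup. The function name and the default setup cost of two round trips (one TCP handshake plus one TLS 1.3 handshake) are assumptions; DNS lookups, server processing, and transfer time are ignored.

```typescript
// Rough lower bound on load delay caused by latency alone.
// Assumption: every dependent request in a chain waits one full round trip,
// and every new origin adds `setupRoundTrips` round trips before its first request.
function latencyFloorMs(
  rttMs: number,
  chainDepth: number,
  newOrigins: number,
  setupRoundTrips: number = 2, // assumed: TCP handshake + TLS 1.3 handshake
): number {
  if (rttMs < 0 || chainDepth < 0 || newOrigins < 0 || setupRoundTrips < 0) {
    throw new RangeError("inputs must be non-negative");
  }
  const requestRoundTrips = chainDepth;
  const connectionRoundTrips = newOrigins * setupRoundTrips;
  return rttMs * (requestRoundTrips + connectionRoundTrips);
}

// HTML -> CSS -> font (depth 3), served from two origins, at 400 ms round-trip time.
const highLatencyFloor = latencyFloorMs(400, 3, 2); // 400 * (3 + 4)
// The same page at 30 ms round-trip time.
const lowLatencyFloor = latencyFloorMs(30, 3, 2); // 30 * (3 + 4)
```

Under these assumptions the same page pays about 2.8 seconds of pure waiting at 400ms round-trip time, versus about 0.2 seconds at 30ms, regardless of available bandwidth.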

"Performance is not the opposite of user experience. Failure to load is the worst user experience possible. Speed is a feature that serves every user, on every page, on every visit. The false dichotomy between performance and features leads teams to sacrifice speed for features that the users who matter most never reach." - Google Web Performance team

| Performance Decision | Performance Cost | UX Benefit | Better Alternative |
|---|---|---|---|
| Full-page background video | Very high (100-500 KB+) | Visual impact | Compressed WebP loop, poster image |
| Third-party chat widget (always-on) | High (50-200 KB) | Support accessibility | Lazy load on scroll or click |
| Custom web font (full set) | Medium (100-400 KB) | Brand expression | Subset font, system font fallback |
| Embedded social feeds | High (API calls + JS) | Social proof | Static screenshots, lazy load |
| Full-resolution hero images | High (500 KB-2 MB) | Visual quality | WebP, srcset, responsive images |
| Analytics + tag manager stack | Medium (50-150 KB) | Measurement | Consolidate tags, defer non-critical |

The Genuine Tensions

Despite the argument that performance is part of user experience rather than opposed to it, genuine tensions do exist. Understanding them precisely is necessary for making good decisions.

Feature Richness vs. Initial Load Time

Some features genuinely require substantial code or data to function. A data visualization that allows users to explore complex datasets, an interactive map with real-time overlay data, a collaborative document editor with offline capability - these provide real value and require meaningful resources to deliver.

The tension is not imaginary: adding these features does slow initial load times. The question is whether the feature is valuable enough to the users who reach the page to justify the cost to users who leave because of the additional delay.

The key insight: this is not a binary choice between the feature and its absence. It is a design question about when and how the feature loads. A data visualization can be designed to load its visualization component asynchronously, after the surrounding content is visible and readable. The user sees the article immediately, and the visualization loads a moment later. This is meaningfully better than blocking the entire page on the visualization download.

The facade pattern generalizes this approach: show a lightweight placeholder (a thumbnail, a loading state, a static preview) that loads immediately, and load the expensive component only when the user indicates intent to interact with it. A YouTube embed that would add 500 KB and significant render time can be replaced with a thumbnail image and a play button; the actual iframe loads only when clicked. Users who scroll past the embed never pay the performance cost.
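A minimal sketch of the facade pattern for a video embed might look like the following. It keeps the decision logic (show a poster now, mount the iframe once on first click) separate from the DOM so it can be read and tested on its own. The names `createFacade` and `EmbedSpec`, the `mount` callback, and the specific thumbnail and embed URL patterns are assumptions for illustration, not an official embed API.

```typescript
// Facade sketch: render a cheap poster now, mount the real embed on first click.
// URL patterns and names are illustrative assumptions.

interface EmbedSpec {
  src: string;
  title: string;
  allow: string;
}

interface Facade {
  posterUrl: string;
  activate: () => void;
}

function createFacade(
  videoId: string,
  title: string,
  mount: (spec: EmbedSpec) => void,
): Facade {
  const id = encodeURIComponent(videoId);
  let activated = false;
  return {
    // Lightweight image shown immediately in place of the iframe.
    posterUrl: `https://i.ytimg.com/vi/${id}/hqdefault.jpg`,
    activate: (): void => {
      if (activated) return; // Mount the heavy embed at most once.
      activated = true;
      mount({
        src: `https://www.youtube-nocookie.com/embed/${id}?autoplay=1`,
        title,
        allow: "autoplay; encrypted-media; picture-in-picture",
      });
    },
  };
}

// Possible browser wiring, where `placeholder` is the poster element:
//
// const facade = createFacade("VIDEO_ID", "Product demo", (spec) => {
//   const iframe = document.createElement("iframe");
//   iframe.src = spec.src;
//   iframe.title = spec.title;
//   iframe.allow = spec.allow;
//   placeholder.replaceWith(iframe);
// });
// placeholder.addEventListener("click", facade.activate, { once: true });
```

Because the iframe is only created inside `mount`, a visitor who never clicks downloads the poster image and nothing else.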

Visual Richness vs. Asset Size

Modern design practices often involve large, high-quality images, custom web fonts in multiple weights, video backgrounds, and complex CSS animations. These contribute to visual richness and brand expression, but they also contribute to page weight and load time.

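The tension is real but manageable through optimization. WebP and AVIF image formats deliver comparable photographic quality at substantially smaller file sizes than JPEG; savings of roughly 25-35% are commonly reported for WebP, and AVIF often compresses further, though results vary by image. Subsetting web fonts - including only the character sets actually used on the page - reduces font files from hundreds of kilobytes to tens of kilobytes. System fonts (fonts already on the user's device) eliminate web font download entirely. CSS animations that are limited to compositor-friendly properties such as transform and opacity can run off the main thread, so they do not compete with JavaScript execution.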

The design-performance conflict in practice is often not between design quality and performance, but between unoptimized assets and performance. A hero image that is 800 KB as a JPEG might be 150 KB as WebP - same visual quality, dramatically lower cost. The conflict disappears with proper optimization.

Third-Party Script Accumulation

The most common source of performance problems that teams are surprised to discover is third-party scripts: analytics platforms, advertising pixels, marketing automation tags, customer chat widgets, heat mapping tools, A/B testing platforms, social media share buttons, and embedded reviews.

Each of these is added by a different team for a different business purpose. Each seems small and justified in isolation. Collectively, they can add 1-2 MB of JavaScript and several seconds of load time, and they run on third-party infrastructure the development team does not control. A slow response from a third-party script server can delay the entire page rendering even for users on fast connections.

The genuine tension: these tools provide business value that is real and measurable. Analytics inform product decisions. Marketing pixels enable attribution. Chat widgets provide customer support. Removing them has costs.

The resolution requires measurement and prioritization. Audit every third-party script and quantify its performance cost, for example by comparing WebPageTest runs with and without the script's requests blocked, or by reviewing the third-party code audit in Lighthouse. Quantify its business value. Some scripts will clearly not justify their performance cost; remove them. Others are essential; load them asynchronously and defer their initialization until after the critical rendering path is complete.
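Deferring essential scripts until after the page has loaded can be sketched as below. This is one possible shape under stated assumptions, not a prescribed implementation: the function name `deferUntilLoaded` and its three injected callbacks are hypothetical, chosen so the scheduling logic does not depend on a browser being present.

```typescript
// Defer non-critical third-party scripts until the page has finished loading.
// The three callbacks are injected so the scheduling logic stays independent
// of the browser; all names here are hypothetical.
function deferUntilLoaded(
  pageIsLoaded: () => boolean,
  onPageLoad: (callback: () => void) => void,
  loadScript: (src: string) => void,
  sources: string[],
): void {
  const loadAll = (): void => sources.forEach((src) => loadScript(src));
  if (pageIsLoaded()) {
    loadAll(); // Page already complete: nothing left to protect.
  } else {
    onPageLoad(loadAll); // Otherwise wait for the load event.
  }
}

// Possible browser wiring (the example.com URL is a placeholder):
//
// deferUntilLoaded(
//   () => document.readyState === "complete",
//   (callback) => window.addEventListener("load", callback, { once: true }),
//   (src) => {
//     const script = document.createElement("script");
//     script.src = src;
//     script.async = true;
//     document.head.appendChild(script);
//   },
//   ["https://example.com/chat-widget.js"],
// );
```

With this wiring, the third-party request does not start until the page's own resources have finished loading, so a slow vendor server cannot delay initial rendering.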

Frameworks for Making the Tradeoff

Performance Budgets

A performance budget is a policy that specifies limits on performance metrics or resource sizes, enforced as part of the development process. When a budget exists, feature additions and performance are not in conflict - they are both constrained by the same policy, and the policy requires that adding weight must be accompanied by removing weight elsewhere.

Budget dimensions commonly defined:

JavaScript bundle size (compressed). A budget of 200 KB of JavaScript forces teams to consider the cost of every dependency addition. When a library that provides a feature would push the bundle over budget, the team must either optimize existing JavaScript to make room or find a lighter alternative. This is productive friction.

Total page weight. A budget of 1.5 MB for initial page load encompasses all resources: HTML, CSS, JavaScript, images loaded above the fold, and fonts. This creates a shared constraint across design and engineering.

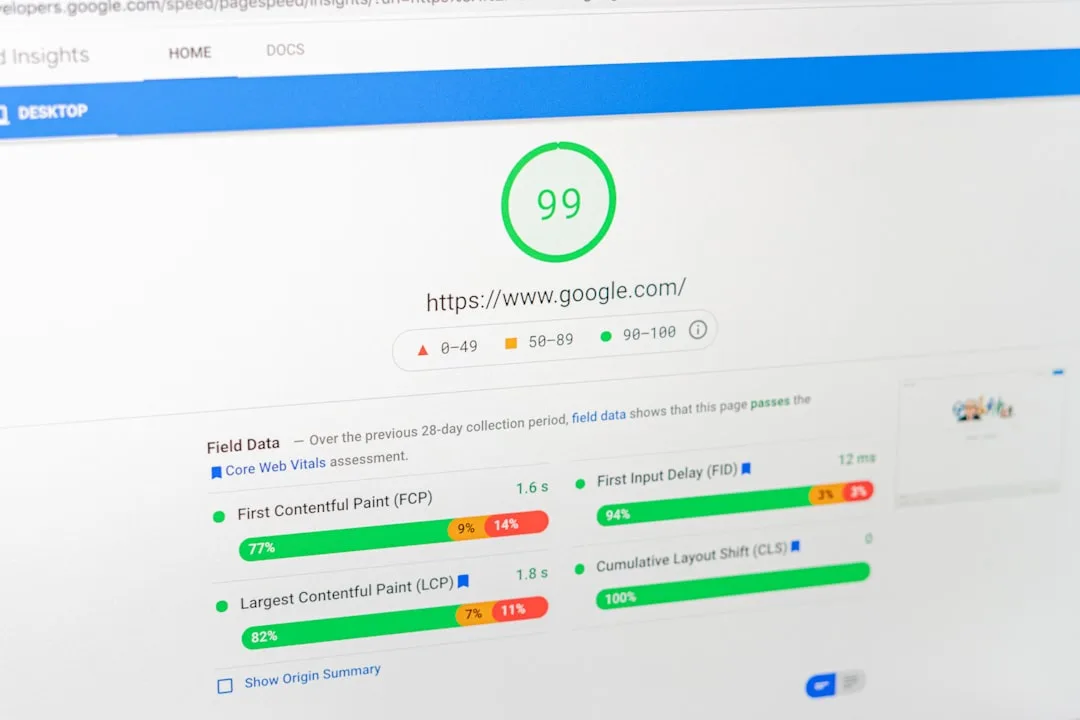

Core Web Vitals targets. Treating the "good" thresholds - LCP under 2.5 seconds, CLS under 0.1, INP under 200ms - as requirements rather than aspirations creates accountability for maintaining them. Automated lab tests in CI/CD pipelines can verify LCP and CLS and block deployments that would violate them; INP depends on real user interactions, so it is usually tracked with field data, with Total Blocking Time serving as a lab proxy.

The budget model is effective because it makes the tradeoff explicit and routine rather than exceptional. Teams that have budgets tend to evaluate feature-performance tradeoffs continuously; teams without budgets tend to accumulate performance debt and address it in infrequent firefighting efforts.
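A budget check of the kind described above can be sketched as a small function that a CI step might call. The limits mirror the figures used in this section (200 KB of JavaScript, 1.5 MB total weight, and the "good" Core Web Vitals thresholds); the metric names, data shapes, and the choice to treat a missing measurement as a violation are assumptions for illustration.

```typescript
// Compare measured values against a performance budget and list violations.
// Metric names and data shapes are hypothetical.

interface Budget {
  metric: string;
  limit: number;
  unit: string;
}

interface Measurement {
  metric: string;
  value: number;
}

const exampleBudgets: Budget[] = [
  { metric: "js-compressed", limit: 200, unit: "KB" },
  { metric: "total-page-weight", limit: 1500, unit: "KB" },
  { metric: "lcp", limit: 2500, unit: "ms" },
  { metric: "cls", limit: 0.1, unit: "score" },
  { metric: "inp", limit: 200, unit: "ms" },
];

function checkBudgets(budgets: Budget[], measurements: Measurement[]): string[] {
  const violations: string[] = [];
  for (const budget of budgets) {
    const match = measurements.filter((m) => m.metric === budget.metric)[0];
    if (match === undefined) {
      // Assumption: an unmeasured metric fails the check rather than passing silently.
      violations.push(`${budget.metric}: no measurement`);
    } else if (match.value > budget.limit) {
      violations.push(
        `${budget.metric}: ${match.value} > ${budget.limit} (${budget.unit})`,
      );
    }
  }
  return violations;
}
```

A pipeline step could fail the build whenever the returned list is non-empty, which is what turns the budget from a guideline into the routine constraint described above.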

Progressive Enhancement

Progressive enhancement is a development philosophy that structures sites in capability layers:

The base layer is semantic HTML that conveys content and structure without requiring CSS or JavaScript. This layer works in any browser, on any device, with any network condition. It is fast because it has minimal dependencies.

The second layer is CSS that applies visual presentation. It is loaded separately from HTML, allowing the HTML content to be accessible before styles apply.

The third layer is JavaScript that adds interactivity and dynamic behavior. It is loaded asynchronously after the core content is available. If JavaScript fails to load or executes slowly, the content is still accessible in its base form.

This architecture means that performance problems in the JavaScript layer do not prevent content from being usable - they degrade from a richer experience to a more basic one, rather than producing a blank page or spinner. Users on slow connections or older devices get a functional experience; users on fast connections and modern devices get the full experience.

Progressive enhancement is not about building sites that must work without JavaScript. It is about ensuring that the foundational content value is delivered quickly and reliably, with enhancements layered on top for users whose environment supports them.

The Measure-First Principle

The fundamental error in performance-feature tradeoff discussions is making decisions based on assumptions about user behavior rather than measurements. Assumptions like "this feature will increase engagement significantly" or "that performance impact is too small to matter" may both be wrong, but without measurement, the decision is made in the dark.

A/B testing for feature-performance tradeoffs enables evidence-based decisions. The test design: serve the feature-added version to some percentage of users, serve the original to the rest, and measure both the feature's intended effect (conversion rate, engagement, order value) and the performance-related effects (bounce rate, pages per session, return visits). The comparison reveals the net effect.

Example: An e-commerce team proposes adding a rich product video autoplay feature on product pages. They estimate it will increase purchase intent by 10% based on user research. The engineering team estimates it will add 1.2 seconds to mobile LCP.

Rather than arguing about the tradeoff in the abstract, they run an A/B test. The result: mobile bounce rate on product pages increases 8% (users leaving before interacting), while purchase rate for users who stay increases 9%. Net effect on mobile conversions: approximately 0.

On desktop, where the performance impact is minimal, the feature increases conversions. They implement the feature for desktop and use a static thumbnail for mobile.

This outcome could not have been determined without measuring both effects simultaneously in a controlled test.
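Whether an 8% rise in bounces and a 9% rise in purchase rate cancel out depends on the baseline bounce rate, which the example does not state. The sketch below works through the arithmetic under two assumptions: both changes are relative (an 8% increase turns a 50% bounce rate into 54%), and conversions are proportional to the share of visitors who stay multiplied by the purchase rate of those who stay. The function name and the baseline values are hypothetical.

```typescript
// Net change in conversions when a feature raises both the bounce rate and
// the purchase rate of the visitors who stay. All rates are fractions (0.5 = 50%).
function netConversionChange(
  baselineBounceRate: number,
  bounceIncrease: number, // relative, e.g. 0.08 for +8%
  purchaseRateIncrease: number, // relative, e.g. 0.09 for +9%
): number {
  const stayBefore = 1 - baselineBounceRate;
  const stayAfter = 1 - baselineBounceRate * (1 + bounceIncrease);
  if (stayBefore <= 0 || stayAfter < 0) {
    throw new RangeError("bounce rate out of range");
  }
  // Conversions scale with (share who stay) * (purchase rate of those who stay).
  return (stayAfter / stayBefore) * (1 + purchaseRateIncrease) - 1;
}

const netAtHalf = netConversionChange(0.5, 0.08, 0.09); // roughly +0.3%
const netAtSeventy = netConversionChange(0.7, 0.08, 0.09); // roughly -11%
const netAtThirty = netConversionChange(0.3, 0.08, 0.09); // roughly +5%
```

With a baseline bounce rate near 50%, the two effects roughly cancel, which matches the example's result. At a 70% baseline the same feature would be a net loss of about 11%, and at 30% a net gain of about 5%, which is why the measurement has to be made on the actual audience.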

Common Decision Errors

Optimizing for Developer Experience

Developer machines are typically recent laptops or desktops with 8-16 GB RAM, fast SSDs, and high-speed broadband connections. Pages that load quickly in local development may load slowly for users on older hardware and cellular connections.

The corrective practice: regularly test on representative user hardware. Chrome DevTools' Network and CPU throttling settings simulate mobile network conditions and slower processors. Google's PageSpeed Insights provides field data from actual Chrome users - real devices, real connections, real geographic locations. This field data is the ground truth; lab testing on developer hardware is an approximation.

Treating All Performance Budget Items as Equal

Not all page weight has equal performance impact. 100 KB of render-blocking JavaScript in the <head> is far more damaging to perceived performance than 100 KB of lazy-loaded images below the fold. The former blocks rendering entirely; the latter is loaded after the page is visually complete.

The performance budget should distinguish between critical-path resources (HTML, critical CSS, above-fold images, essential JavaScript) and non-critical resources (below-fold images, deferred scripts, third-party tools). Strict limits on critical-path resources have high payoff; limits on non-critical resources matter less.

Ignoring Cumulative Effects

Each individual feature addition may seem to have acceptable performance impact. The cumulative effect of 15 modest additions over 18 months may be a site that is 3x slower than when it launched. For instance, fifteen additions of 200ms each would turn a 1.5-second page into a 4.5-second one.

The cumulative problem is only visible when you compare current performance to baseline performance over time. Teams that track Core Web Vitals trends - not just current scores but how they have changed over quarters - can identify when incremental additions have crossed a threshold.
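Comparing against a fixed baseline rather than against the previous period can be sketched as follows. The data shape, the function name, the 20% tolerance, and the quarterly figures are all hypothetical; the point is only that each quarter-on-quarter change in the sample data looks modest while the drift from the baseline does not.

```typescript
// Flag periods whose 75th-percentile LCP has drifted too far from the baseline.
// The first entry in the history is treated as the baseline (an assumption).

interface Snapshot {
  period: string;
  p75LcpMs: number;
}

function findRegressions(history: Snapshot[], maxSlowdown: number = 0.2): Snapshot[] {
  const baseline = history[0];
  if (baseline === undefined) return [];
  const limit = baseline.p75LcpMs * (1 + maxSlowdown);
  return history.filter((snapshot) => snapshot.p75LcpMs > limit);
}

// Hypothetical quarterly data: no single step exceeds about 13%,
// but the last quarter is about 44% slower than the first.
const lcpHistory: Snapshot[] = [
  { period: "2024-Q1", p75LcpMs: 1800 },
  { period: "2024-Q2", p75LcpMs: 1900 },
  { period: "2024-Q3", p75LcpMs: 2050 },
  { period: "2024-Q4", p75LcpMs: 2300 },
  { period: "2025-Q1", p75LcpMs: 2600 },
];

const flaggedPeriods = findRegressions(lcpHistory).map((s) => s.period);
```

Here the last two quarters are flagged, even though a check that only compared each quarter with the one before it would have passed every time.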

Under-Weighting the Performance of Core Pages

Performance budgets and optimization efforts naturally concentrate on the homepage (the most-visited page and the first impression for many users). But for most sites, the pages that drive the most business value are not the homepage: they are category pages, product pages, article pages, or landing pages where users make decisions.

The users who reach product pages or landing pages are at a more advanced stage in their journey - they have higher intent and lower tolerance for friction. Performance degradation on these pages is disproportionately costly to business outcomes.

The Sustainable Balance

The sites that sustain good performance over years - The Guardian, Shopify's merchant storefronts, major e-commerce platforms, leading content sites - share practices that make sustainable balance possible rather than requiring constant remediation:

Performance is treated as a product requirement. In product prioritization, performance improvements compete for resources alongside feature development, with the same rigor of evidence (what business outcome does this improvement achieve?) rather than being treated as technical debt to address when time allows.

Monitoring is continuous. Real User Monitoring (RUM) captures actual Core Web Vitals from real users on real devices, aggregated and tracked over time. Performance changes that are not immediately visible in synthetic testing show up in RUM data as they affect actual users. Anomalies trigger investigation.

Third-party scripts are governed. A policy exists for what third-party scripts may be added, who approves additions, and how their performance costs are evaluated against their business value. The governance prevents uncontrolled accumulation.

New features are evaluated for performance impact before launch. Performance testing is part of the definition of done for new features - not an afterthought after launch, when the political cost of reverting is higher.
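The continuous-monitoring practice above usually reduces to aggregating field samples and rating the result. The sketch below assumes the samples have already been collected from real users (collection itself is out of scope here) and rates the 75th percentile, the percentile Google uses when assessing Core Web Vitals. The "good" limits are the ones cited earlier in this article; the "poor" limits are the published Core Web Vitals boundaries. The function names and the nearest-rank percentile method are choices made for illustration.

```typescript
// Aggregate field samples to the 75th percentile and rate the result.

type Metric = "LCP" | "CLS" | "INP";
type Rating = "good" | "needs improvement" | "poor";

// LCP and INP in milliseconds; CLS is a unitless score.
const vitalsThresholds: Record<Metric, { good: number; poor: number }> = {
  LCP: { good: 2500, poor: 4000 },
  CLS: { good: 0.1, poor: 0.25 },
  INP: { good: 200, poor: 500 },
};

function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new RangeError("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  // Nearest-rank method: smallest value with at least p% of samples at or below it.
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

function rate(metric: Metric, value: number): Rating {
  const limits = vitalsThresholds[metric];
  if (value <= limits.good) return "good";
  if (value <= limits.poor) return "needs improvement";
  return "poor";
}

// Hypothetical LCP samples (ms) from eight page views.
const lcpSamples = [1200, 1800, 2100, 2400, 2600, 3100, 3900, 5200];
const medianRating = rate("LCP", percentile(lcpSamples, 50));
const p75Rating = rate("LCP", percentile(lcpSamples, 75));
```

In this sample the median looks "good" while the 75th percentile "needs improvement", which illustrates why tracking a higher percentile surfaces the slower experiences that an average or median hides.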

The frame that makes this sustainable is treating performance not as a sacrifice for quality but as a component of quality. Users who never see a feature because they left before it loaded did not benefit from it. The performance cost of every feature is a direct cost to the experience of some users; that cost belongs in the evaluation of whether the feature is worth building.

See also: Page Speed Optimization Explained, Technical SEO Explained, and How Search Engines Work.

What Researchers and Industry Studies Reveal About Performance-UX Tradeoffs

The performance-versus-user-experience debate is grounded in a body of research that spans academic human-computer interaction, commercial experimentation, and large-scale observational studies. The most useful findings come from named researchers with access to real user behavior data at scale.

Jakob Nielsen's Response Time Research (1993, Updated 2014): Jakob Nielsen, co-founder of the Nielsen Norman Group and one of the foremost usability researchers in human-computer interaction, established the foundational thresholds for response time perception in his 1993 book "Usability Engineering." Nielsen identified three key thresholds: 0.1 seconds (the limit at which a response feels instantaneous), 1.0 second (the limit at which users' flow of thought is uninterrupted), and 10 seconds (the limit of users' attention before they abandon the task).

These thresholds, derived from laboratory experiments with users performing specific tasks, remained empirically valid when Nielsen's group updated them in a 2014 article for modern web contexts. The critical implication for performance-UX tradeoffs: features that push perceived response time past the 1-second threshold do not feel like enhanced experiences - they feel like broken ones.

Nielsen's thresholds provide the theoretical basis for why even 200-300ms of added delay can matter: when the extra delay pushes a response past one of these limits, the feature that caused it can be a net negative for the experience despite its functional value.

Google's 2018 Mobile Page Speed Study: Google's research team, working with data from the Chrome User Experience Report, published findings in 2018 (summarized on the Think with Google platform by researcher Daniel An and Pat Meenan of WebPageTest) analyzing the relationship between mobile page load time and user abandonment. The study analyzed millions of real mobile sessions and found that as page load time increased from 1 to 3 seconds, bounce probability increased 32%; from 1 to 5 seconds, bounce probability increased 90%; from 1 to 6 seconds, bounce probability increased 106%; from 1 to 10 seconds, bounce probability increased 123%.

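These findings matter for the performance-UX tradeoff framing because they quantify how much of the audience is put at risk before any feature can be evaluated: a feature that pushes mobile load time from 1 second to 5 seconds raises the probability of a bounce by 90% relative to the 1-second baseline. This is a relative increase in bounce probability, not a loss of 90% of the audience; the absolute share of visitors lost depends on the baseline bounce rate.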

Deloitte's "Milliseconds Make Millions" Study (2020): Deloitte Digital, led by a research team including Damian Radcliffe (Research Professor, University of Oregon School of Journalism) as an external collaborator, conducted a multi-sector commercial study analyzing performance improvements across 37 brands in retail, travel, luxury goods, and lead generation. The study, commissioned by Google, analyzed real transaction data rather than survey responses.

Key findings: a 0.1-second improvement in mobile site load time increased retail conversion rates by 8.4% and average order value by 9.2%. For travel booking sites, the same 0.1-second improvement increased conversion by 10.1%. The study's significance for the performance-UX tradeoff is its sub-perceptual finding: users cannot consciously detect a 100-millisecond difference, yet their commercial behavior changes measurably.

This suggests that performance optimization operates partly below the threshold of conscious user experience, which makes performance-UX tradeoffs difficult to evaluate through user surveys or usability studies alone; behavioral measurement is needed to capture the effect.

Tim Kadlec's Analysis of Feature Adoption Versus Performance Cost: Tim Kadlec, a web performance researcher and consultant who has served on the HTTP Archive advisory committee, published research in 2020 analyzing the relationship between feature adoption rates and performance costs across a sample of 5 million websites from the HTTP Archive. Kadlec's analysis found that the most commonly adopted third-party scripts - Google Analytics, Facebook Pixel, and advertising platforms - collectively added a median of 800ms to page load times on mobile, yet were present on over 60% of analyzed sites.

The research framed the performance-UX tradeoff as an asymmetric information problem: teams that add features see their direct business benefit clearly (analytics data, advertising attribution, conversion tracking) but do not see the distributed cost paid by every user who loads the page. Kadlec's framework suggests that performance budgets are necessary precisely because without them, the visibility of individual feature value systematically outweighs the invisibility of cumulative performance cost.

Annie Sullivan and the Core Web Vitals User Research: Annie Sullivan, a software engineer on Google's Chrome Speed Metrics team, documented the user research methodology behind Core Web Vitals threshold selection in a 2020 web.dev post co-authored with Hongbo Song. Sullivan's team analyzed the Chrome User Experience Report dataset to identify the performance thresholds at which measured user satisfaction (as proxied by session abandonment and engagement signals) showed statistically significant changes.

The research found that the relationship between load time and user abandonment was not linear but showed inflection points - thresholds beyond which abandonment rates increased sharply rather than gradually. These inflection points, identified empirically from real user data across billions of sessions, became the "Good" and "Poor" thresholds for Core Web Vitals metrics.

Sullivan's research methodology is directly relevant to performance-UX tradeoff decisions: it demonstrates that the relationship between performance and user behavior is non-linear, with specific threshold effects that make the cost of being just above a threshold disproportionately higher than the cost of being just below it.

Real-World Performance-UX Tradeoff Case Studies with Measured Outcomes

Abstract principles about performance-feature tradeoffs become actionable when examined through documented cases where organizations measured the actual effects of specific decisions.

Walmart's 1-Second Conversion Study (2012): Walmart Labs, led by performance engineering teams documented in several engineering blog posts on walmart.io, conducted one of the earliest large-scale commercial studies of performance-conversion relationships. Walmart found that each 1-second improvement in page load time increased conversion rates by 2% and reduced bounce rates by measurable amounts.

More significantly for the performance-UX tradeoff framing, Walmart tested specific feature additions alongside performance measurement. A product page recommendation widget that the UX team estimated would increase cross-selling conversions by 5% was added and measured: the widget added 1.3 seconds of load time on mobile, which reduced overall conversion by 2.6% (1.3 x 2% per second).

The net effect was negative. The recommendation engine was redesigned to load asynchronously, eliminating the load-time penalty while preserving the cross-selling functionality. This case is significant because it documents an actual A/B-tested feature where the performance cost exceeded the UX benefit, and where redesigning the loading strategy resolved the tradeoff without sacrificing either goal.

The Guardian's Progressive Enhancement Success (2018): The Guardian, the UK news publication with over 180 million monthly visitors, published a detailed engineering case study in 2018 describing their approach to managing performance-feature tradeoffs at scale. Guardian's frontend engineering team, led by developer Phil Wills, implemented a strict progressive enhancement architecture: core article content was server-rendered HTML requiring no JavaScript, with interactive features (commenting, social sharing, recommendation widgets) loaded as enhancements after the core content was available.

The team measured the effect of this architecture by comparing user sessions on pages where JavaScript enhancement loaded successfully versus sessions where it did not (network conditions or older devices preventing enhancement). Guardian found that users who received only the server-rendered baseline content had time-on-page within 8% of users who received the full enhanced experience - suggesting that the core content value was being delivered effectively without the enhancements.

This finding informed their strategy: enhancements were evaluated against whether they produced behavioral improvements exceeding 8%, setting a practical threshold for which features justified their loading cost.

Etsy's Feature Flag Performance Framework: Etsy, the e-commerce marketplace, documented their approach to performance-feature tradeoffs in a 2014 engineering blog post authored by Lara Hogan (then Senior Engineering Manager, now the author of "Demystifying Public Speaking" and a prominent writer on engineering management). Etsy implemented a system of performance budgets enforced through their continuous integration pipeline: any code change that caused the homepage to exceed performance budget thresholds triggered an automated warning requiring engineer approval before deployment.

Hogan reported that the discipline of performance budgets changed the organizational dynamic around feature additions: instead of performance being a concern raised by the engineering team after a feature was designed, the budget made performance a constraint in the design conversation. Over two years, Etsy's homepage load time (measured at the 95th percentile for real users) improved from 8 seconds to 3.7 seconds despite dozens of new feature additions, demonstrating that performance budgets can enable feature growth without performance degradation.

Google's Image Search Lazy Loading Experiment (2015): Google's own image search team documented a performance-feature tradeoff experiment in an internal case study summarized by Ilya Grigorik in a Google I/O talk. Google's image search results page had traditionally loaded all result thumbnails immediately on page load, on the theory that users wanted instant access to all results.

An experiment tested lazy loading thumbnails below the visible viewport, loading them only as users scrolled. The hypothesis was that this would improve initial page load performance (a measurable UX improvement for the loading experience) at the possible cost of slower image availability while scrolling (a potential UX degradation for the browsing experience). Results: initial page load time improved 20-35% on mobile.

User satisfaction with search results did not decline measurably. The scroll experience showed no detectable degradation because thumbnails loaded faster than users scrolled past them on typical mobile connections. This experiment was significant because Google's own product team discovered that their assumption (all thumbnails immediately available improves UX) was contradicted by measured user behavior - the loading experience mattered more than the immediate availability assumption.

Booking.com's A/B Testing Infrastructure for Performance: Booking.com, which processes over 1.5 million room nights booked daily, has described their approach to performance-UX tradeoffs in engineering talks at Velocity Conference (2016, 2018). Booking.com's performance team, led by engineering researchers including Daan Verbaarschot, implemented a framework for measuring both the performance impact and the business impact of every significant frontend change through simultaneous A/B testing.

Their data, presented at Velocity 2016, showed that a 100ms improvement in load time increased conversion rates by approximately 0.5% across their booking flow - consistent with the Amazon and Deloitte research. More practically, Booking.com's A/B testing framework revealed several cases where features that product managers assumed improved conversion actually degraded it when performance impact was included in the measurement.

A promotional banner system that product management estimated would increase bookings 3% showed net-zero effect in A/B testing once the 300ms load time penalty on mobile was included in the conversion calculation. The framework enabled data-driven resolution of performance-UX tradeoffs that had previously been resolved through organizational hierarchy rather than evidence.

References

- Google. "Why Does Speed Matter?" web.dev. https://web.dev/why-speed-matters/

- Google. "Find Out How You Stack Up to New Industry Benchmarks for Mobile Page Speed." Think with Google, 2018. https://www.thinkwithgoogle.com/marketing-strategies/app-and-mobile/mobile-page-speed-new-industry-benchmarks/

- Deloitte. "Milliseconds Make Millions: How Mobile Speed Helps Drive Business Success." deloitte.com, 2020. https://www2.deloitte.com/ie/en/pages/consulting/articles/milliseconds-make-millions.html

- Nielsen, Jakob. "Response Times: The 3 Important Limits." Nielsen Norman Group. https://www.nngroup.com/articles/response-times-3-important-limits/

- Google. "Core Web Vitals." web.dev. https://web.dev/vitals/

- Grigorik, Ilya. High Performance Browser Networking. O'Reilly Media, 2013. https://hpbn.co/

- Shopify Engineering. "Storefronts Performance Team." shopify.engineering. https://shopify.engineering/

- HTTP Archive. "State of the Web 2024." httparchive.org. https://httparchive.org/reports/state-of-the-web

- Akamai. "Retail Web Performance Expectations." akamai.com. https://www.akamai.com/our-thinking/the-akamai-security-intelligence-group/online-retail-performance-report-apdex-data

- WebPageTest. "Website Performance Testing." webpagetest.org. https://www.webpagetest.org/

Frequently Asked Questions

What is the relationship between performance and user experience?

Performance and user experience are deeply interconnected: performance is a core component of UX, not separate from it.

Performance as UX: Speed affects user perception, satisfaction, and behavior. Research shows that 53% of mobile users abandon sites that take over 3 seconds to load, and that every 100ms of delay can reduce conversion rates by up to 7%. Fast sites have lower bounce rates, higher engagement, and better conversion. From this perspective, performance optimization is UX optimization. A beautiful, feature-rich site that loads slowly provides a poor experience.

But performance isn't the only UX dimension. User experience also includes functionality (can users accomplish their goals?), visual design (is it appealing and clear?), content quality (is it valuable and understandable?), interactivity (are interfaces responsive and intuitive?), and accessibility (can all users access and use it?).

The tension: Features that enhance UX often hurt performance. Rich animations, high-resolution images, interactive elements, third-party tools (chat, analytics, social), and personalized content all add code, assets, and processing that slow sites down.

The tradeoff spectrum: At one end is pure performance: minimal HTML, no images, no JavaScript, zero third-party code. Such a site is lightning fast but lacks the features, visual appeal, and interactivity users expect. Think 1990s text-only websites. At the other end is pure features: every possible interactive element, high-res media, complex animations, multiple third-party integrations. Such a site offers rich functionality but is potentially slow and frustrating to use on slower connections or devices.

The balance: Most successful sites find a middle ground. Core performance standards are met (Core Web Vitals in the 'good' range). Features that genuinely improve UX are included. Features that provide marginal value but significant performance cost are excluded or deferred. Optimization continues so that the balance holds as features grow.
The key insight: Performance and UX aren't opposing forces; both are components of holistic user experience. The question isn't 'performance or UX?' but 'which features provide enough UX value to justify their performance cost?'

Context matters: The right balance depends on your audience, use case, and business model. Content sites (blogs, news): performance is heavily weighted, because users come for information and expect speed. E-commerce: a balance, because visuals and features matter for conversion but slow sites lose sales. SaaS applications: features are weighted higher, because users expect rich functionality and tolerate some loading for powerful tools. Mobile-first audiences: performance is critical, because mobile users often have slower connections and devices.

Understanding the tradeoffs: Every feature decision is a tradeoff. Adding a feature improves one dimension of UX (functionality, aesthetics) but potentially harms another (speed, simplicity). The art is determining which tradeoffs are worth making for your specific context and users.

When should you prioritize performance over features?

Certain contexts demand prioritizing speed over functionality richness. Prioritize performance when:

1) Your audience has limited connectivity or devices: Users on slow mobile connections or older devices suffer disproportionately from heavy sites. If analytics show a high percentage of mobile traffic (especially from regions with slower infrastructure), users on 3G or slower connections, or older devices with limited processing power, then performance should be a top priority. Heavy JavaScript, large images, and complex rendering hit these users hardest.

2) Content consumption is the primary goal: If users come to read, watch, learn, or consume information, speed is critical. They're focused on accessing content quickly, not exploring features. Examples: news sites, blogs, documentation, educational content, recipe sites. Users have high expectations for speed; they just want the article or recipe, not interactive features.

3) You're in a competitive, commoditized market: If competitors offer similar content or products, speed becomes a differentiator. Users will choose the faster option when functionality is equivalent. Example: Search for a common question (how to boil eggs). The first result loads in 1 second, the second in 4 seconds. Users will consume the first result even if the second is slightly more comprehensive. Speed wins when value is similar.

4) Conversion is time-sensitive: For transactional flows (checkout, forms, bookings), speed directly impacts conversion. Users in 'purchasing mode' have high intent but low patience. Slow checkout flows cause cart abandonment. Slow forms reduce submissions. Fast, frictionless experiences maximize conversion.

5) You're targeting broad, unknown audiences: If you don't control your user base (not a logged-in app with known users), assume diversity in devices, connections, and contexts. Optimize for the worst reasonable case, not just your development environment.
6) Performance is currently poor: If your site falls in the 'poor' range for Core Web Vitals (LCP above 4s, FID above 300ms or INP above 500ms, CLS above 0.25), performance should be priority #1. Note that INP replaced FID as a Core Web Vital in March 2024. At these levels you're in the 'actively harming UX' range, and no features justify that.

How to prioritize performance:

Set performance budgets: Define maximum sizes for pages, JavaScript bundles, images. Enforce these budgets in development (build fails if exceeded).

Ruthlessly cut low-value features: Audit every script, widget, and feature. Ask: Does this provide significant user value? If not, remove it.

Defer non-critical resources: Load only what's needed for initial page render. Lazy-load images below the fold. Defer third-party scripts until after page interaction. Load features on demand (click to activate chat widget, not auto-load).

Optimize what you keep: Compress images, use modern formats (WebP, AVIF). Minify and bundle JavaScript/CSS. Use CDNs for faster delivery. Implement caching aggressively.

Test on real devices and connections: Use Chrome DevTools throttling or real device testing labs. Don't optimize for just your fast laptop on office wifi.

Measure and monitor: Track Core Web Vitals in the field (real user data). Set alerts for performance regressions. Review performance in every deploy.

The mindset shift: Treat performance as a feature, not an afterthought. When proposing new features, include performance impact in the decision. Default to saying 'no' to features unless value clearly exceeds performance cost.

When performance priority makes sense: You're building a minimum viable product (lean and fast beats feature-rich and slow for validation). You've accumulated technical debt (performance has degraded over time from feature accretion). Users are complaining about speed (a direct signal they value speed over current features). You're launching in new markets with different infrastructure (expanding to regions with slower mobile networks).

Prioritizing performance often means building less, but what you build works better for more people. That's a worthy tradeoff.
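
The budget enforcement mentioned above ("build fails if exceeded") can be sketched as a small check that a CI step might run after the build. This is an illustration under stated assumptions: the `Budget` shape, the `checkBudget` function, and the byte limits are hypothetical and do not come from any particular tool.

```typescript
// Hypothetical budget shape: maximum transfer sizes in bytes for one page.
interface Budget {
  totalBytes: number;
  scriptBytes: number;
  imageBytes: number;
}

// Measured sizes use the same shape as the budget.
type Measurement = Budget;

// Returns one message per exceeded limit. An empty array means the page
// is within budget.
function checkBudget(measured: Measurement, budget: Budget): string[] {
  const violations: string[] = [];
  for (const key of Object.keys(budget) as (keyof Budget)[]) {
    if (measured[key] > budget[key]) {
      violations.push(`${key}: ${measured[key]} exceeds limit ${budget[key]}`);
    }
  }
  return violations;
}

// Illustrative limits and measurements (assumed values, not recommendations).
const budget: Budget = { totalBytes: 1000000, scriptBytes: 200000, imageBytes: 500000 };
const measured: Measurement = { totalBytes: 940000, scriptBytes: 260000, imageBytes: 410000 };

const violations = checkBudget(measured, budget);
if (violations.length > 0) {
  console.log("Performance budget exceeded:\n" + violations.join("\n"));
  // In a CI script, exiting with a non-zero code here would fail the build.
}
```

Keeping the check as a pure function that returns messages, rather than exiting directly, makes it easy to reuse the same logic in a build script, a pull request bot, or a dashboard.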

When should you prioritize features or functionality over pure performance?

Features sometimes justify performance costs when they provide significant UX or business value. Prioritize features when:

1) Core functionality requires it: Some features are central to the product's value proposition. Removing or degrading them undermines the product's purpose. Examples: Video conferencing tools need real-time video/audio (heavy bandwidth and processing). Design tools need complex rendering and interactions. E-commerce needs product images (optimized, but you can't remove them). These features define the product. Performance must be optimized within the constraint of delivering core functionality, but the features can't be sacrificed.

2) Differentiation depends on superior features: If competitors have fast but basic offerings, rich features can be your competitive advantage, if executed well. Example: Notion vs plain text editors. Notion is heavier but offers richer functionality (databases, collaboration, embedding). Users accept some performance cost for significantly more capability. The key: features must provide enough value that users willingly trade some speed.

3) Your audience expects and values richness: Sophisticated users or specific contexts value features over speed. Examples: Professional tools (Photoshop, CAD software, IDEs): users expect power and depth. Gaming and entertainment: visual fidelity and interactivity are the product. Luxury e-commerce: high-end product presentation justifies heavier imagery and interactivity. These audiences tolerate (or don't notice on their high-powered devices) performance costs in exchange for rich experiences.

4) Features directly drive revenue: If a feature demonstrably increases conversion, engagement, or retention, some performance cost may be justified. Example: Adding a 'complete the look' recommendation engine to product pages. This adds JavaScript and API calls (performance cost). But if it increases average order value by 15%, the tradeoff is worth it.
Measure ruthlessly: if the feature doesn't deliver business value, cut it.

5) You're in the optimization phase, not launch phase: Early products benefit from being lean and fast. Once you have product-market fit and understand user needs, strategic feature additions make sense. Progression: MVP: minimal, fast, validate core value. Growth: add features users request and that drive engagement/conversion. Maturity: balance feature richness with performance through ongoing optimization.

6) Removing the feature would significantly harm UX: Some features, while heavy, prevent worse UX problems. Examples: Form validation: JavaScript for real-time validation adds weight but prevents frustrating submission errors. Search autocomplete: improves navigation and reduces user effort finding content. Error handling and user feedback: ensures users understand what's happening. These features aren't optional; they're core UX that happens to require code.

How to prioritize features responsibly:

Measure feature value: Track engagement with features (how many users use it? how often?). Measure business impact (does it increase conversion, retention, revenue?). Gather qualitative feedback (do users request it, praise it, or ignore it?).

Optimize the features you keep: Don't accept the naive implementation. Use code splitting (load feature code only when needed). Lazy load below the fold. Optimize assets (compress images, use efficient formats). Remove unused code and dependencies. Use efficient algorithms and rendering.

Implement progressive enhancement: Deliver core functionality fast (basic HTML/CSS). Enhance with JavaScript for richer interactions once loaded. Ensure the site works (even if not ideally) if JavaScript fails or loads slowly.

Set performance budgets even when adding features: Allocate performance budget to high-value features. When adding new features, optimize or remove old features to stay within budget.
Test feature impact: Before launching a feature, measure performance impact in staging. Use A/B testing to validate that business impact justifies performance cost. Monitor after launch and roll back if impact is negative.

The balance: Features should enhance UX, not merely add complexity. Every feature must justify its existence through user value or business impact. If a feature isn't used, doesn't drive behavior you want, or doesn't clearly improve the experience, remove it regardless of how 'cool' it is.

When feature priority makes sense: You're building a competitive product where functionality is the differentiator. User research shows clear demand for specific features. Business metrics prove features drive conversions or engagement. You have the engineering resources to optimize features properly. Your audience uses high-end devices and connections (not relying on mobile with spotty connectivity).

The right approach isn't 'always prioritize performance' or 'always add features'. It's 'add features that provide clear user or business value and optimize them to minimize performance impact.' Then measure whether the tradeoff was worth it.
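
The advice above to load feature code only when needed can be sketched with a small helper. This is a minimal illustration, not a prescribed implementation: `lazyOnce`, `ChatWidget`, and `loadChat` are hypothetical names, and the inline loader stands in for what would normally be a dynamic `import()` of a separately bundled module.

```typescript
// Wraps a loader so the work starts on the first call and every later call
// reuses the same promise. Nothing is loaded until someone asks for it.
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) {
      pending = loader();
    }
    return pending;
  };
}

// Hypothetical feature module. In a real bundle the loader would be
// something like `() => import("./chat-widget")` (path assumed), which lets
// the bundler split the feature into its own chunk.
interface ChatWidget {
  open(): void;
}

const loadChat = lazyOnce<ChatWidget>(async () => ({
  open: () => console.log("chat opened"),
}));

// Typical browser wiring, commented out so the sketch also runs outside one:
// chatButton.addEventListener("click", async () => (await loadChat()).open());

// Calling twice triggers only one load.
loadChat().then((chat) => chat.open());
loadChat();
```

One design caveat: this version also caches a rejected promise, so a failed load is never retried. A production helper would likely clear `pending` on failure.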

How do you balance performance and UX in practice?

Balancing performance and UX requires systematic decision-making, measurement, and ongoing optimization. Practical frameworks:

1) Performance budgets: Set concrete limits on page weight, load time, and Core Web Vitals scores. Example budget: Total page weight: <1MB. JavaScript: <200KB. LCP: <2.5s. CLS: <0.1. Treat these as constraints, like financial budgets. New features must fit within budget or require 'paying down debt' elsewhere. Enforcement: Integrate budget checks into CI/CD so that builds fail if budgets are exceeded. This forces teams to optimize existing code or remove features to make room for new ones.

2) Value-cost analysis for features: For each proposed feature, evaluate: User value: How many users benefit? How much does it improve their experience? (High/Medium/Low). Business value: Does it drive conversions, engagement, retention? (High/Medium/Low). Performance cost: How much does it slow the page? How much code/assets does it add? (High/Medium/Low). Decision matrix: High value + Low cost = Add immediately. High value + High cost = Optimize heavily, then add. Low value + High cost = Never add. Low value + Low cost = Consider if it aligns with brand or user expectations, but not a priority. Medium cases require judgment and testing.

3) Progressive enhancement: Build in layers. Layer 1 (Core): HTML content that works without CSS or JavaScript. Fast, accessible baseline. Layer 2 (Enhancement): CSS for visual presentation. Loads quickly, enhances appearance without blocking content. Layer 3 (Richness): JavaScript for interactivity. Loads asynchronously, enhances UX without preventing content access. Benefits: Fast core experience for all users. Progressive improvements for users with modern browsers and connections. Graceful degradation when resources fail to load.

4) Lazy loading and code splitting: Don't load everything upfront; defer what isn't immediately needed. Strategies: Lazy load images below the fold.
Load JavaScript modules on demand (import() for code splitting). Defer third-party scripts until after page interaction. Load features on interaction (click to load map, chat widget, etc.).

5) Critical rendering path optimization: Identify and optimize the critical path, meaning the resources required for initial page render. Focus areas: Inline critical CSS (styles needed for above-the-fold content). Defer non-critical CSS. Minimize JavaScript blocking HTML parsing (use async/defer attributes). Preload critical resources (fonts, hero images). Result: Fast initial render even if full page weight is substantial.

6) Set UX principles and prioritize: Define what matters most for your users. Example principles: Content accessibility: Users must be able to access content within 2 seconds. Core functionality: Primary user tasks must be performant. Secondary features: Can be lazily loaded or deferred. Visual polish: Important but not at the expense of speed. These principles guide tradeoff decisions.

7) Continuous measurement and optimization: Track both sides of the balance. Performance metrics: Core Web Vitals, page load time, bundle sizes. UX metrics: Task completion rates, engagement, conversion, user satisfaction. Business metrics: Revenue, retention, customer lifetime value. Identify degradation: Monitor for performance regression as features are added. Set alerts for metrics falling below thresholds. Iterate: When metrics decline, investigate and optimize. A/B test to validate that optimizations improve outcomes.

Real-world examples:

Example 1: E-commerce product page. Must have (core UX + performance): Product image (optimized, WebP format, responsive). Product title, price, description (HTML, fast). Add to cart button (critical conversion element). Should have (valuable features, performance-optimized): Multiple product images (lazy-load thumbnails). Reviews (load asynchronously after above-the-fold renders). Related products (lazy-load below the fold).
Could have (nice but not essential): 360° product view (load on interaction: click to activate). Live chat widget (defer until user scrolls or shows exit intent). Social sharing buttons (defer or remove if analytics show low use).

Example 2: News article. Must have: Article text (HTML, fast, readable). Headline and author (HTML). Featured image (optimized, priority-loaded). Should have: Related articles (load asynchronously). Social sharing (lightweight implementation). Comments (lazy-load far below content). Could have: Auto-playing video (generally avoid or require user interaction). Infinite scroll (adds complexity, often harms UX). Pop-up newsletter signup (use exit-intent or scroll-based trigger, not immediate).

Example 3: SaaS dashboard. Must have: Core data visualizations (optimized charts, lazy-render off-screen). Key metrics and KPIs (server-rendered or cached). Navigation (HTML-first, enhanced with JS). Should have: Interactive filters and controls (lazy-load complex libraries). Detailed reports (paginate or lazy-load sections). Notifications (WebSocket or polling, optimized). Could have: Real-time collaboration features (high value for some users, add if needed). Customizable dashboards (complex, defer unless high engagement). AI-powered insights (expensive to compute, offer as separate feature).

Making tradeoff decisions: For every feature: Define the problem it solves. Estimate performance impact (test in staging). Measure user/business value (via testing or user research). Decide if value exceeds cost. If yes, optimize heavily and add. If no, defer or reject.

Ongoing optimization: Weekly: Monitor performance metrics. Identify regressions. Monthly: Review feature usage analytics. Cut underused features. Optimize heavily-used features. Quarterly: Comprehensive performance audit. Review and adjust performance budgets. Evaluate whether UX principles need updating based on user feedback and business goals.

The mindset: Performance and UX are both investments in user satisfaction and business outcomes. Neither should be sacrificed thoughtlessly. Every decision is a tradeoff: make it consciously, measure it carefully, and optimize continuously. The goal isn't perfection on a single dimension but finding the right balance for your specific users, product, and business.
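
The value-cost decision matrix from framework 2 can be written down as a small function so that feature reviews apply it consistently. This is a sketch under assumptions: the type names, the `decide` and `overallValue` functions, and the rule of taking the higher of user value and business value are illustrative choices, not part of the matrix itself.

```typescript
type Level = "high" | "medium" | "low";

type Decision =
  | "add-immediately"
  | "optimize-then-add"
  | "never-add"
  | "consider-low-priority"
  | "needs-judgment";

// Assumption: combine user value and business value by taking the higher one.
function overallValue(userValue: Level, businessValue: Level): Level {
  const rank: Record<Level, number> = { low: 0, medium: 1, high: 2 };
  return rank[userValue] >= rank[businessValue] ? userValue : businessValue;
}

// Encodes the four explicit rules of the matrix. Any combination involving
// "medium" is left to judgment and testing, as the text says.
function decide(value: Level, cost: Level): Decision {
  if (value === "high" && cost === "low") return "add-immediately";
  if (value === "high" && cost === "high") return "optimize-then-add";
  if (value === "low" && cost === "high") return "never-add";
  if (value === "low" && cost === "low") return "consider-low-priority";
  return "needs-judgment";
}

// Hypothetical ratings for two features from the product page example.
console.log("reviews:", decide(overallValue("high", "medium"), "low"));
console.log("360 view:", decide(overallValue("low", "low"), "high"));
```

The ratings fed into the function still come from measurement and user research. The function only makes the final step explicit and repeatable.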

What are common mistakes when balancing performance and UX?

Several patterns lead to poor balance between performance and user experience. Common mistakes:

1) Assuming performance doesn't matter because features do: Belief: 'Users care about features, not speed. Load time doesn't matter if functionality is great.' Reality: Users notice speed even if they don't articulate it. Slow sites frustrate users, who then don't appreciate features. Bounce rates increase, engagement decreases. Features don't matter if users leave before experiencing them. Example: A beautifully designed, feature-rich e-commerce site with 8-second load times. Users abandon before seeing the great UX. Competitors with simpler but faster sites convert better. Fix: Recognize performance as a foundational UX component, not separate from it.

2) Optimizing for the wrong users/devices: Testing only on developer machines (fast laptops, office wifi) and assuming that's representative. Reality: Users have diverse devices (old phones, budget laptops) and connections (3G, spotty home wifi, mobile data). What feels fast in your development environment may be unusable for real users. Example: Site performs great on your MacBook Pro over fiber internet. Analytics show 60% mobile users with 4-second average LCP. Fix: Test on real devices and throttled connections. Use field data (CrUX, RUM) to understand actual user experience. Optimize for the 75th percentile user, not the best case.

3) Adding features without removing or optimizing old ones: The 'more is better' mentality: features accumulate, page weight grows, performance degrades. Reality: Technical debt accumulates. Old features may be unused but still add weight. Each feature seemed justified individually, but collectively they've made the site slow. Example: Site started fast at launch. Two years later, it's slow despite no major changes, just incremental feature additions. Fix: Regularly audit feature usage. Remove unused features. Optimize or consolidate before adding new ones.
Enforce performance budgets to prevent unbounded growth.

4) Deferring performance 'until later': Belief: 'We'll launch with all the features, then optimize for speed later.' Reality: Performance debt is harder to fix retroactively than to build correctly initially. Pressure to ship new features means 'later' never comes. Users experience poor performance in the meantime, harming retention and reputation. Example: MVP launches with slow performance. Team plans to optimize but immediately starts on feature 2.0. Six months later, performance is worse, and the backlog is too full to prioritize optimization. Fix: Build performance in from the start. Set performance budgets before the first feature is added. Treat performance as a feature with equal priority to functionality.

5) Ignoring user feedback on speed: Users complain about slowness, but data shows 'acceptable' load times on internal metrics. Reality: Perception of speed includes factors beyond load time (perceived performance). Users on the margin (slow devices, poor connections) feel the pain most. Aggregate data hides the worst experiences. Example: Average LCP is 2.8s, which looks acceptable, but the 75th percentile is 5.5s (poor). At least a quarter of your users have bad experiences, but the average looks fine. Fix: Look at percentile distributions (50th, 75th, 90th), not just averages. Listen to qualitative user feedback. Prioritize fixing the worst experiences.

6) Over-relying on synthetic testing: Lab tests (Lighthouse, WebPageTest) show great scores, but users report slowness. Reality: Synthetic tests use consistent devices and connections. Real users have diverse, often worse, conditions. Synthetic tests don't capture dynamic content, personalization, or A/B testing overhead. Example: Lighthouse scores 95/100. Real user LCP (from CrUX) is 4.5s. Fix: Use field data (real user monitoring) as the source of truth. Synthetic tests are useful for diagnosis but don't reflect real experience.
7) Treating all features equally: Every feature gets the same level of performance optimization effort. Reality: Some features are core, high-value, and justify optimization effort. Others are peripheral, low-value, and should be deprioritized or removed rather than optimized. Optimizing everything equally wastes resources. Example: Spending days optimizing a rarely-used admin feature while the main conversion flow is slow. Fix: Prioritize optimization on high-impact pages and features (homepage, conversion flows, popular content). Cut or defer low-impact features rather than optimizing them.

8) Forgetting mobile-first reality: Designing and optimizing for desktop, then adapting to mobile as an afterthought. Reality: Mobile is often the majority traffic source. Google uses mobile-first indexing. Mobile has tighter performance constraints (slower devices, connections, smaller screens). Desktop-first design often translates poorly to mobile (heavy assets, complex layouts). Example: Desktop site is fast. Mobile site is the same code and assets, resulting in a slow, bloated mobile experience. Fix: Design and optimize mobile-first. Desktop can scale up, but mobile can't scale down easily. Test and measure mobile performance as the primary metric.

9) Ignoring third-party script costs: Adding marketing pixels, analytics, chat widgets, social media embeds, and ad networks without considering cumulative impact. Reality: Third-party scripts are often unoptimized and heavy. You don't control their performance. They can block rendering, cause layout shifts, and slow pages dramatically. Example: Site code is lean and fast, but 10 third-party scripts (analytics, ads, social, marketing) add 2MB and 4 seconds to load time. Fix: Audit all third-party scripts. Remove what isn't essential. Defer loading until after primary content renders. Use facade patterns (lightweight placeholder until user interacts). Monitor third-party performance impact.
10) Not measuring business impact of performance: Treating performance as a technical metric without tying it to business outcomes. Reality: Performance impacts bounce rate, conversion, revenue, SEO, and user satisfaction. Without showing business impact, it's hard to prioritize performance work. Example: Engineers know the site is slow but can't get resources to fix it because leadership sees features as more important. Fix: Measure and communicate business impact: 'Improving LCP from 4s to 2s increased conversion by X%.' Tie performance work to revenue, retention, or user satisfaction. Make the business case for performance.

Avoiding these mistakes: Treat performance as a first-class feature, not an afterthought. Measure both performance (Core Web Vitals, load times) and UX (conversion, engagement, satisfaction). Test on real devices and connections, not just development environments. Regularly audit and remove underused features. Enforce performance budgets to prevent unbounded growth. Prioritize optimization effort on high-impact areas. Measure and communicate business value of performance work.

Balance is possible when both sides, performance and features, are measured, valued, and optimized continuously. Extremes in either direction harm users and business outcomes.
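
The advice in mistake 5 to look at percentile distributions rather than averages can be made concrete with a short calculation. This is a minimal sketch: the `percentile` and `mean` helpers use the nearest-rank method, which is one of several common percentile definitions, and the LCP samples are made up for illustration.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p percent
// of all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) {
    throw new Error("percentile requires at least one sample");
  }
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Arithmetic mean of the samples.
function mean(samples: number[]): number {
  return samples.reduce((sum, x) => sum + x, 0) / samples.length;
}

// Hypothetical field LCP samples in seconds: most loads are fast,
// a minority are very slow.
const lcpSeconds = [1.2, 1.4, 1.5, 1.6, 1.8, 2.0, 2.1, 5.5, 6.0, 6.5];

console.log("mean:", mean(lcpSeconds).toFixed(2));
console.log("p50:", percentile(lcpSeconds, 50)); // 1.8
console.log("p75:", percentile(lcpSeconds, 75)); // 5.5
console.log("p90:", percentile(lcpSeconds, 90)); // 6
```

In this made-up sample the mean sits just under 3 seconds while the 75th percentile is 5.5 seconds, so a dashboard that reports only the average would hide the slow loads that a meaningful share of users actually experience.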