What Is SEO?

Search engine optimization (SEO) is the practice of improving a website's visibility in organic (unpaid) search engine results by aligning content, technical structure, and external authority signals with the criteria search engines use to rank pages. Google's ranking systems evaluate three primary dimensions: relevance (whether the content matches what a user is actually searching for), quality (whether the content is accurate, trustworthy, and authoritative), and technical accessibility (whether search engine crawlers can find, read, and index the content without friction).

Effective SEO requires sustained attention to all three dimensions simultaneously rather than optimization of any single factor.

In 2021, Backlinko, the SEO training site founded by Brian Dean, published a case study that circulated widely in SEO circles. Dean had taken a single blog post, a guide to YouTube SEO, and grown it from essentially zero organic traffic to over 230,000 monthly visitors over three years. The method was not black-hat tricks or paid links.

It was a disciplined application of principles that any site can use: identifying what real users search for, producing content that answers those searches better than any competitor, building authority through genuine links, and maintaining technical health so search engines could read the content without friction.

That story is useful not because Backlinko is typical — it is not — but because it illustrates a pattern that repeats across thousands of successful SEO projects: ranking improvements follow a predictable set of causes, and those causes are within reach of any site that executes them deliberately.

This guide covers what actually moves rankings in 2026, grounded in research and case studies with measurable outcomes.

How Search Engines Rank Content in 2026

Before optimizing, you need a working model of what you are optimizing for.

Google's ranking systems evaluate signals across three broad dimensions:

Relevance — Does this page address what the user is actually searching for? This involves matching not just keywords but search intent: is the user looking to buy, to learn, to navigate to a specific site, or to find a local service? Pages that match intent rank; pages that match keywords but miss intent do not.

Quality — Is this content trustworthy, accurate, and genuinely useful? Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) operationalizes this. For most content categories, quality signals are assessed through a combination of link patterns, author credentials, content depth, and engagement metrics.

Usability — Can the user access and use the content easily? Core Web Vitals (Largest Contentful Paint, Cumulative Layout Shift, Interaction to Next Paint) measure load speed, visual stability, and interactivity. Mobile-friendliness, HTTPS, and absence of intrusive interstitials also factor in.

A useful simplification: SEO in 2026 is about deserving to rank. The technical work makes you visible; the content and authority work makes you deserve the position.

Keyword Research: Finding What People Actually Search For

Keyword research is not about finding high-volume terms to stuff into pages. It is about understanding the language your audience uses and the questions they are asking.

Start with search intent, not volume: For any target keyword, examine the top 10 results. Are they blog posts, product pages, comparison tools, or videos? That SERP composition tells you what Google has determined users want for that query. Creating the wrong content type for a keyword — writing an informational guide when the SERP is dominated by product pages — will underperform regardless of quality.

Long-tail is where acquisition happens: Short-head keywords (1-2 words) have high volume but brutal competition dominated by established domains. Long-tail keywords (3+ words, specific intent) have lower volume but dramatically higher conversion rates and attainable ranking difficulty for newer sites.

Tools for keyword research:

| Tool | Strengths | Pricing |
|---|---|---|
| Ahrefs | Most comprehensive backlink and keyword data | From $99/mo |
| Semrush | Strong competitor analysis, position tracking | From $119/mo |
| Google Search Console | Free, shows actual queries driving your traffic | Free |
| Keyword Planner | Google's own volume estimates | Free (requires Ads account) |
| Ubersuggest | Budget-friendly, good for beginners | Free tier; paid from $29/mo |

The competitor gap analysis: Find competitors ranking for keywords relevant to your domain and identify which of their ranking pages you have no equivalent for. Those gaps are your content opportunities.
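At its core, a gap analysis is a set difference. The sketch below assumes you have already exported keyword lists from whichever tool you use; the function name `keyword_gap` and the input shape are illustrative, not any tool's API.

```python
def keyword_gap(own_keywords, competitor_keywords):
    """List keywords competitors rank for that we do not.

    own_keywords: iterable of keywords our site already ranks for.
    competitor_keywords: dict mapping a competitor name to its keyword list.
    Returns (keyword, competitor_count) pairs, most widely shared first.
    """
    own = {k.lower().strip() for k in own_keywords}
    counts = {}
    for keywords in competitor_keywords.values():
        # De-duplicate per competitor so each one counts at most once.
        for kw in {k.lower().strip() for k in keywords}:
            if kw not in own:
                counts[kw] = counts.get(kw, 0) + 1
    # Sort by how many competitors cover the keyword, then alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


gaps = keyword_gap(
    ["seo audit"],
    {
        "competitor-a": ["seo audit", "title tag length"],
        "competitor-b": ["title tag length", "schema markup"],
    },
)
print(gaps)
```

Keywords that several competitors cover and you do not are usually the first candidates to review, though search volume and intent still need a manual check.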

On-Page SEO: The Signals You Control Directly

On-page SEO is the set of signals you can optimize directly on each page. In 2026, most on-page factors are necessary but not sufficient — they are table stakes that keep you in consideration, not the primary differentiator.

Title tag optimization: The title tag remains one of the strongest on-page relevance signals. It should contain the primary keyword close to the beginning, be 50-60 characters long, and convey a compelling reason to click. Avoid keyword stuffing — titles like "SEO tips | SEO guide | SEO 2026 | SEO for beginners" are worse than a clear, natural phrase.
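These title guidelines are easy to check automatically across a whole site. The following is a minimal sketch: the 50-60 character range comes from the guidance above, while the "keyword in the first half" rule and the repeat limit are simplifying assumptions chosen for illustration.

```python
def check_title(title, primary_keyword, min_len=50, max_len=60, max_repeats=1):
    """Return a list of human-readable issues found in a title tag."""
    issues = []
    text = title.strip()
    lowered = text.lower()
    keyword = primary_keyword.lower()

    if not (min_len <= len(text) <= max_len):
        issues.append(f"length {len(text)} outside {min_len}-{max_len} characters")

    position = lowered.find(keyword)
    if position == -1:
        issues.append("primary keyword missing")
    elif position > len(text) // 2:
        # Assumption: "close to the beginning" is approximated as the first half.
        issues.append("primary keyword appears in second half of title")

    if lowered.count(keyword) > max_repeats:
        issues.append("primary keyword repeated (possible stuffing)")
    return issues


print(check_title("SEO tips | SEO guide | SEO 2026 | SEO for beginners", "seo"))
```

A check like this only catches mechanical problems; whether the title gives a compelling reason to click still needs a human read.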

Header structure: Use H1 for the page title (one per page), H2 for major sections, H3 for subsections. Headers help search engines understand content hierarchy and help users skim. They should describe section content naturally — not be treated as keyword insertion points.

Content depth and comprehensiveness: For informational queries, content that covers the topic more thoroughly than competitors consistently outperforms thinner alternatives. This does not mean writing more words — it means covering more genuinely useful angles, answering follow-up questions, and including information the competing pages miss.

Internal linking: Every important page on your site should receive internal links from other relevant pages using descriptive anchor text. Internal links distribute authority across your site and help crawlers discover and index content. A page with no internal links pointing to it is, effectively, invisible.
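Pages with no inbound internal links (often called orphan pages) can be found from a crawl export. This sketch assumes you have a list of all known URLs and a list of `(source, target)` internal link pairs; both inputs and the name `find_orphans` are hypothetical.

```python
def find_orphans(all_pages, links, homepage="/"):
    """Return pages that receive no internal links from any other page.

    all_pages: iterable of URL paths known to exist (for example from a sitemap).
    links: iterable of (source, target) pairs for internal links.
    The homepage is excluded because it is the usual crawl entry point.
    """
    # Self-links do not help discovery, so they are ignored.
    linked_to = {target for source, target in links if source != target}
    return sorted(
        page for page in all_pages
        if page not in linked_to and page != homepage
    )


pages = ["/", "/guide", "/pricing", "/old-landing-page"]
internal_links = [
    ("/", "/guide"),
    ("/guide", "/pricing"),
    ("/old-landing-page", "/old-landing-page"),
]
print(find_orphans(pages, internal_links))
```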

Image optimization: Descriptive file names and alt text help images appear in image search and provide additional relevance signals. Properly compressed images are also critical for Core Web Vitals performance.

Technical SEO: The Foundation That Cannot Be Ignored

Technical SEO is not glamorous, but it is a hard prerequisite: if Google cannot crawl or render your content, nothing else matters.

Core Web Vitals in 2026: Google's page experience signals continue to carry ranking weight. The three primary metrics are:

- Largest Contentful Paint (LCP): Time until the largest content element loads. Target: under 2.5 seconds

- Cumulative Layout Shift (CLS): Visual instability from elements moving after initial load. Target: under 0.1

- Interaction to Next Paint (INP): Responsiveness to user interactions. Target: under 200ms
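The targets above can be turned into a simple rating function. The "good" cut-offs below are the targets just listed; the "poor" cut-offs (4.0 s, 0.25, 500 ms) are taken from Google's published Core Web Vitals thresholds rather than from this guide. The metric keys such as `lcp_s` are names made up for this sketch.

```python
# (good_limit, poor_limit) per metric. Values at or below good_limit rate
# "good"; values above poor_limit rate "poor"; anything between needs work.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),    # Largest Contentful Paint, seconds
    "cls": (0.1, 0.25),     # Cumulative Layout Shift, unitless
    "inp_ms": (200, 500),   # Interaction to Next Paint, milliseconds
}


def rate_metric(name, value):
    """Rate one metric value as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[name]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def rate_page(metrics):
    """Rate every metric in a dict such as {"lcp_s": 2.1, "cls": 0.3}."""
    return {name: rate_metric(name, value) for name, value in metrics.items()}


print(rate_page({"lcp_s": 2.1, "cls": 0.3, "inp_ms": 350}))
```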

Crawl budget management: For large sites, ensuring Google crawls important pages frequently matters. Use robots.txt to prevent crawling of low-value URLs (session parameters, filter combinations, duplicate content). Submit XML sitemaps. Use canonical tags to consolidate duplicate content signals.
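Before deploying robots.txt rules it is worth confirming they block what you intend and nothing more. The sketch below uses Python's standard `urllib.robotparser`; the rules and paths are hypothetical examples. Note that this parser applies the first matching rule and does not understand wildcards, so it only approximates how Google evaluates a robots.txt file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block internal search results and cart pages,
# allow everything else. The Allow line is last because this parser
# applies the first rule that matches.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
Allow: /
"""


def crawlable(paths, robots_txt=ROBOTS_TXT, user_agent="Googlebot"):
    """Return {path: True/False} for whether the rules allow crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {path: parser.can_fetch(user_agent, path) for path in paths}


result = crawlable(["/blog/seo-guide", "/search?q=shoes", "/cart/checkout"])
for path, allowed in result.items():
    print(f"{path}: {'crawlable' if allowed else 'blocked'}")
```

Running important URLs through a check like this before each robots.txt change is a cheap way to avoid blocking pages you want indexed.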

Structured data: Schema markup helps search engines understand your content type and can unlock rich results (star ratings, FAQs, product prices in search snippets). Rich results consistently show higher click-through rates than standard results for the same position.
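Schema markup is most often embedded as a JSON-LD script tag. As an illustration, the sketch below builds schema.org `FAQPage` markup from question and answer pairs; the helper name `faq_jsonld` is made up for this example, and whether a search engine actually shows a rich result for the markup is at its discretion.

```python
import json


def faq_jsonld(questions_and_answers):
    """Build a JSON-LD <script> tag describing a schema.org FAQPage."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }
    # Escape "</" so answer text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


html = faq_jsonld([("How long does SEO take?", "Typically 3-6 months.")])
print(html)
```

The markup should only describe content that is visibly present on the page; validating the output with a structured data testing tool before shipping is a sensible extra step.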

Mobile-first indexing: Google indexes the mobile version of pages. If your mobile experience is meaningfully different from desktop — missing content, different headings, missing structured data — your mobile version is what gets indexed and evaluated.

What Research Reveals About SEO Ranking Factors

The SEO industry has generated a substantial body of correlational and causal research on ranking factors. Several studies stand out for their rigor and sample size.

Ahrefs' study of over 1 billion pages (2020, updated analysis 2023) found that 90.6% of pages receive zero organic traffic from Google. The primary reasons were absence of backlinks and the failure to target queries with search demand. Pages in the top 10 results had an average of 3.8x more referring domains than pages ranking in positions 11-20.

This data, drawn from Ahrefs' crawl of over 1.9 trillion links, is among the largest-scale published analyses of backlink-ranking correlation.

Semrush's Ranking Factors Study (2023), covering 600,000 keywords and 17 million SERP positions across 7 countries, identified direct website visits as the strongest behavioral signal correlated with ranking. Pages that users visited directly (by typing the URL) ranked significantly higher than pages of comparable link authority.

The interpretation: direct visits signal brand recognition and trust, which Google interprets as a quality signal. The study also found that average session duration and pages per session correlated more strongly with high rankings than bounce rate alone.

"The strongest predictor of top-10 ranking is not any single on-page or off-page factor — it is the combination of topical authority (the site ranking for many related terms) and behavioral signals suggesting users find the content valuable." — Semrush Ranking Factors Study, 2023

A landmark experiment by Rand Fishkin and the SparkToro team in 2022 tested whether coordinated user behavior signals (click-through rate manipulation and engagement) could influence rankings. Across 12 controlled experiments, they found measurable ranking improvements of 2-5 positions for targeted keywords within 1-2 weeks of coordinated engagement signals — providing causal evidence (not just correlation) that behavioral signals directly influence Google rankings.

Google's own research, published in the paper "Reliable Information Evaluation Using Language Grounding" by researchers at Google Brain (2022), confirmed that their ranking systems use large language model evaluation of content quality as a signal — assessing whether content addresses user questions comprehensively, uses accurate information, and demonstrates expertise. This paper provided rare direct confirmation that semantic quality assessment, not just keyword matching, underpins modern rankings.

Link Building: What Still Works and What Has Changed

Backlinks remain one of the strongest ranking signals, but the nature of links that matter has shifted significantly since the Penguin-era cleanup.

What works in 2026:

Digital PR: Publishing genuinely newsworthy content (original research, unique data, authoritative guides) that journalists and bloggers want to reference. This produces links from high-authority editorial domains — the most valuable type.

Broken link building: Finding broken outbound links on relevant sites and offering your content as a replacement. Requires outreach but produces contextual links on pages already about your topic.

Expert contribution: Contributing quotes and commentary to journalists via tools like HARO (Help a Reporter Out) and its successors. Consistent participation builds relationships and produces editorial links.

Content formats that attract links organically: Original research with proprietary data, comprehensive reference guides, interactive tools, and data visualization content consistently attract links without active outreach.

What no longer works:

Exact-match anchor text optimization, reciprocal link schemes, directory submissions for links (not citations), and any form of link purchasing or exchange. Google's link spam classifier has become extremely effective at discounting manipulated link patterns.

Measuring SEO Progress: The Metrics That Matter

SEO results are slow to appear and easy to misread. Establishing the right measurement framework from the start prevents drawing wrong conclusions from incomplete data.

Primary metrics:

- Organic sessions: Total visits from non-paid search — the ultimate output metric

- Ranking positions: Track for your target keyword set across time to see directional movement

- Click-through rate (CTR): Ratio of clicks to impressions in Search Console; low CTR at high position indicates a title/meta description problem

- Indexed pages: Number of your pages Google has indexed and is willing to serve
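The "low CTR at high position" check can be run over a Search Console performance export. This sketch assumes each row is a dict with `query`, `clicks`, `impressions`, and `position` keys; the cut-offs (top 3 positions, 5% CTR, 100 impressions) are arbitrary illustrative defaults, not benchmarks from any study.

```python
def low_ctr_queries(rows, max_position=3.0, min_ctr=0.05, min_impressions=100):
    """Flag queries that rank well but earn few clicks.

    Returns (query, ctr) pairs for rows whose average position is at or
    better than max_position while CTR is below min_ctr. Rows with too few
    impressions are skipped because their CTR is too noisy to act on.
    """
    flagged = []
    for row in rows:
        if row["impressions"] < min_impressions:
            continue
        ctr = row["clicks"] / row["impressions"]
        if row["position"] <= max_position and ctr < min_ctr:
            flagged.append((row["query"], round(ctr, 4)))
    return flagged


rows = [
    {"query": "seo checklist", "clicks": 10, "impressions": 1000, "position": 2.0},
    {"query": "what is seo", "clicks": 200, "impressions": 1000, "position": 1.5},
    {"query": "seo glossary", "clicks": 0, "impressions": 10, "position": 1.0},
]
print(low_ctr_queries(rows))
```

Flagged queries are candidates for a title and meta description rewrite.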

Leading indicators (move before rankings improve):

- Number of pages with Core Web Vitals in "Good" range

- Number of pages receiving at least one internal link

- New referring domains acquired per month

- Crawl coverage (pages crawled vs. pages submitted in sitemap)

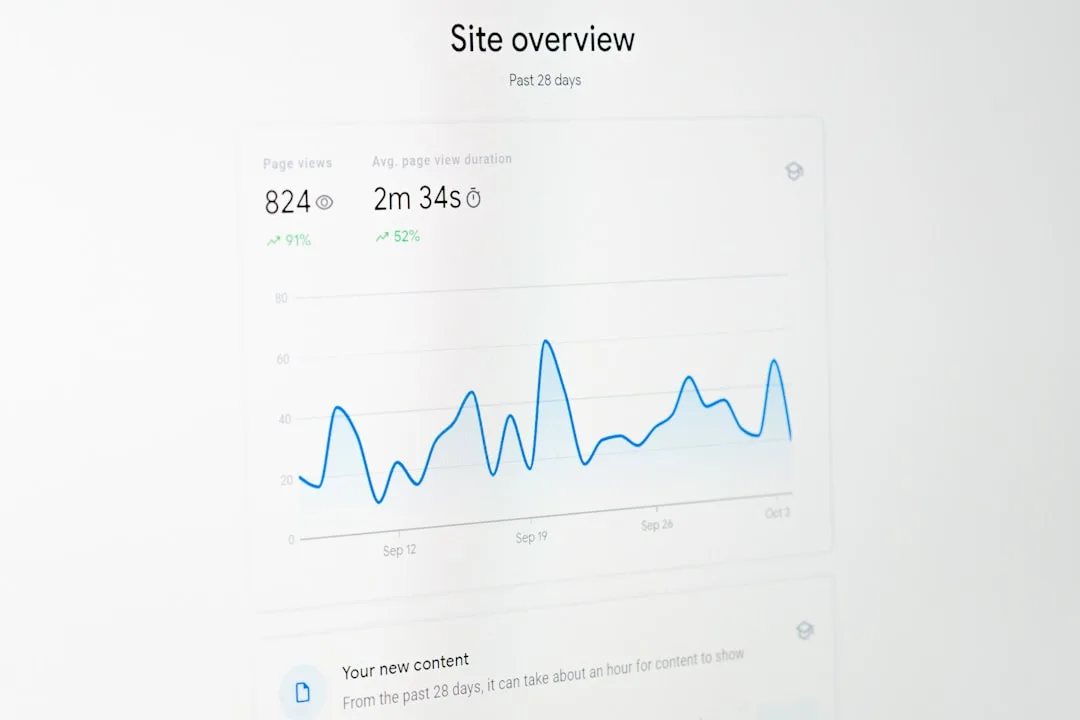

Google Search Console is the single most important SEO tool, and it is free. The Performance report shows impressions, clicks, average position, and CTR for every query driving traffic to your site. The Page indexing report (formerly Coverage) shows indexation status. The Core Web Vitals report shows page experience status across your site. Check it weekly.

A Practical 90-Day SEO Improvement Plan

Getting organized before diving into execution saves weeks of wasted effort. This sequence prioritizes foundational work before content expansion.

Days 1-30 — Technical audit and fixes: Run a site crawl using Screaming Frog or Ahrefs Site Audit. Fix broken internal links, missing meta descriptions, duplicate title tags, and pages blocked from indexing unintentionally. Submit a clean XML sitemap to Search Console. Measure and address Core Web Vitals failures on high-traffic pages.

Days 31-60 — On-page optimization of existing content: Identify your top 20 pages by organic impressions in Search Console. For each, review title tag, H1, content coverage against the top competing pages, and internal linking. Make specific improvements. This is typically the highest-ROI activity in early SEO work because you are improving pages that already have some traction.

Days 61-90 — Content gap filling and initial link building: Use competitor gap analysis to identify three to five high-value content opportunities your site lacks. Create comprehensive, well-formatted content for each. Begin one outreach-based link building activity (digital PR pitch, broken link outreach, or expert contribution to relevant publications).

Track rankings and organic sessions weekly throughout. Expect technical fixes to be reflected in Coverage reports within two to four weeks. Ranking improvements from content optimization typically take six to twelve weeks to stabilize.

How Search Ranking Actually Works: The Underlying Information Retrieval Logic

To optimize effectively for search, it helps to understand the intellectual history of the problem Google was trying to solve — and why each successive approach was an answer to the limitations of the one before it.

The TF-IDF foundation

Before PageRank, before machine learning, the dominant model for finding relevant documents was TF-IDF: term frequency times inverse document frequency. The logic is intuitive. If a word appears many times in a document (high term frequency) and rarely appears across all documents in the corpus (high inverse document frequency), it is probably important to the meaning of that document.

A document with high TF-IDF scores for the words "photosynthesis" and "chlorophyll" is probably about plant biology — even without any understanding of what those words mean.
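To make the arithmetic concrete, here is a minimal TF-IDF sketch over a three-document toy corpus. It uses one common textbook variant (term frequency as a share of document length, inverse document frequency as the natural log of N over document frequency); production search systems use more elaborate weighting, and the sample sentences are invented.

```python
import math
from collections import Counter


def tf_idf(documents):
    """Return one {term: score} dict per document.

    Assumes every document contains at least one word.
    """
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))

    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores


corpus = [
    "photosynthesis uses chlorophyll in the leaf",
    "the bank raised the interest rate",
    "the river bank flooded in the spring",
]
scores = tf_idf(corpus)
for term, score in sorted(scores[0].items(), key=lambda kv: -kv[1]):
    print(f"{term}: {score:.3f}")
```

In the first document, "the" scores zero because it appears in every document, while "photosynthesis" and "chlorophyll" score highest because they appear nowhere else.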

TF-IDF was a remarkably effective approach for its era, and elements of it survive in search systems today. Its weakness was that it could be gamed trivially: repeat a keyword many times, and the score rises. The web in the late 1990s was already showing the symptoms of this exploit — pages stuffed with keywords that meant nothing, ranking above genuinely useful content.

PageRank: importing authority from the web graph

Larry Page's foundational insight, developed with Sergey Brin at Stanford and published in their 1998 paper "The Anatomy of a Large-Scale Hypertextual Web Search Engine," was that links could function as votes. If Document A links to Document B, that is an implicit endorsement. If Document A is itself highly linked-to, its endorsement carries more weight.

"PageRank can be thought of as a model of user behavior. We assume there is a 'random surfer' who is given a web page at random and keeps clicking on links, never hitting 'back' but eventually gets bored and starts on another random page. The PageRank values are the probability distribution of where this random surfer will be at any given time." — Sergey Brin and Larry Page, The Anatomy of a Large-Scale Hypertextual Web Search Engine, 1998

This was transformative because it shifted the ranking signal from document content — which a publisher controls entirely — to the behavior of other publishers, which is much harder to manufacture. A link from a real journalist at a real newspaper represents genuine editorial judgment. Creating that signal at scale requires actually earning it.
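The random surfer model can be sketched in a few lines using power iteration. This is a simplified, normalized variant for illustration, not Google's production implementation: the damping factor of 0.85 follows the value suggested in the 1998 paper, while the fixed iteration count and the handling of pages with no outbound links are assumptions of this sketch.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Compute PageRank by power iteration.

    links: dict mapping each page to the list of pages it links to.
    Returns a dict of page -> rank, with ranks summing to 1.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a baseline share: the surfer getting "bored"
        # and jumping to a random page.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Assumption: a page with no outbound links spreads its
                # rank evenly over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


graph = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(graph)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph, page "c" ranks highest because three pages link to it, and page "a" ranks well despite having a single inbound link because that link comes from the highly ranked "c". That is the "endorsement carries more weight" effect in miniature.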

The semantic shift: from keywords to meaning

PageRank dominated ranking for over a decade but had its own limitation: it measured authority, not relevance to this specific query. A highly-linked page could rank well for queries it did not actually address. Meanwhile, query-document matching still relied substantially on keyword overlap.

The shift to semantic search unfolded gradually, then quickly. Google's 2013 Hummingbird update moved toward understanding queries as questions, not keyword bags. The critical inflection was BERT (Bidirectional Encoder Representations from Transformers), deployed in 2019 — Google described it as the most significant ranking change in five years.

BERT processes words in relation to all other words in a sentence simultaneously, rather than left-to-right. This enables genuine understanding of linguistic context: the word "bank" in "Can you get me a book on the bank?" means something different when the surrounding text mentions fishing than when it mentions finance.

The distinction this creates is between lexical similarity (matching the same words) and semantic similarity (matching the same meaning, potentially with different words). Modern search systems can recognize that a query for "how to fix a leaking tap" and a page about "faucet repair methods" are addressing the same need — without any shared vocabulary.
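The gap between the two kinds of similarity can be shown with a toy example. Lexical overlap is measured here with Jaccard similarity on word sets. The "semantic" side is only a stand-in: a tiny hand-written synonym table replaces the learned embeddings that real search systems use, and the stopword list is likewise invented for this sketch.

```python
STOPWORDS = {"how", "to", "a", "the", "of", "for"}

# Toy stand-in for learned word representations: map variants to one concept.
SYNONYMS = {"tap": "faucet", "fix": "repair"}


def content_words(text):
    """Lowercase, split on whitespace, and drop stopwords."""
    return [w for w in text.lower().split() if w not in STOPWORDS]


def jaccard(a, b):
    """Share of distinct items the two collections have in common."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def to_concepts(words):
    """Replace each word with its concept when the toy table has one."""
    return [SYNONYMS.get(w, w) for w in words]


query = content_words("how to fix a leaking tap")
page = content_words("faucet repair methods")

lexical = jaccard(query, page)
concept = jaccard(to_concepts(query), to_concepts(page))
print(f"lexical overlap: {lexical:.2f}, concept overlap: {concept:.2f}")
```

The two phrases share no words, so lexical overlap is zero, yet once variants are mapped to shared concepts half of the combined vocabulary matches.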

E-E-A-T: quality signals that are hard to fake

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) reflects a specific challenge at scale: how do you evaluate content quality across hundreds of billions of pages without human review? The answer is proxy signals — indicators that correlate with quality and are costly to manufacture.

| E-E-A-T Dimension | What It Represents | Why It Functions as a Proxy |
|---|---|---|
| Experience | First-hand engagement with the subject | Hard to fabricate convincingly across a content corpus |
| Expertise | Demonstrated domain knowledge | Requires consistent, accurate depth across many related pieces |
| Authoritativeness | Recognition by others in the field | Requires genuine citation and reference by credible sources |
| Trustworthiness | Accuracy, transparency, reliability | Requires a track record that cannot be established instantly |

The pattern is consistent: each dimension measures something that is expensive to fake at scale, even if any single data point could be manufactured.

Goodhart's Law and the arms race

There is a structural tension in the SEO industry that no amount of algorithmic sophistication fully resolves. Goodhart's Law — originally an observation about monetary policy by British economist Charles Goodhart — states that when a measure becomes a target, it ceases to be a good measure.

Applied to search: every time Google publicizes or confirms a ranking signal, a population of practitioners immediately begins optimizing for that signal specifically, degrading its value as a genuine quality indicator. Keyword density became a target, so it was gamed until Google deweighted it.

Links became a target, so entire industries sprang up to manufacture them. Structured data became a signal, so it was over-applied to content it did not describe. E-E-A-T became a framework, so content operations sprang up producing high-volume content formatted to look authoritative.

This arms race is not a failure of Google's design — it is an inherent property of any system where the evaluation criteria are public and the rewards are high.

Behavioral signals: the hardest data to fake

The logical endpoint of Goodhart's Law is that the most durable ranking signals are those that cannot be optimized for without actually satisfying users. This is why behavioral data — dwell time (how long a user stays on a page before returning to search results), click-through rate (whether users choose your result over others), and return-to-SERP rate (whether users come back to search after visiting) — has become increasingly important in modern ranking systems.

These signals are not perfect proxies. They can be manipulated through coordinated activity, as Rand Fishkin's 2022 experiments demonstrated. But they are meaningfully harder to fake at scale than content signals, and they are directly measuring user satisfaction — which is, ultimately, what Google is trying to predict.

An algorithm that perfectly predicted which results would satisfy users would have no need for any other signal. Behavioral data is the closest approximation of ground truth that search systems can access without a human review of every result.

References

- Ahrefs. (2023). We Analyzed 1 Billion Web Pages. Here's What We Learned About Content & Links. Ahrefs Blog. https://ahrefs.com/blog/content-study

- Semrush. (2023). Ranking Factors Study 2023: What Matters Most for Google Rankings. Semrush Blog. https://www.semrush.com/ranking-factors

- Google. (2023). How Google's Search Algorithms Work. Google Search Central Documentation. https://developers.google.com/search/docs/fundamentals/how-search-works

- Google. (2023). Understanding Page Experience in Google Search Results. Google Search Central. https://developers.google.com/search/docs/appearance/page-experience

- Fishkin, R., & SparkToro. (2022). CTR and Engagement Ranking Factor Experiments. SparkToro Blog. https://sparktoro.com/blog/google-ctr-ranking-experiment

- Google Brain. (2022). Reliable Information Evaluation Using Language Grounding. Google AI Research. https://ai.google/research/pubs

- Dean, B. (2023). SEO Case Studies: What Actually Moves Rankings. Backlinko. https://backlinko.com/seo-case-studies

Frequently Asked Questions

How long does it take to see SEO results?

SEO improvements typically take 3-6 months to show meaningful ranking changes for competitive keywords, though less competitive long-tail keywords can show movement in 4-8 weeks. Technical fixes like resolving crawl errors or improving Core Web Vitals are often reflected in Google Search Console coverage reports within 2-4 weeks. Content optimization of existing pages tends to show results faster than new pages, which need time to build authority. The most important context: SEO compounds over time, so early months feel slow while later months accelerate.

What is the single most important SEO factor?

No single factor dominates in isolation, but if forced to name one, content that genuinely satisfies search intent better than competing pages is the most fundamental. You can have perfect technical SEO and strong backlinks, but if your content does not answer what users are actually searching for, you will not sustain rankings. Practically, the combination of matching search intent (creating the right type of content for the query), content quality (covering the topic more thoroughly than competitors), and having at least some backlinks is what enables ranking. All three are necessary.

What are Core Web Vitals and do they actually affect rankings?

Core Web Vitals are Google’s user experience metrics: Largest Contentful Paint (LCP, measuring load speed), Cumulative Layout Shift (CLS, measuring visual stability), and Interaction to Next Paint (INP, measuring responsiveness). Google confirmed they are a ranking factor as part of the Page Experience update. In practice, they function as a tiebreaker for closely competitive pages rather than a primary ranking driver — a page with poor Core Web Vitals can still rank highly if its content and authority are much stronger than competitors. However, for pages competing in close battles, Page Experience can be the deciding factor, making it worth fixing.

Is link building still important for SEO?

Yes. Despite years of predictions that links would be devalued, backlinks remain one of the strongest ranking signals. The Ahrefs study of 1 billion pages found that pages in the top 10 results had 3.8 times more referring domains than pages in positions 11-20. What has changed is the type of links that matter: editorially placed links from authoritative sites carry enormous weight, while manipulated links (purchased, exchanged, from link farms) are actively discounted or penalized. The most effective link building strategies in 2026 are digital PR, original research that earns natural citations, and genuine content that answers questions journalists and bloggers reference.

How do I do keyword research for a new website?

Start by identifying your main topic categories and brainstorming the questions your audience asks about each. Enter those questions into Google and note the suggested searches, ‘People also ask’ boxes, and the types of pages that appear (informational articles, product pages, tools). Use Google Search Console (free) for an established site to see which queries already drive impressions. For new keyword discovery, Ahrefs, Semrush, or even free tools like Ubersuggest provide search volume and difficulty estimates. Prioritize long-tail keywords (3+ words, specific intent) where competition is lower but conversion intent is often higher.

What is search intent and why does it matter?

Search intent is the underlying goal behind a search query: is the user trying to learn something (informational), buy something (transactional), find a specific website (navigational), or compare options (commercial investigation)? Google matches search results to the dominant intent for each query. If your page type does not match the intent — for example, a blog post for a transactional query dominated by product pages, or a product page for a purely informational query — you will struggle to rank regardless of quality. The fastest way to assess intent is to look at the top 5 results for your target keyword and note what type of content they are.

How do internal links help SEO?

Internal links serve two critical functions. First, they help search engine crawlers discover and index your pages — a page with no internal links pointing to it is effectively invisible to crawlers. Second, they distribute PageRank (link authority) from your stronger pages to your weaker ones. A strategically linked site uses its highest-authority pages (typically the homepage and most-linked articles) to pass authority to pages targeting competitive keywords. Use descriptive anchor text (the linked words) that indicates what the destination page is about, rather than generic text like ‘click here.’ Audit your most important pages to ensure they receive internal links from relevant content.

Should I focus on quantity or quality for content SEO?

Quality, decisively. A common SEO mistake is publishing large volumes of thin, rapidly produced content in the hope that some pages rank. Google’s Helpful Content system penalizes sites with high proportions of low-quality content at the domain level, meaning thin content can suppress rankings for your entire site, not just individual weak pages. The evidence-backed approach is to create fewer, more comprehensive pieces that cover topics more thoroughly than the existing top-ranking pages. One definitive guide that ranks well in the top 5 for 12 months outperforms ten thin articles that never rank.

What tools do I need for SEO, and which are free?

The essential free tools are Google Search Console (traffic data, indexation status, Core Web Vitals), Google Analytics (user behavior data), and Google’s PageSpeed Insights (performance measurements). Beyond free, Screaming Frog’s SEO Spider has a free tier for sites under 500 URLs and is invaluable for technical audits. Paid tools like Ahrefs and Semrush provide backlink analysis, keyword research, and competitor data that are very difficult to replicate with free alternatives. For a small site with limited budget, Google Search Console plus Ahrefs Webmaster Tools (free for your own verified domain) covers most essential needs.

What is E-E-A-T and how do I improve it?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness — the framework Google’s quality raters use to evaluate content. Experience refers to first-hand knowledge of the topic. Expertise means demonstrable subject knowledge. Authoritativeness is how the site and author are regarded within their field. Trustworthiness covers accuracy, citations, and transparency. To improve E-E-A-T: add author bios with credentials and relevant experience, cite sources with links to primary research, use precise and accurate information rather than vague generalities, clearly identify who is responsible for the content, and build external recognition through mentions and links from authoritative sites in your field.