You are standing in a line at a government office. It is long, the air is stale, and everyone around you looks as though they would rather be anywhere else. A man in a neat suit walks directly to the front of the line, says something briefly to the clerk, and is seen to immediately. Nobody says a word. Later, thinking back on this, you feel certain that you would have objected if the situation had been slightly different: if he had been less well dressed, perhaps, or more obviously cutting rather than being apparently exempted.

This mundane scenario contains most of what social psychology studies. Whether you speak up is not simply a function of your personality or values. It depends on the signals you receive from other bystanders, your interpretation of the suited man's authority, your desire to avoid conflict or seem rude, and the ease with which your mind attributes his behavior to personal importance rather than simple queue-jumping. The same person behaves quite differently in different social situations, and social psychology is the science of why.

"The most important lesson from social psychology is that situations and context matter far more than we intuitively believe. We should be more humble about predicting our own behavior."

- Philip Zimbardo, The Lucifer Effect, 2007

Key Definitions

Social psychology is the scientific study of how people's thoughts, feelings, and behaviors are influenced by the actual, imagined, or implied presence of others.

Conformity is the tendency to adjust one's behavior or beliefs to match a perceived group norm, either because the group's judgment is believed to be correct (informational influence) or to gain social acceptance (normative influence).

| Social Psychology Topic | Key Finding | Classic Study |
|---|---|---|
| Conformity | People conform to group judgment even when clearly wrong | Asch line studies (1951) |
| Obedience to authority | Most people will obey authority figures in harmful ways | Milgram shock experiments (1963) |
| Bystander effect | Responsibility diffuses in crowds; less help when more witnesses | Darley & Latane (1968) |
| Cognitive dissonance | Behavior changes beliefs more than beliefs change behavior | Festinger & Carlsmith (1959) |
| Social identity | Group membership shapes self-concept and discrimination | Tajfel & Turner (1979) |

Attribution is the process by which people explain the causes of behavior, either to situational factors (the context) or dispositional factors (the actor's character).

Cognitive dissonance is the psychological discomfort arising from holding simultaneously two or more inconsistent cognitions, motivating attitude change or behavioral rationalization to reduce the inconsistency.

The Asch Conformity Experiments

Solomon Asch was a Gestalt psychologist at Swarthmore College who had studied with Max Wertheimer and believed deeply in human rationality and independence. His conformity experiments, conducted between 1951 and 1956, were designed partly to refute what he considered the overly pessimistic view of human suggestibility advanced by the earlier studies of Muzafer Sherif, who had used a more ambiguous perceptual task (the autokinetic effect) to demonstrate that people adopt others' judgments as anchors.

Asch's innovation was to make the task unambiguous. The line-length judgment required no inference, no expertise, and no guesswork. The correct answer was plain. Could group pressure cause intelligent adults to deny what their eyes told them? Asch expected the answer to be no.

The results gave him a different answer. When the confederates unanimously gave a wrong response, naive participants conformed on roughly 37 percent of all critical trials. About three-quarters conformed at least once across the series of trials. Subsequent variations showed that the effect was sensitive to group size up to three or four people, that unanimity was crucial, that a single dissenter drastically reduced conformity even if that dissenter gave a different wrong answer, and that public response increased conformity while written private response reduced it.

Two Roads to Conformity

Post-experiment interviews revealed two psychologically distinct processes underlying the apparent compliance. Some participants said they had genuinely become uncertain about their own perception when the entire group disagreed; the group's consistent alternative response caused them to doubt their vision or interpretation. This is informational social influence: the group is used as a source of information about ambiguous or difficult judgments.

Others said they had been quite confident in their answer but had gone along to avoid standing out, seeming uncooperative, or disrupting the group dynamic. They knew their answer was right but performed the wrong answer publicly. This is normative social influence: changing behavior without changing belief, driven by the desire for social acceptance and the discomfort of conspicuous deviance.

Both mechanisms operate in everyday life, often together. Most social conformity involves some informational uncertainty (perhaps others know something you do not) combined with some normative pressure (deviance is uncomfortable and has social costs).

Milgram's Obedience Studies

Stanley Milgram began designing his obedience studies at Yale in 1961, the year Adolf Eichmann's trial began in Jerusalem. Hannah Arendt's phrase "the banality of evil" captured the puzzle that motivated Milgram: how had ordinary men and women participated in systematic atrocities? Was it that they were uniquely brutal, or was there something in the structure of authority and obedience that could produce harmful behavior from normal people?

Milgram's procedure placed participants in the role of teacher in an apparent learning experiment. The learner (a 47-year-old accountant who was a confederate) was seated in an adjacent room and attached to a fake shock generator with electrodes. For each wrong answer, the teacher was instructed to deliver an increasing shock, from 15 to 450 volts in 15-volt increments, reading aloud the label at each level: Slight Shock, Moderate Shock, Strong Shock, Very Strong Shock, Intense Shock, Extreme Intensity Shock, Danger: Severe Shock, and XXX.

The learner's responses were pre-recorded. At 75 volts he grunted. At 120 volts he shouted that the shocks were painful. At 150 volts he demanded to be released. At 285 volts he gave only an agonized scream. After 315 volts there was silence. When participants expressed reluctance to continue, the experimenter used four verbal prods in sequence: Please continue; The experiment requires that you continue; It is absolutely essential that you continue; You have no other choice, you must go on.

In the baseline condition at Yale, 65 percent of participants delivered what they believed to be the maximum 450-volt shock, continuing beyond the silence that might indicate a cardiac emergency. Milgram was appalled; he had expected perhaps one or two percent of participants to reach the maximum level. He argued that obedient participants came to see responsibility for the harm as resting with the experimenter rather than with themselves, and he called this subordination of one's own moral agency to an authority the agentic state.

Situational Variations and Their Implications

Milgram's systematic variations revealed the architecture of obedience. Proximity was among the most powerful variables: obedience dropped from 65 percent in the baseline to 40 percent when the teacher could see the learner, and to 30 percent when the teacher had to hold the learner's hand on a shock plate. Institutional authority mattered: moving the experiment from Yale to a commercial building in Bridgeport reduced obedience to 48 percent. Peer defiance mattered even more: the presence of two rebel confederates who refused to continue at 150 volts reduced obedience to 10 percent.

These variations supported Milgram's situationist interpretation: obedience was not a function of individual character but of the structure of the situation. Normal people, in normal situations, behave normally. The same people in an authority structure with diffused responsibility and gradual commitment escalation will perform acts they would never have contemplated without that structure.

The studies have been criticized on ethical grounds, for subjecting participants to severe distress, and on interpretive grounds. David Mandel and others have questioned whether the World War II analogy holds; perpetrators of organized mass violence typically had ideological commitment and social permission, not merely authority compliance. Historian Christopher Browning's study of Reserve Police Battalion 101 found that men who did not want to participate in shootings were excused by their commander, suggesting that participation involved more than compliance with orders and that Milgram's model captures only part of the picture.

The Stanford Prison Experiment and Its Contested Legacy

Philip Zimbardo's Stanford Prison Experiment (1971) assigned 24 male college students randomly to prisoner or guard roles in a simulated prison in the basement of Stanford's psychology building. The study was scheduled to run two weeks. Zimbardo stopped it after six days, citing the guards' escalating cruelty and the prisoners' deteriorating psychological condition.

The experiment was presented as evidence that situational forces, the roles assigned by the prison structure, were sufficient to produce abusive behavior in normal, carefully screened students. It became one of the most cited studies in psychology, appearing in virtually every introductory textbook as a demonstration of the power of situations over character.

The experiment's reputation has deteriorated significantly. French researcher Thibault Le Texier's 2018 book "Histoire d'un mensonge" (History of a Lie) and his 2019 article in American Psychologist, drawing on previously unexamined archival material, documented that guards had been coached by Zimbardo to behave harshly, that the simulated situation had many ecologically invalid features, and that participants in the prisoner role were largely performing distress rather than experiencing it authentically. Le Texier's analysis suggested that the experiment was far closer to an improvised theatrical production than a controlled scientific study.

This does not eliminate the broader situationist point that roles and institutional structures shape behavior; that point is supported by many better-designed studies. But it substantially undermines the SPE as a piece of scientific evidence.

Attribution Theory: How We Explain Behavior

Attribution theory was developed by Fritz Heider in his 1958 book 'The Psychology of Interpersonal Relations.' Heider proposed that people function as naive scientists, seeking to understand the causes of behavior in order to predict and control their social environment. He distinguished between internal causes (personality, ability, intentions) and external causes (the situation, luck, social pressure).

Edward Jones and Keith Davis formalized the correspondent inference theory in 1965: observers attribute behavior to underlying dispositions when the behavior is freely chosen, violates social norms, is low in social desirability, and has distinctive effects. Harold Kelley's covariation model (1967) proposed that attributions are made by assessing consistency (does the person always behave this way?), distinctiveness (do they only behave this way in this situation?), and consensus (does everyone behave this way?).
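Kelley's three questions can be read as a simple decision rule. The sketch below is one illustrative encoding, not Kelley's own formalism: the `Observation` class, the function name, and the reduction of each dimension to a yes/no value are assumptions made for the example, and the low-consistency branch follows the common textbook reading rather than anything stated above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """Hypothetical yes/no summary of Kelley's three covariation questions."""
    consistency: bool      # does the person behave this way across occasions?
    distinctiveness: bool  # is the behavior specific to this situation?
    consensus: bool        # do other people behave the same way here?


def kelley_attribution(obs: Observation) -> str:
    """Return the attribution the covariation pattern most plausibly supports."""
    if not obs.consistency:
        # A one-off behavior is usually put down to passing circumstances.
        return "circumstance"
    if obs.consensus and obs.distinctiveness:
        # Everyone does it, and only in this situation: blame the situation.
        return "situation"
    if not obs.consensus and not obs.distinctiveness:
        # Only this person does it, and in every situation: blame the person.
        return "person"
    # Mixed patterns do not point clearly either way.
    return "ambiguous"


# The man in the queue: he always does this, everywhere, and nobody else does.
print(kelley_attribution(Observation(consistency=True,
                                     distinctiveness=False,
                                     consensus=False)))
```

Real attributions are graded rather than binary, so a fuller model would weigh the three dimensions instead of branching on them.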

The Fundamental Attribution Error

Lee Ross coined the term "fundamental attribution error" in 1977 to describe the systematic tendency to over-attribute behavior to dispositional factors and underweight situational factors. Jones and Harris (1967) demonstrated this in a paradigm where participants read essays advocating for or against Fidel Castro. When told the essay position had been randomly assigned (a clear situational constraint), participants still rated the essay writer's true attitude as corresponding to the essay position. Even knowing that the situation fully determined the behavior, they inferred disposition.

The fundamental attribution error has implications for moral judgment, hiring decisions, legal verdicts, and social policy. When we see poverty, we tend to attribute it to the character of the poor rather than to structural conditions. When a student fails, teachers may attribute it to lack of effort rather than inadequate instruction. The actor-observer asymmetry is the related finding that people explain their own behavior situationally ("I was late because the traffic was terrible") while explaining others' behavior dispositionally ("he is always late because he is disorganized").

Social Identity Theory

Henri Tajfel's experience as a Polish Jewish man who survived the Holocaust partly by passing as French shaped his lifelong research agenda. How did group membership generate prejudice and discrimination? Was it simply a matter of conflicting interests, as realistic conflict theory (Sherif) proposed, or was there something more fundamental in the psychology of group identity itself?

The minimal group paradigm experiments answered this question in a way that surprised even Tajfel. Participants divided into groups on the basis of expressed preference for Klee versus Kandinsky paintings, or even by coin flip, immediately allocated resources so as to favor their own group over the out-group, even when doing so meant giving up some absolute in-group gain. No history of conflict, no shared interests, no face-to-face interaction: mere categorical distinction was sufficient to generate in-group favoritism.
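The difference between favoring one's group in absolute terms and favoring it relative to the out-group is easier to see with numbers. The sketch below uses a hypothetical allocation matrix (the point values and function names are invented for illustration and are not taken from Tajfel's materials) and compares three possible allocation strategies.

```python
# Hypothetical allocation matrix: each option is
# (points to an in-group member, points to an out-group member).
# The numbers are illustrative only.
OPTIONS = [(19, 25), (17, 21), (15, 17), (13, 13), (11, 9), (9, 5), (7, 1)]


def max_ingroup_profit(options):
    """Pick the option that gives the in-group member the most points."""
    return max(options, key=lambda o: o[0])


def max_joint_profit(options):
    """Pick the option with the largest combined payout."""
    return max(options, key=lambda o: o[0] + o[1])


def max_difference(options):
    """Pick the option that maximizes the in-group's lead over the out-group."""
    return max(options, key=lambda o: o[0] - o[1])


print("max in-group profit:", max_ingroup_profit(OPTIONS))
print("max joint profit:   ", max_joint_profit(OPTIONS))
print("max difference:     ", max_difference(OPTIONS))
```

In this matrix the first two strategies select the same option, while maximizing the difference selects the option at the opposite end, where the in-group member receives far fewer points but still comes out ahead. A participant who drifts toward that end is trading absolute gain for relative advantage, which is the pattern described above.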

Social identity theory, developed with John Turner, proposed that the self-concept has two components: personal identity (individual traits and characteristics) and social identity (derived from group memberships). People seek to maintain positive social identity through positive distinctiveness of their in-groups. This motivation drives comparisons with out-groups and favoring of in-group members.

Self-categorization theory (Turner et al., 1987) extended this by showing that the level of self-categorization that is psychologically salient shifts with context. A person can categorize themselves as an individual, as a member of a particular group, or as a member of the broader human category. Intergroup behavior (stereotyping, discrimination) becomes more pronounced when group-level categorization is salient.

Cialdini's Principles of Persuasion

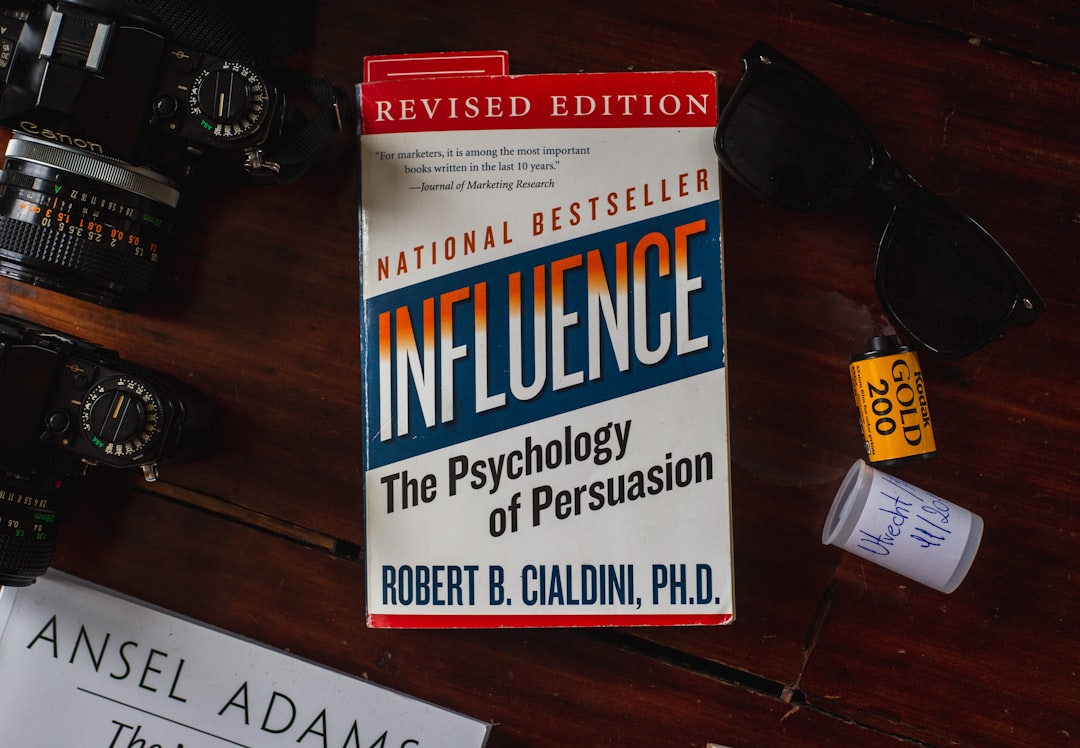

Robert Cialdini spent years studying professional influence practitioners, people whose livelihood depended on changing behavior: car salespeople, direct marketers, compliance professionals, fundraisers. He synthesized his observations into six principles, published in 'Influence: The Psychology of Persuasion' (1984), which has sold over five million copies and is required reading in marketing, sales, and behavioral economics.

- Reciprocity: people feel obligated to return favors, gifts, and concessions. Free samples, uninvited favors, and prior concessions in negotiation all trigger this norm.

- Commitment and consistency: once a position is publicly committed to, people feel internal and external pressure to remain consistent with it, even if the original conditions change. This underlies the foot-in-the-door technique.

- Social proof: in uncertain situations, people look to others' behavior as evidence of the correct course of action, explaining why reviews, testimonials, and crowd size affect choices.

- Authority: people defer to legitimate experts, and symbols of authority (titles, uniforms, credentials) trigger this deference even when the actual expertise is uncertain.

- Liking: people are more easily influenced by those they find attractive, similar to themselves, familiar, or who have paid them compliments.

- Scarcity: perceived limited availability increases desirability, explaining limited-time offers and artificially restricted supply.

A seventh principle, unity (shared identity), was added in Cialdini's later work 'Pre-Suasion' (2016). Influence is more effective when the influencer and the target share a perceived common identity.

The Bystander Effect

John Darley and Bibb Latane developed their theory of bystander intervention in response to the 1964 murder of Kitty Genovese in Queens, New York, which had been reported as observed passively by 38 neighbors. The story proved substantially inaccurate, but it motivated important research.

Darley and Latane (1968) conducted the seizure experiment: a participant overheard what sounded like a fellow participant having a medical emergency. When the participant believed they were the only person who could help, 85 percent intervened. When they believed five others also had access to the intercom, only 31 percent intervened, and responses were slower. Two mechanisms explain the effect.

Diffusion of responsibility: in a group, each member feels less personally responsible to act because others share the obligation. Pluralistic ignorance: in ambiguous situations, each person looks to others' reactions to interpret the event. If everyone else appears calm, each person infers the situation is probably not an emergency, not knowing that everyone else is engaged in the same inference from their calm appearance.

Subsequent research refined the conditions. Group members who know each other, who have relevant expertise, who feel personal responsibility, or whose attention is explicitly called to the victim are substantially more likely to intervene. The effect is real but context-dependent.

The Replication Crisis

The Reproducibility Project (Open Science Collaboration, 2015) attempted to replicate 100 studies from three top psychology journals. Only about 36 percent of the replications produced statistically significant results, and 39 percent were subjectively rated by the replication teams as having reproduced the original finding. Average effect sizes in replications were roughly half those in originals. This finding crystallized years of growing concern about the reliability of published findings.

Several factors contributed. Publication bias means null results are rarely published, so the literature systematically overstates effect sizes. Questionable research practices including collecting data until significance is reached, testing multiple dependent variables and reporting only significant ones, and failing to pre-specify hypotheses inflate false positive rates substantially above the nominal five percent. Small sample sizes mean individual studies are underpowered to detect true effects and highly sensitive to random sampling variation.
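The claim that collecting data until significance is reached inflates false positives can be checked with a small simulation. The sketch below is a minimal illustration under simplifying assumptions that are not in the text above: a one-sample z-test with known standard deviation, a true effect of exactly zero, and a hypothetical researcher who checks the result after every ten observations and stops at the first significant one. The function name and parameters are invented for the example.

```python
import math
import random

Z_CRIT = 1.96  # two-sided 5 percent threshold for a z-test


def false_positive_rate(looks, n_sims=4000, seed=0):
    """Share of simulated null studies that reach significance at any look.

    `looks` lists the sample sizes at which the researcher tests the data.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        total, n = 0.0, 0
        for look in looks:
            while n < look:
                total += rng.gauss(0.0, 1.0)  # the true effect is zero
                n += 1
            z = total / math.sqrt(n)  # z-statistic for mean 0, known sd 1
            if abs(z) > Z_CRIT:
                hits += 1  # "significant": stop collecting and report
                break
    return hits / n_sims


fixed = false_positive_rate([50])                    # one pre-planned test
peeking = false_positive_rate([10, 20, 30, 40, 50])  # test after every 10
print(f"fixed sample of 50:   {fixed:.3f}")
print(f"peeking every 10:     {peeking:.3f}")
```

With a single pre-planned test the false positive rate should land near the nominal five percent, while the peeking strategy should come out noticeably higher even though no individual test is miscalculated. Pre-specifying the sample size removes exactly this source of inflation.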

Some canonical social psychology findings have held up well under replication, including the Asch conformity effect and the fundamental attribution error paradigm. Others, including many ego depletion findings, several well-known behavioral priming effects, and the facial feedback hypothesis in its strong form, have replicated poorly.

Reform has followed. Pre-registration is increasingly required or encouraged by top journals. Registered reports, where peer review and publication commitment happen before data collection, eliminate publication bias for those studies. Sample sizes have increased. Open data and materials requirements improve scrutiny. The field is arguably stronger for the crisis, though the process of revision has been painful.

Cross-References

- For how cognitive biases interact with social influence in economic decisions, see /concepts/decision-making/what-is-keynesian-economics

- For how social development and identity formation unfold across childhood and adolescence, see /concepts/psychology-behavior/what-is-developmental-psychology

- For the anchoring bias and its relationship to social proof, see /concepts/psychology-behavior/anchoring-bias-explained

- For systems-level thinking about social norms as emergent phenomena, see /concepts/systems-complexity/emergence-explained-examples

References

- Asch, S.E. (1956). Studies of independence and conformity: I. A minority of one against a unanimous majority. Psychological Monographs, 70(9), 1-70.

- Milgram, S. (1963). Behavioral study of obedience. Journal of Abnormal and Social Psychology, 67(4), 371-378.

- Milgram, S. (1974). Obedience to Authority: An Experimental View. Harper and Row, New York.

- Festinger, L. (1957). A Theory of Cognitive Dissonance. Stanford University Press, Stanford.

- Festinger, L. and Carlsmith, J.M. (1959). Cognitive consequences of forced compliance. Journal of Abnormal and Social Psychology, 58(2), 203-210.

- Tajfel, H. and Turner, J.C. (1979). An integrative theory of intergroup conflict. In W.G. Austin and S. Worchel (Eds.), The Social Psychology of Intergroup Relations. Brooks/Cole, Monterey.

- Darley, J.M. and Latane, B. (1968). Bystander intervention in emergencies: diffusion of responsibility. Journal of Personality and Social Psychology, 8(4), 377-383.

- Cialdini, R.B. (1984). Influence: The Psychology of Persuasion. William Morrow, New York.

- Ross, L. (1977). The intuitive psychologist and his shortcomings: distortions in the attribution process. Advances in Experimental Social Psychology, 10, 173-220.

- Open Science Collaboration (2015). Estimating the reproducibility of psychological science. Science, 349(6251), aac4716.

- Le Texier, T. (2019). Debunking the Stanford Prison Experiment. American Psychologist, 74(7), 823-839.

- Heider, F. (1958). The Psychology of Interpersonal Relations. Wiley, New York.

Frequently Asked Questions

What did the Asch conformity experiments demonstrate?

Solomon Asch's conformity experiments, conducted at Swarthmore College and published in 1951 and 1956, are among the most influential studies in the history of social psychology. Asch wanted to test how social pressure from a group affects an individual's stated judgments even when the correct answer is objectively obvious.

The procedure was elegantly simple. A naive participant sat in a room with several confederates, people posing as participants but secretly working for the experimenter. The group was shown a standard line and three comparison lines of different lengths and asked to identify which comparison line matched the standard. The task was easy: the correct answer was unambiguous, and in control conditions participants answered correctly over 99 percent of the time.

In the critical trials, the confederates unanimously gave an obviously wrong answer. The naive participant, who answered last or second to last, had to decide whether to trust their own perception or conform to the group's stated judgment. Across his studies, approximately 75 percent of participants conformed at least once, giving an answer they could plainly see was wrong. The average conformity rate across all critical trials was roughly 37 percent. About 25 percent of participants never conformed at all.

Asch followed up with variants that revealed the social dynamics at work. A single dissenter, even if that dissenter gave a different wrong answer, dramatically reduced conformity, sometimes to near zero. This suggested that unanimity was the key factor, not majority size. Participants' post-experiment accounts revealed two distinct processes: some genuinely became uncertain about their perception when the whole group disagreed; others knew they were right but went along to avoid standing out.

Asch's experiments established the distinction between informational influence, changing one's beliefs based on others' presumed superior knowledge, and normative influence, conforming to avoid social rejection while privately maintaining one's own view. Both processes operate in everyday judgment about far less clear-cut questions than line lengths.

What did Stanley Milgram's obedience studies reveal about human behavior?

Stanley Milgram's obedience experiments, conducted at Yale University beginning in 1961, produced findings so disturbing that they have been reanalyzed, contested, and debated ever since. Milgram designed the studies partly in response to the trial of Adolf Eichmann, a senior Nazi administrator whose defense was that he had merely been following orders. Milgram wanted to investigate whether ordinary Americans would similarly defer to authority figures.

Participants believed they were taking part in a study of learning and memory. They were assigned the role of teacher and told to administer electric shocks of increasing intensity to a learner (a confederate) each time the learner gave a wrong answer. The shock generator had switches labeled from 15 volts to 450 volts, with descriptive labels ranging from Slight Shock to Danger: Severe Shock and finally XXX. In fact no shocks were delivered; the learner's responses were scripted and pre-recorded.

As the shock levels increased, the learner's protests escalated: grunts of pain, demands to stop, complaints about a heart condition, and eventually ominous silence. When participants hesitated, the experimenter used standardized prods: Please continue; The experiment requires that you continue; It is absolutely essential that you continue; You have no other choice, you must go on. In Milgram's baseline condition, 65 percent of participants delivered what they believed to be the maximum 450-volt shock, continuing despite the learner's evident distress.

Milgram varied conditions systematically. Proximity mattered enormously: obedience dropped sharply when the teacher could see the learner, and further when they had to physically press the learner's hand onto a shock plate. Institutional authority mattered: moving the experiment from Yale to a run-down commercial building reduced obedience. Having a peer rebel dramatically reduced obedience.

Milgram's conclusion was that ordinary people will perform acts that violate their moral principles when embedded in a legitimate authority structure, a situationist insight that challenged the common tendency to explain evil behavior through character defects. The ethical controversy over his methods, especially the psychological distress inflicted on participants, permanently changed research ethics standards and made his studies impossible to fully replicate today.

What is cognitive dissonance and how does it affect behavior?

Cognitive dissonance is the psychological discomfort that arises when a person holds two or more conflicting cognitions, or when their behavior conflicts with their beliefs or attitudes. Leon Festinger introduced the theory in his 1957 book 'A Theory of Cognitive Dissonance,' and it became one of the most generative frameworks in social psychology, spawning thousands of studies.

Festinger and his colleagues had actually infiltrated a doomsday cult that believed the world would end on a specific date, observing the group's reaction when the prophecy failed. Rather than abandoning their beliefs, the group members who had made public commitments (quit jobs, given away possessions) became more zealous missionaries for their faith after the disconfirmation. Festinger argued that the committed members experienced dissonance between their public commitment and the evidence, and resolved it by increasing their certainty and seeking social validation through proselytizing. These observations were published in 'When Prophecy Fails' (1956).

The laboratory demonstration of dissonance reduction came from what is called the induced compliance paradigm. Festinger and Merrill Carlsmith (1959) had participants perform an incredibly boring task and then paid them either one dollar or twenty dollars to tell the next subject that the task was enjoyable. When later asked their true opinion of the task, participants who had been paid only one dollar rated it as more interesting than those paid twenty dollars. The explanation: those paid one dollar had insufficient justification for lying (low external justification), so they experienced dissonance and reduced it by convincing themselves they actually had found the task somewhat interesting. Those paid twenty dollars had sufficient external justification for their lie and experienced less dissonance.

Dissonance theory has applications in understanding smoking despite knowing the health risks, justifying harmful behavior toward others, the psychology of sunk cost decisions, and why people who undergo difficult initiations value the groups they join more highly. Elliot Aronson's self-concept version of dissonance theory holds that the distress arises specifically from threats to one's self-image as a competent and moral person, which is why dissonance is strongest after freely chosen behavior that harms others.

What is social identity theory and why does it matter?

Social identity theory, developed by Henri Tajfel and John Turner at Bristol University in the 1970s and 1980s, proposes that a significant part of a person's self-concept derives from their membership in social groups. The theory was motivated by Tajfel's own wartime experience as a Polish Jewish man who survived the Holocaust partly by concealing his identity, and by his lifelong interest in the psychology of prejudice and intergroup conflict.

The minimal group paradigm experiments provided the foundation. Tajfel and colleagues divided participants into groups on the most trivial possible basis: preference for paintings by Klee versus Kandinsky, or in some studies, by coin flip. Participants then allocated points (worth real money) between anonymous members of their own group (in-group) and the other group (out-group). The findings were striking: even in these meaningless groups, participants consistently favored in-group members, and often sacrificed absolute in-group gain to maximize the relative advantage over the out-group, choosing allocations that gave their group fewer points in absolute terms but more than the out-group.

Social identity theory proposes that people seek to maintain a positive self-concept through positive distinctiveness of their in-group. Belonging to groups seen as better than out-groups bolsters self-esteem. This motivation drives in-group favoritism and, in more extreme forms, prejudice and discrimination. Individuals can respond to a negative group identity by leaving the group (social mobility), by reframing the comparison dimension (arguing that the supposedly inferior trait is actually superior), or by working to improve the group's status through collective action.

Self-categorization theory, Turner's extension of the framework, explains how the same individual can behave as a unique personal self in some contexts and as a representative member of a social category in others, depending on which level of self is made psychologically salient by situational cues. Together these theories inform understanding of political polarization, nationalism, organizational culture, and virtually any context where group membership shapes identity and behavior.

What is the bystander effect and what actually happened with Kitty Genovese?

The bystander effect is the finding that individuals are less likely to offer help in an emergency when other people are present, and that the likelihood of help decreases as the number of bystanders increases. The psychological mechanisms underlying this effect were worked out by John Darley and Bibb Latane in a series of experiments conducted after the 1964 murder of Kitty Genovese in New York City.

The Genovese case was reported in a famous 1964 New York Times article claiming that 38 witnesses watched the 35-minute attack from their apartment windows without calling the police, a framing that became iconic in social psychology. Later journalism, particularly a 2016 investigation by Genovese's brother William, revealed that the original reporting was substantially inaccurate. The attack took place in two stages, and no single witness could see all of it; some witnesses did call the police; the 38-witness claim was inflated. The attack was genuinely horrific, but the narrative of passive, heartless New Yorkers observing a prolonged murder was more sensational than accurate.

Darley and Latane's experimental work nonetheless demonstrated real and robust psychological mechanisms. In their classic 1968 study, participants believed they were in a discussion with one to five other participants over an intercom. One voice (a confederate) staged a seizure. When the participant believed they were the only person in the network, 85 percent reported the emergency within six minutes. When participants believed five others were also listening, only 31 percent intervened. Diffusion of responsibility explains part of the effect: each person feels less personally obligated to act because others share the obligation. Pluralistic ignorance is a second mechanism: in ambiguous situations, people look to others' calm reactions to define the situation as not an emergency, each drawing the same false inference from everyone else's inaction.

Subsequent research has qualified the effect. Bystanders who know the victim, who have relevant skills, or who are in a cohesive group are more likely to help. The effect is real but context-dependent, not a universal feature of human nature as the simple Genovese myth implied.

What is the replication crisis in social psychology and what does it mean for the field?

The replication crisis refers to the finding that a substantial proportion of published findings in social psychology and related fields cannot be reproduced when independent researchers attempt to replicate them under similar conditions. The crisis crystallized with the publication of the Reproducibility Project: Psychology in Science in 2015, a large-scale collaborative effort coordinated by Brian Nosek at the University of Virginia.

The project attempted to replicate 100 studies from three leading psychology journals published in 2008. Using the original study designs, materials, and analysis plans, the replication teams found that only about 36 percent of the replications produced statistically significant results, and 39 percent were subjectively rated as having reproduced the original finding. The average effect size in replications was roughly half that in originals. These findings were alarming to a field that had built cumulative knowledge on the assumption that published results were real.

Several factors contributed to the crisis. Publication bias, the tendency for journals to publish positive results and reject null results, means the published literature systematically overstates effect sizes. Questionable research practices, sometimes called p-hacking, include collecting data until significance is reached, selectively reporting conditions or dependent variables, and failing to correct for multiple comparisons. These practices are not necessarily deliberate fraud; they often reflect ambiguity about best practice and unconscious confirmation bias. Small sample sizes leave studies underpowered, so that significant results are more likely to be false positives or inflated estimates even without deliberate manipulation.

The crisis has prompted substantial reform in how psychological research is conducted and reported. Pre-registration, where researchers specify hypotheses, methods, and analysis plans before data collection, prevents post-hoc rationalization. Registered Reports, a journal format where peer review happens before data collection and publication is committed regardless of results, directly counteracts publication bias. Open data and materials requirements improve transparency. The replication crisis, painful as it has been, is arguably a sign of a maturing science confronting its own limitations and developing stronger self-corrective mechanisms.