Three months before Google's August 2018 core algorithm update, a team of engineers at the company published a paper titled "Measuring News Quality" at a technical conference. It described a framework for evaluating whether news content was trustworthy, accurate, and authoritative - separate from whether it was popular or widely shared. The paper, largely ignored by the SEO community at the time, turned out to be a preview of the company's direction.

When the update rolled out on August 1, 2018, the SEO community named it the "Medic Update" because health and wellness sites took the most visible hits. Hundreds of sites lost between 40% and 80% of their organic traffic within 48 hours. But the pattern was not random.

The sites that lost traffic shared characteristics: unsigned articles, no author credentials, aggressive health claims lacking medical sources, minimal external citation, and no identifiable editorial process. The sites that gained traffic - Mayo Clinic, Healthline, the Cleveland Clinic - shared different characteristics: named authors with medical credentials, rigorous source citation, explicit fact-checking processes, and institutional authority built over years.

Google had not found a new way to evaluate content. It had found a way to encode into its algorithm what careful human readers had always known: that the source of information, the qualifications of the person providing it, and the rigor with which claims are supported determine whether information should be trusted.

The framework that emerged from this period - E-E-A-T - is the most important conceptual lens for understanding how search engines evaluate content quality, and why some sites consistently outrank others regardless of technical optimization.

The E-E-A-T Framework in Depth

Google's Search Quality Rater Guidelines, a document of nearly 170 pages used to train human quality raters who evaluate search results, describes four dimensions of content quality:

Experience - the newest dimension, added in December 2022 - refers to the content creator's direct, first-hand engagement with the subject. Expertise refers to formal knowledge, training, or demonstrably deep understanding. Authoritativeness refers to how the creator or publication is regarded by others in the field. Trustworthiness is described in the guidelines as the most foundational dimension - a page that is untrustworthy fails regardless of its other qualities.

These are not separate scores. They interact: an author who has expertise in a field and a track record of accurate, well-sourced writing accumulates authority over time, and that authority in turn becomes a trust signal.

| E-E-A-T Dimension | What It Measures | Key Signals | Matters Most For |
|---|---|---|---|
| Experience | First-hand engagement with subject | Original photos, granular details, timestamps | Product reviews, travel, personal finance |
| Expertise | Depth of knowledge | Author credentials, methodology, citations | Medical, legal, technical content |
| Authoritativeness | External recognition | Inbound links, media mentions, citations | Competitive informational queries |
| Trustworthiness | Accuracy and honesty | Sources cited, editorial corrections, contact info | YMYL content (health, finance, safety) |

Experience: First-Hand Knowledge That Cannot Be Faked

The December 2022 addition of "Experience" to the original E-A-T framework addressed a problem that became acute with the rise of AI-generated content: expertise and credentialing could be claimed without the grounding in actual lived interaction with a subject that produces genuinely useful knowledge.

A physician can discuss the pharmacology of a drug. A patient who has taken that drug for three years knows something the physician's clinical training does not capture: what the daily experience of side effects is like, how the drug interacts with specific life patterns, what workarounds patients develop for administration inconveniences. Both kinds of knowledge are valuable; they are not interchangeable.

The experience signal matters differently across content types:

For product reviews, experience means owning, using, and testing the actual product. A review of hiking boots written by someone who hiked 200 miles in them knows what the leather does at mile 50 compared to mile 5, whether the ankle support actually matters on specific terrain types, and how the waterproofing holds up after multiple crossings of shallow streams. These details are not in the product specifications and cannot be synthesized from other reviews.

For travel content, experience means having visited the place at the relevant time. The restaurant recommendations, the practical advice about transportation, the realistic assessment of how tourist-friendly an area is - these are grounded in a visit that can be documented.

For financial and investment content, experience means having actually navigated the financial situations being described: having executed the strategy, made the trade, filed the paperwork, dealt with the consequences. This is distinct from academic understanding of the concepts.

The signals Google's quality raters use to assess experience: specific, granular details that require direct interaction to know; original photographs showing personal engagement with the subject; timestamps and dates indicating when the experience occurred; acknowledgment of failures, surprises, or outcomes that differed from expectations; and comparisons drawn from the author's own experience with alternatives.

Expertise: Verified Knowledge

The expertise dimension asks whether the content creator has the background - formal or demonstrated - to be a reliable source on the topic.

For YMYL content (Your Money or Your Life - medical, financial, legal, safety, and related topics where poor information could cause real harm), expertise expectations are high and credentials are important. A medical article on drug interactions should involve a physician, pharmacist, or nurse practitioner in its creation or review. A piece on tax law should involve a CPA or tax attorney. The stakes of incorrect information justify this standard.

For non-YMYL content - cooking, travel, home improvement, entertainment, most technology content - expertise can be demonstrated through a track record of accurate, detailed content rather than formal credentials. A food blogger who has published 400 tested recipes with detailed notes on technique, substitutions, and failure modes is demonstrating expertise through output, not degree.

The critical distinction: expertise in the guidelines is about the creator's relationship to the content, not their biography. An author page listing impressive credentials attached to a shallow, poorly sourced article does not create expertise signals. A well-researched, carefully sourced, nuanced article by an author with modest stated credentials may score better on expertise because the content itself demonstrates the knowledge.

Example: Wirecutter, the product review publication acquired by the New York Times in 2016, built its authority and expertise signals through methodology: for each product review, the testing process is described in detail, including the specific tests conducted, the comparison pool, the duration of testing, and the criteria applied. A headphones review might describe 40 hours of listening across 15 music genres, commute noise testing on specific subway lines, and call clarity testing with standardized phrases.

The methodology documentation demonstrates expertise because it shows the reviewers did something that required knowledge and judgment to design.

Authoritativeness: Recognition That Must Be Earned

Authoritativeness cannot be self-declared. The guideline language is explicit: authority comes from how the creator or site is regarded by others in the relevant field or community.

The primary external signals:

Inbound links from authoritative sources. When a page about cardiovascular health is cited by the American Heart Association, Johns Hopkins Medicine, and the New England Journal of Medicine, those citations are authority signals that Google can measure. The quality of linking sources matters more than quantity: one link from a highly authoritative domain is worth more than fifty links from low-authority sites.

Citations and mentions in authoritative publications. An author whose research is cited in academic papers, whose expertise is sought by journalists writing for major publications, or whose work is referenced in industry reports has accumulated external authority that Google's systems can attempt to measure.

Institutional affiliation. Writing associated with well-regarded institutions - hospitals, universities, research organizations, established media outlets - benefits from the institutional authority. This is a trust transfer from the institution to the content, which is why institutional affiliations are worth displaying clearly.

Awards and peer recognition. Professional associations, industry conferences, and peer review processes are external quality signals. An author who has received professional recognition from a relevant association, been invited to speak at industry conferences, or whose work has undergone peer review has external validation of their standing.

Historical performance. Sites that have consistently published accurate, reliable content build authority incrementally. This is why long-established sites in a niche often have authority advantages over newer entrants even when the newer content is objectively better on some dimensions - the track record matters.

Example: The authority differential between NerdWallet (founded 2009, currently one of the most authoritative personal finance sites in the US) and a newer personal finance site is not primarily about content quality in any given article. It is about years of accumulation: thousands of inbound links from financial institutions, government agencies, major media outlets; millions of satisfied users who return; professional recognition; and a track record of updating content as regulations and product offerings change.

A new site can produce excellent individual articles, but cannot rapidly acquire this accumulated authority.

Trustworthiness: The Foundation Layer

The guidelines describe trustworthiness as the single most important dimension of E-E-A-T, the foundation on which the others rest. A site can have apparent experience, expertise, and even authority, but if it is untrustworthy - if its information is inaccurate, its advertising is deceptive, or its motivations are hidden - it fails the quality test.

The trustworthiness signals Google evaluates include:

Accuracy and correction practices. Sites that correct errors promptly and transparently, that acknowledge uncertainty when it exists, and that update content when new information emerges signal trustworthiness. Sites that do not correct errors, that present uncertain information as established fact, or that retroactively delete rather than correct erroneous content signal the opposite.

Transparency about identity. Who runs the site? Who wrote the content? What are their qualifications and potential conflicts of interest? Sites that answer these questions clearly - with author bios, about pages, editorial policies, and contact information - are more trustworthy than those that obscure their identity or present anonymous content.

Disclosure of commercial relationships. Content that recommends products or services without disclosing that the recommendations are paid, or that reviews products while hiding affiliate relationships, fails on trustworthiness even if the underlying recommendations are accurate. The guidelines are explicit that undisclosed commercial influence is a trust negative.

Technical trust signals. HTTPS is baseline. Sites still running HTTP in 2024 are signaling indifference to user data security. Functional links, working contact forms, and the absence of broken experiences all contribute to the low-level trust sense that a site is maintained by people who care about it.

What Comprehensive Content Actually Means

The phrase "comprehensive content" appears constantly in SEO guidance and is rarely defined with enough precision to be useful. Comprehensiveness is query-relative: what is comprehensive for a simple factual query is radically different from what is comprehensive for a complex instructional query.

"What year was the Eiffel Tower built?" has a definitive, short answer. "How do I negotiate a salary increase?" requires covering psychological preparation, research methodology, timing strategy, specific language approaches, common objections and responses, what to do if the request is denied, and how the approach differs by industry and company size.

The useful way to think about comprehensiveness is in terms of query intent satisfaction: does the content fully answer the question the user was trying to answer, plus the closely related questions they would likely have next?

The Subtopic Coverage Test

When Google's systems model what comprehensive coverage of a topic looks like, they do so by analyzing the content of pages that users are satisfied with for related queries. This creates a de facto model of what a comprehensive treatment includes.

The practical application: before writing on a topic, research what established comprehensive resources on the topic cover. What subtopics appear in the top-ranking content? What questions appear in "People Also Ask" boxes? What terms appear in related searches? This research reveals the expected structure of comprehensive coverage, identifying gaps to address.

The goal is not to copy the competitor's structure but to ensure you have addressed the full scope of the topic from your own perspective. Missing a major subtopic that all competitors cover signals incompleteness to both users and search systems.
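The gap check described above can be sketched in a few lines of Python. This is only an illustration: the subtopic lists are assumed to have been collected by hand from top-ranking pages, and the function name `subtopic_gaps` and the 60% threshold are hypothetical choices, not anything Google publishes.

```python
from collections import Counter


def subtopic_gaps(own_subtopics, competitor_pages, min_share=0.6):
    """Return (subtopic, page_count) pairs that at least `min_share` of
    competitor pages cover but our own outline does not, widest coverage first."""
    own = {s.strip().lower() for s in own_subtopics}
    counts = Counter()
    for page in competitor_pages:
        # Count each subtopic once per page, regardless of repeats.
        counts.update({s.strip().lower() for s in page})
    threshold = min_share * len(competitor_pages)
    gaps = [(topic, n) for topic, n in counts.items()
            if n >= threshold and topic not in own]
    return sorted(gaps, key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    # Hypothetical outlines for the salary-negotiation query.
    competitors = [
        ["Research market rates", "Timing", "Handling objections"],
        ["Research market rates", "Scripts", "Handling objections", "If denied"],
        ["Timing", "Handling objections", "If denied"],
    ]
    mine = ["Research market rates", "Scripts"]
    for topic, n in subtopic_gaps(mine, competitors):
        print(f"{topic}: covered by {n} of {len(competitors)} pages")
```

The output is a list of candidate gaps to review, not an outline to copy; whether a given subtopic belongs in your treatment remains an editorial judgment.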

Semantic Depth and Vocabulary

Language models can assess whether content uses appropriate vocabulary for the level of expertise claimed. A medical article about hypertension that never uses the terms "systolic," "diastolic," "antihypertensive," or "vasodilation" is unlikely to be authored by someone with medical training, even if the article never explicitly claims medical authorship.

The appropriate vocabulary for a domain carries both signal value (it indicates the author knows the field) and practical value (readers with domain knowledge appreciate precision; simplified vocabulary for complex concepts is appropriate for lay audiences but should coexist with domain-appropriate terminology when the audience is mixed).

The semantic richness principle: expert content uses specific, precise vocabulary alongside clear explanation of that vocabulary for non-expert readers. This serves both populations and signals that the author understands both the domain and the audience.

Evidence, Sources, and the Citation Economy

The citation practice of content is one of the clearest indicators of commitment to accuracy. Content that makes factual claims without sources is asking the reader to trust the author's memory and judgment. Content that cites primary sources - original research, official data, documented case studies - provides the reader a path to verify claims and signals that the author's relationship to the information is accountable.

The hierarchy of evidence quality is: primary research > institutional/government data > peer-reviewed analysis > authoritative secondary sources > journalism > opinion. Using the highest quality source available for each claim, and being transparent about where you are drawing on lower-quality sources when higher-quality sources are not available, is a trustworthiness signal.

User Behavior as an Indirect Quality Signal

Search engines cannot directly observe what users think of content. They can observe what users do, and specific behaviors correlate reliably with quality assessment.

The Satisfaction Pattern

When a user searches for something, clicks a result, spends time reading it, and does not return to search results to try a different result, the behavioral pattern suggests the content satisfied the query. When a user searches, clicks a result, immediately returns to results, and tries a different result (called pogo-sticking), the pattern suggests the content failed to satisfy.

These behavioral signals are noisy - a user might return to results to look for additional perspectives even after being satisfied, or might stay on a page for a long time without actually reading it. But at scale, across millions of queries, the aggregate signal is informative. Pages that consistently produce satisfied-user behavioral patterns tend to rank well over time; pages that produce pogo-sticking tend to decline.

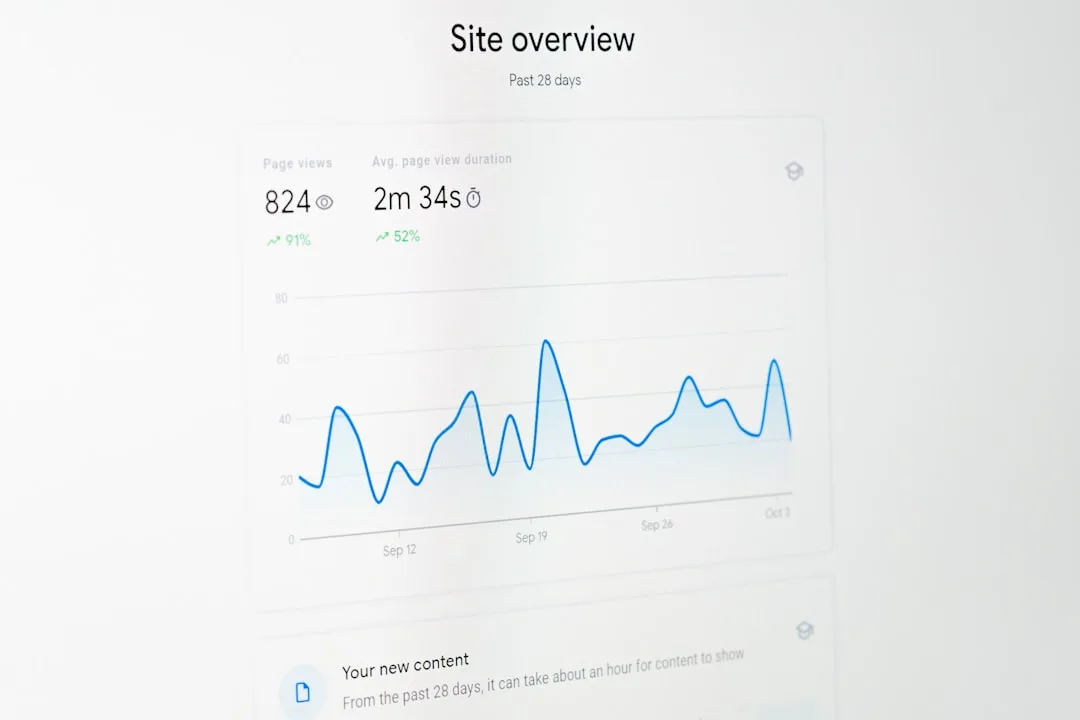

Dwell time - how long a user spends on a page before returning to search results - correlates positively with content quality for informational queries. A user who spends four minutes on a page reading about how to treat a bee sting is likely getting useful information. A user who spends twelve seconds and returns is not.
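To make the pogo-sticking idea concrete, here is a minimal sketch that computes a quick-return rate per URL from a visit log. The `Visit` record, its field names, and the 15-second cutoff are assumptions made for illustration; site owners do not see Google's own behavioral data, so in practice the input would be an approximation from your own analytics.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Visit:
    url: str
    dwell_seconds: float
    returned_to_results: bool  # hypothetical flag: user went back to the results page


def pogo_stick_rates(visits, quick_return_seconds=15.0):
    """Share of visits per URL that returned to results before the cutoff."""
    totals = defaultdict(int)
    quick_returns = defaultdict(int)
    for v in visits:
        totals[v.url] += 1
        if v.returned_to_results and v.dwell_seconds < quick_return_seconds:
            quick_returns[v.url] += 1
    return {url: quick_returns[url] / totals[url] for url in totals}


if __name__ == "__main__":
    log = [
        Visit("/bee-sting-treatment", 240, False),
        Visit("/bee-sting-treatment", 12, True),
        Visit("/thin-page", 12, True),
        Visit("/thin-page", 8, True),
    ]
    for url, rate in pogo_stick_rates(log).items():
        print(f"{url}: {rate:.0%} quick returns")
```

A long visit that ends with a return to results is deliberately not counted, which reflects the point above that returning after several minutes of reading is an ambiguous signal.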

Click-Through Rate as a Quality Signal

When a page appears in search results and users consistently choose it at higher rates than its position would predict, this suggests the title and description are highly relevant to user intent - which itself reflects alignment between the content and what users are looking for.

CTR is influenced by many factors beyond content quality: brand recognition, result format (whether structured data produces rich snippets), and the competitive set of results on the page. But consistently high or low CTR for given positions and queries is a signal that accumulates over time.
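One way to look for pages that outperform or underperform their position is to compare observed CTR with a baseline for that position. The sketch below assumes rows exported by hand as `(query, average_position, impressions, clicks)` tuples; the baseline percentages are placeholders, not published figures, and should be replaced with a curve derived from your own data.

```python
def ctr_lift(rows, baseline_ctr):
    """Compare observed CTR with a baseline CTR for the rounded position.

    Returns (query, observed, expected, ratio) tuples; rows with no
    impressions or no baseline for their position are skipped.
    """
    results = []
    for query, position, impressions, clicks in rows:
        expected = baseline_ctr.get(round(position))
        if impressions == 0 or not expected:
            continue
        observed = clicks / impressions
        results.append((query, observed, expected, observed / expected))
    return results


if __name__ == "__main__":
    # Placeholder baseline: position -> typical CTR (assumed values).
    baseline = {1: 0.28, 2: 0.15, 3: 0.10}
    rows = [
        ("salary negotiation script", 3.2, 1000, 150),
        ("how to ask for a raise", 1.4, 2000, 400),
    ]
    for query, observed, expected, ratio in ctr_lift(rows, baseline):
        print(f"{query}: {observed:.1%} observed vs {expected:.1%} expected "
              f"({ratio:.2f}x)")
```

A ratio well above or below 1.0 on a single day means little; as the text notes, it is the consistent pattern over time that carries information.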

Practical Quality Improvement

The Content Audit Process

Quality improvement begins with identifying what exists and where it falls short. A content audit for quality purposes examines:

Traffic-to-satisfaction alignment. Pages with traffic but high bounce rates may have appealing titles whose content fails to deliver on the promise. Pages with good dwell time but low traffic may have quality content that needs better discoverability signals.

E-E-A-T gaps. Are there author bios? Are they substantive, including credentials relevant to the content topic? Are sources cited? Is there an editorial policy explaining the review process? Are commercial relationships disclosed?

Depth gaps. Which major subtopics of a subject does the content not address? Which related questions are users asking that the content does not answer?

Currency. Is the content still accurate? Has the underlying situation changed (new regulations, updated research, different product availability) in ways the content does not reflect?

Technical quality. Core Web Vitals performance, mobile experience, internal linking structure, structured data implementation.
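The audit questions above lend themselves to a simple per-page checklist. The following sketch is one possible shape for it: the `PageAudit` fields are hypothetical names covering only a subset of the checks, and counting failed checks is a crude prioritization heuristic rather than a quality score.

```python
from dataclasses import dataclass, fields


@dataclass
class PageAudit:
    url: str
    has_named_author: bool
    has_author_credentials: bool
    cites_sources: bool
    discloses_commercial_ties: bool
    reviewed_within_12_months: bool


def failed_checks(audit):
    """Names of the boolean checks that this page fails."""
    return [f.name for f in fields(audit) if getattr(audit, f.name) is False]


def prioritize(audits):
    """Pages with the most failed checks first."""
    return sorted(audits, key=lambda a: len(failed_checks(a)), reverse=True)


if __name__ == "__main__":
    pages = [
        PageAudit("/guide-a", True, True, True, True, False),
        PageAudit("/guide-b", False, False, True, False, False),
    ]
    for page in prioritize(pages):
        print(page.url, failed_checks(page))
```

Filling in the flags is still manual review work; the code only keeps the results consistent and sortable across a large content inventory.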

Authorship as Infrastructure

The most durable quality investment is building real authorship infrastructure: named authors with documented credentials and experience, editorial processes that can be described and displayed, and a track record of accurate, sourced content over time.

This is not a quick fix. It requires hiring or developing writers with genuine expertise, building editorial review processes, and maintaining the discipline to cite sources, disclose relationships, and correct errors consistently. Sites that have built this infrastructure have authority advantages that persist through algorithm updates because the signals they produce are based on real quality, not optimization tricks.

Example: Healthline Media, acquired by Red Ventures in 2019, became the authoritative consumer health information resource in the United States primarily through investment in medical review infrastructure. Every article is reviewed by a licensed healthcare professional relevant to the topic.

Reviewer credentials are displayed prominently. The medical review process is described explicitly on a dedicated page. The result is that Healthline's content carries implicit credibility signals, so algorithm updates that target thin or untrustworthy health content tend to reinforce its position rather than threaten it.
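Authorship details can also be exposed in machine-readable form with schema.org structured data. The sketch below builds a minimal JSON-LD `Article` object with a `Person` author using Python's standard `json` module. The function name, the author, and the example.com URL are hypothetical, and nothing here should be read as a claim that this markup is itself a ranking factor; it simply states on the page what the visible byline already says.

```python
import json


def article_jsonld(headline, author_name, author_title, author_url,
                   published, modified):
    """Build schema.org Article markup with a named Person author as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,  # ISO 8601 dates
        "dateModified": modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
            "url": author_url,  # link to the author's bio page
        },
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # All values below are made up for illustration.
    print(article_jsonld(
        headline="Understanding Blood Pressure Readings",
        author_name="Jane Doe",
        author_title="Registered Nurse",
        author_url="https://example.com/authors/jane-doe",
        published="2024-01-15",
        modified="2024-06-01",
    ))
```

The resulting string would typically be embedded in the page inside a `script` element of type `application/ld+json`.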

The Helpful Content Framework

Google's August 2022 introduction of the "Helpful Content" system made explicit what had been implicit in quality signals: content should be written to genuinely help the reader, not to perform for search engines. Content that is primarily organized around keywords, that satisfies apparent search intent without genuinely addressing the underlying user need, or that represents a thin pass over a topic to capture traffic increasingly faces headwinds.

The useful test, drawn from Google's own public guidance: if search did not exist, would you publish this content anyway? Would it be valuable to an audience that found it through channels other than search? Content that exists solely to capture search traffic and has no other audience value tends to produce the behavioral signals that quality systems devalue.

See also: How Search Engines Work, SEO Measurement Explained, and Internal Linking Strategy Explained.

What Google's Research and Quality Rater Guidelines Reveal

The most authoritative source for understanding how Google evaluates content quality is not third-party speculation but Google's own documentation and the public statements of named Google engineers and researchers.

The Quality Rater Guidelines as a Ranking Blueprint: Google's Search Quality Rater Guidelines - roughly 170 pages, publicly available as a PDF through Google's documentation - are not a description of the ranking algorithm but a training document for the human quality raters whose assessments are used to evaluate and improve ranking quality. The distinction matters: the guidelines describe what Google is trying to achieve with its ranking system, which guides what the algorithm is trained toward.

The 2023 version of the guidelines introduced substantial expansion of the "Experience" dimension, with specific guidance that raters should look for "first-hand personal experience with the main content topic" and that "experience is especially important for topics like product reviews, travel, health, and other topics where real-world experience matters." This addition was a direct response to the proliferation of AI-generated content that could imitate expertise signals without the underlying experience.

Pandu Nayak and the Development of the Quality Framework: Pandu Nayak, Google's Vice President of Search, has been the most senior Google executive to discuss content quality evaluation publicly. In a 2018 interview with Search Engine Land following the August core update, Nayak explained that Google's quality evaluation considers whether "content demonstrates expertise, knowledge, and skills needed to author the content," whether the content is "accurate," and whether the page has a "positive reputation." Nayak's framing explicitly connected Google's quality signals to the question of whether a knowledgeable friend would recommend the source - a standard that evaluates the whole information-production system, not just individual articles.

Danny Sullivan and the Helpful Content Framework: Danny Sullivan, Google's Search Liaison and a prominent SEO journalist before joining Google, has provided the most detailed public explanations of the Helpful Content system introduced in August 2022. In a Search Engine Roundtable interview following the update, Sullivan described the system as a site-wide classifier: "It's not about individual articles, it's more about whether the overall site is oriented toward being helpful to users versus primarily oriented toward search traffic." Sullivan's description confirmed what the update's behavior suggested - that a site with a majority of high-quality content could still be affected if a substantial proportion of its content was assessed as unhelpful, making content pruning and quality auditing a strategic priority for content-heavy sites.

The 2022 and 2023 Core Update Patterns: Following the August 2022 helpful content update and subsequent core updates through 2023, Google's Search Liaison account tweeted a series of clarifications (archived in Search Engine Land's algorithm update history) that identified specific categories of content most affected. Pages affected by the 2023 core updates shared characteristics documented by Lily Ray (SEO Director at Amsive Digital, who analyzed hundreds of affected sites): high rates of "staff" bylines rather than named individual authors, thin coverage of claimed topics relative to competing pages, and external link profiles with few citations from domain-relevant authoritative sources.

These observations align closely with the E-E-A-T framework as described in the Quality Rater Guidelines, which suggests that the guidelines are a useful predictor of algorithm behavior.

Google's "Search Off the Record" Podcast: Google's official podcast, hosted by Googlers John Mueller, Gary Illyes, and Martin Splitt, has addressed content quality signals in multiple episodes. A 2022 episode on content quality included Illyes explaining that Google's systems look for "clear signs of expertise" such as citations from other expert sources, specific technical vocabulary used appropriately, and content depth that matches the complexity of the topic.

Illyes noted that "a page that only covers the surface of a topic when better, more comprehensive resources exist is unlikely to be considered high quality, even if it is technically accurate."

Real-World Content Quality Case Studies

The theory of E-E-A-T becomes concrete through documented histories of sites that gained or lost rankings following changes to their content quality signals.

Healthline's Medical Review Infrastructure: Healthline Media, now one of the most authoritative consumer health information sources in the US, built its E-E-A-T signals systematically after observing the impact of Google's 2018 Medic Update on health content competitors. Every article published on Healthline since 2019 carries a medical review byline - a licensed healthcare professional (physician, pharmacist, registered nurse, or other credentialed provider relevant to the topic) who has reviewed the article's factual claims.

The reviewers' credentials and review process are described in detail on a dedicated "Medical Affairs" page. Healthline's Founder and CEO Nat Williams described the investment in a 2019 Business Insider interview as "significant but non-negotiable" after the competitive landscape shifted following the Medic Update. The result: Healthline overtook WebMD as the most-visited health information site in the US by 2020, a competitive inversion that tracked directly with the quality infrastructure investment.

Forbes Advisor's Recovery from Quality Issues: Forbes Advisor, the financial advice vertical of Forbes Media, provides a case study in the risks of publishing at scale without quality infrastructure. A 2023 analysis by Lily Ray at Amsive identified Forbes Advisor as having a significant proportion of articles authored by "staff" bylines rather than named financial experts, with content that often closely paralleled competitor pages without substantive differentiation.

Following Google's March 2023 core update, Forbes Advisor's organic visibility dropped approximately 20% according to Sistrix visibility data. The pattern is consistent with Google's documented approach of applying sitewide quality signals: high-quality individual articles can be affected by the lower quality of the content around them.

Wirecutter's Methodology Documentation and Authority: The New York Times' Wirecutter review site (acquired in 2016 for $30 million) is frequently cited as the model for E-E-A-T implementation in product reviews. Wirecutter's testing methodology is documented in explicit detail on each product category page: the number of products tested, the specific tests conducted, the duration of testing, the criteria applied, and the expertise of the testers.

This methodology documentation serves a dual purpose - it demonstrates expertise and experience to both human readers and quality evaluation systems. Wirecutter's organic search visibility remained stable through multiple core updates that significantly affected review sites, which SEO industry analysts have attributed to their systematic E-E-A-T signals.

An Ahrefs analysis of Wirecutter's link profile found that over 60% of their inbound links came from authoritative publishing domains (major news outlets, consumer organizations, government agencies) - the kind of external authority recognition that the Authoritativeness dimension of E-E-A-T is designed to measure.

The "Thin Content" Penalty Recovery of a Financial Comparison Site: SEO consultant Marie Haynes published a detailed case study in 2022 of a financial comparison client that had lost 70% of organic traffic following successive core updates. The site's content followed a template-driven format: comparison tables with pricing data but minimal explanatory content, no author attribution, and no content addressing why the comparisons mattered to the reader's specific situation.

The recovery involved three components: adding named financial editors with verifiable credentials, substantively expanding the explanatory content around each comparison, and publishing the editorial methodology for how comparisons were constructed. Traffic recovery took eight months, with a 60% recovery relative to pre-penalty levels by the time Haynes published the case study.

The case illustrates both the severity of quality-related penalties and the extended timescale of recovery - algorithm quality assessments update gradually as content improves.

Key Content Quality Metrics That Reflect E-E-A-T Signals

Measuring content quality is inherently harder than measuring technical performance, but several metrics provide useful proxies.

Referring Domain Growth from Editorially-Relevant Sources: The most valuable backlinks for E-E-A-T are those from authoritative sources in the same topical domain. Ahrefs' "Site Explorer" and Semrush's "Backlink Analytics" tools enable filtering referring domains by topical relevance and domain authority.

A content site that earns 50 new referring domains per month from domain-relevant authoritative sources is building Authoritativeness at a rate that competes effectively in most verticals. A site earning 50 referring domains per month from general directories provides minimal E-E-A-T benefit. The metric to track is not total referring domain count but the proportion from topically relevant, high-authority sources.
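The proportion described above is straightforward to compute once referring domains have been labeled. This sketch assumes each domain is a dict with a hand-assigned `topic` label and an `authority` score on a 0-100 scale, as third-party tools commonly report; the field names and the cutoff of 60 are assumptions for illustration.

```python
def relevant_authority_share(domains, relevant_topics, min_authority=60):
    """Fraction of referring domains that are both on-topic and high-authority."""
    if not domains:
        return 0.0
    qualifying = [
        d for d in domains
        if d["topic"] in relevant_topics and d["authority"] >= min_authority
    ]
    return len(qualifying) / len(domains)


if __name__ == "__main__":
    # Hypothetical month of new referring domains for a personal finance site.
    new_domains = [
        {"domain": "bank.example", "topic": "finance", "authority": 82},
        {"domain": "news.example", "topic": "news", "authority": 90},
        {"domain": "directory.example", "topic": "directory", "authority": 20},
        {"domain": "smallblog.example", "topic": "finance", "authority": 15},
    ]
    share = relevant_authority_share(new_domains, {"finance"})
    print(f"{share:.0%} of new referring domains are relevant and authoritative")
```

Tracking this share month over month, rather than the raw count, keeps the focus on the kind of links the Authoritativeness dimension is about.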

"People Also Ask" Appearance Rate: Pages that appear in "People Also Ask" boxes are being assessed by Google as authoritative answers to specific sub-questions within a topic. Tracking which pages appear in PAA boxes, and for which questions, provides direct evidence of which content Google considers authoritative.

Tools like Ahrefs and Semrush track PAA appearances at scale. A content site appearing in PAA boxes for 30%+ of its primary topic queries is receiving strong comprehensiveness signals from Google's ranking systems.

Average Position for Branded + Topic Queries: When users search for "[your brand] + [topic]" (for example, "Healthline blood pressure"), they are demonstrating brand recognition within a topic category - an indicator of Authoritativeness. Tracking branded + topic query performance in Search Console and monitoring its growth over time measures whether the site is accumulating the kind of topical authority recognition that feeds E-E-A-T signals.

Growth in this metric typically precedes growth in non-branded topic rankings as authority builds.
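One way to track this, sketched below, is to filter a Search Console query export down to queries that contain both the brand name and a topic term, then compute an impression-weighted average position. The row layout (`query`, `impressions`, `position`) mirrors a typical performance export but is an assumption here, as are the sample figures.

```python
def branded_topic_position(rows, brand, topic_terms):
    """Impression-weighted average position for queries containing brand + topic."""
    brand = brand.lower()
    terms = [t.lower() for t in topic_terms]
    matched = [
        r for r in rows
        if brand in r["query"].lower()
        and any(t in r["query"].lower() for t in terms)
    ]
    impressions = sum(r["impressions"] for r in matched)
    if impressions == 0:
        return None  # no branded + topic queries in this export
    return sum(r["position"] * r["impressions"] for r in matched) / impressions


# Illustrative rows only.
rows = [
    {"query": "healthline blood pressure", "impressions": 300, "position": 1.0},
    {"query": "healthline hypertension diet", "impressions": 100, "position": 3.0},
    {"query": "blood pressure chart", "impressions": 1000, "position": 8.0},
    {"query": "healthline login", "impressions": 500, "position": 1.0},
]

avg = branded_topic_position(rows, "healthline", ["blood pressure", "hypertension"])
print(f"Average position for branded + topic queries: {avg}")
```

Weighting by impressions keeps rarely searched variants from dominating the average. Running the same calculation on successive monthly exports shows the trend.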

Content Update Frequency for High-Value Pages: Google's systems assess content freshness as part of quality evaluation for time-sensitive topics. Tracking whether high-value pages are being updated when the underlying information changes - new regulations, updated research, product changes - and measuring the correlation between updates and ranking changes provides evidence of the freshness signal's impact.

The useful benchmark from Backlinko's research: for evergreen content in competitive queries, pages that had been updated within the previous 12 months ranked an average of 1.4 positions higher than equivalent pages that had not been updated, controlling for domain authority.
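A simple way to start measuring the relationship between updates and ranking changes on your own site is to compare the average position change of updated pages against pages left untouched over the same period. The sketch below assumes a hypothetical list of page records with before and after positions. It shows a difference between groups, not causation, and does not control for domain authority or query mix.

```python
from statistics import mean


def mean_position_gain(pages, updated):
    """Average positions gained (positive = moved up) for one group of pages."""
    gains = [
        p["position_before"] - p["position_after"]
        for p in pages
        if p["updated"] is updated
    ]
    return mean(gains) if gains else None


# Illustrative data only.
pages = [
    {"url": "/guide-a", "updated": True, "position_before": 8.0, "position_after": 6.0},
    {"url": "/guide-b", "updated": True, "position_before": 5.0, "position_after": 4.0},
    {"url": "/guide-c", "updated": False, "position_before": 7.0, "position_after": 7.5},
    {"url": "/guide-d", "updated": False, "position_before": 3.0, "position_after": 3.5},
]

updated_gain = mean_position_gain(pages, updated=True)
stale_gain = mean_position_gain(pages, updated=False)
print(f"Updated pages: {updated_gain:+.1f} positions, untouched pages: {stale_gain:+.1f}")
```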

References

- Google. "Search Quality Rater Guidelines." static.googleusercontent.com. https://static.googleusercontent.com/media/guidelines.raterhub.com/en//searchqualityevaluatorguidelines.pdf

- Google Search Central. "Creating Helpful, Reliable, People-First Content." developers.google.com. https://developers.google.com/search/docs/fundamentals/creating-helpful-content

- Google Search Central. "What Site Owners Should Know About Google's Core Updates." developers.google.com. https://developers.google.com/search/blog/2019/08/core-updates

- Ahrefs. "E-E-A-T: What It Is and How to Demonstrate It for SEO." ahrefs.com. https://ahrefs.com/blog/eat-seo/

- Moz. "What Is E-E-A-T and Why Does It Matter for SEO?" moz.com. https://moz.com/blog/google-e-e-a-t

- Search Engine Journal. "Google E-E-A-T: What It Is and How to Demonstrate It." searchenginejournal.com. https://www.searchenginejournal.com/google-eat/

- Healthline Media. "About Healthline: Expert Knowledge You Can Trust." healthline.com. https://www.healthline.com/about

- NerdWallet. "Our Story and Editorial Approach." nerdwallet.com. https://www.nerdwallet.com/blog/corporate-news/

- Schema.org. "Schema.org - Schema.org." schema.org. https://schema.org/

- Google. "Core Web Vitals." web.dev. https://web.dev/vitals/

- Roth, Danny. "More details about the August 2018 core algorithm update." Google Search Central Blog, 2018. https://developers.google.com/search/blog/2018/08/core-algorithm-update

Frequently Asked Questions

What is E-E-A-T and why does it matter for content quality?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness - Google's framework for evaluating content quality, particularly for topics that can impact users' health, finances, safety, or major life decisions (called YMYL, or Your Money or Your Life, topics).

Experience (added in 2022): Does the content creator have genuine first-hand experience with the topic? This is especially important for product reviews, how-to guides, and practical advice. A restaurant review from someone who ate there carries more weight than one written by someone who never visited. A software tutorial from someone who uses the tool daily is more valuable than one from someone who only read the documentation.

Expertise: Does the creator have formal qualifications, training, or demonstrated knowledge in the topic? This matters for topics requiring specialized knowledge: medical advice from doctors, financial guidance from certified planners, legal information from attorneys, technical content from experienced practitioners.

Authoritativeness: Is the creator or site recognized as a go-to source for this topic? Authority comes from recognition by peers and industry; citations and mentions from other authoritative sources; awards, credentials, or professional standing; and a track record of accurate, valuable content.

Trustworthiness: Can users trust the information and the site providing it? Trust is built through accurate, well-researched information; transparent sourcing and citations; clear author attribution; a secure website (HTTPS); a privacy policy and terms of service; the absence of deceptive practices; and a positive reputation online.

Why E-E-A-T matters: Search engines want to surface content that helps users and doesn't harm them. Low-quality health advice can endanger people. Poor financial guidance can cause economic harm. Inaccurate information on important topics erodes trust in search results. E-E-A-T helps search engines identify content from credible sources.

How Google evaluates E-E-A-T: Google uses human quality raters who evaluate search results using detailed guidelines (the publicly available Search Quality Rater Guidelines). Raters don't directly affect rankings; their assessments are used to evaluate, and help refine, the ranking systems. The algorithms look for signals that correlate with high E-E-A-T: author credentials mentioned on pages or in author bios, external validation (links and mentions from authoritative sources), content depth and accuracy, and site reputation and history.

E-E-A-T by topic type:

YMYL topics (health, finance, legal, safety): Very high E-E-A-T standards. Content should come from qualified professionals, with clear sourcing and citations, because misinformation can cause real harm.

Expertise topics (technical, professional, academic): High standards, but expertise demonstrated through experience and track record can substitute for formal credentials.

General topics (entertainment, hobbies, general interest): Lower E-E-A-T requirements. Passion, experience, and genuine insight matter more than credentials. Recipe bloggers and hobby writers can rank well if they provide value.

Building E-E-A-T:

For Experience: Share first-hand stories, photos, and details that only someone with real experience would have. Include specifics (dates, locations, model numbers, personal observations) that demonstrate you've actually done what you're describing. Create content based on your genuine experiences, not just research.

For Expertise: Display credentials, certifications, and qualifications prominently. Create comprehensive, technically accurate content that demonstrates deep knowledge. Cite authoritative sources and research. Participate in your professional community (speaking, publishing, awards).

For Authoritativeness: Earn backlinks from other authoritative sites in your field. Get mentioned, cited, or featured by industry publications. Build relationships with other authorities. Publish consistently and build a reputation over time.

For Trustworthiness: Be accurate - fact-check and correct errors promptly. Cite sources and be transparent about where information comes from. Display clear contact information and author bios. Use HTTPS and display trust signals (privacy policy, secure checkout, and so on). Build positive reviews and testimonials. Maintain editorial standards.

The reality: E-E-A-T isn't a direct ranking factor you can "optimize." It's a framework for quality that search algorithms approximate through hundreds of signals. You can't fake E-E-A-T; you build it through genuine expertise, experience, and trust over time. For competitive topics, especially YMYL, strong E-E-A-T is table stakes. For less competitive or non-YMYL topics, you can rank with lower E-E-A-T if your content is valuable and comprehensive.

What content depth and comprehensiveness signals indicate quality?

Search engines evaluate whether content thoroughly covers a topic or provides only surface-level information.

Depth signals:

1) Topic coverage completeness: Does the content answer the primary question and related sub-questions? For example, an article about "how to make bread" should cover ingredients, equipment, step-by-step process, timing, troubleshooting common problems, variations, and storage - not just list ingredients. Search engines can detect topic coverage by identifying whether related concepts, entities, and questions are addressed.

2) Content length (as a proxy, not a goal): Longer content often correlates with comprehensiveness, but length alone isn't the goal. A 3,000-word comprehensive guide is better than a 500-word overview for complex topics. A 500-word guide is better than a 3,000-word fluff piece for simple topics. Write as long as necessary to cover the topic thoroughly - no longer, no shorter.

3) Semantic richness: Does the content use varied, sophisticated vocabulary related to the topic? This signals expertise and depth. For example, medical content using precise terminology (alongside plain-language explanations) signals expert knowledge. Content using only basic vocabulary might signal surface-level understanding.

4) Structured information hierarchy: Clear headings, subheadings, and logical flow indicate organized, comprehensive coverage. Pages with flat structure (no headings, wall of text) signal low effort. Search engines use heading tags (H1-H6) to understand content structure and topic coverage.

5) Supporting elements: Does the content include diagrams, images, videos, charts, or other media that enhance understanding? Multimedia elements often indicate higher effort and more comprehensive coverage. Original images and diagrams signal genuine expertise and effort.

6) Examples and case studies: Concrete examples demonstrate practical application and deeper understanding. Abstract theory without examples suggests surface knowledge. Multiple, varied examples signal comprehensive understanding.

Comprehensiveness signals:

1) Subtopic coverage: Does the content address the main facets of a topic? Search engines can identify whether key subtopics are mentioned. For example, content about "SEO" should cover technical SEO, on-page optimization, content strategy, link building, and measurement. Missing major subtopics signals incomplete coverage.

2) Related questions addressed: Does the content answer common related questions users have? This is why "People Also Ask" and FAQ sections are valuable. Addressing related questions signals comprehensive coverage of user needs.

3) Context and background: Does the content provide the context needed for understanding, such as foundational concepts, definitions, history, or prerequisites? Content that assumes too much knowledge or provides no context is less comprehensive.

4) Nuance and caveats: Does the content acknowledge complexity, tradeoffs, and exceptions? Oversimplified content that presents everything as black-and-white suggests shallow understanding. Expert content acknowledges nuance: "This usually works, but in these cases...", "The best approach depends on...", "There are tradeoffs between..."

5) Alternative perspectives: Does the content present different approaches or viewpoints? Acknowledging that multiple valid approaches exist signals comprehensive understanding. Presenting only one narrow perspective suggests incomplete coverage.

6) Updates and freshness: Is the content current and updated as the topic evolves? Outdated content signals lack of maintenance and commitment to quality. Regular updates signal ongoing expertise and comprehensive coverage of evolving topics.

How search engines detect depth and comprehensiveness:

Natural language processing (NLP): Algorithms understand topics, entities, and relationships. They can identify whether related concepts are covered.

Topic modeling: Machine learning identifies the expected subtopics for a query and checks whether content addresses them.

User behavior: If users quickly return to search after visiting a page (high bounce rate, low time on page), it signals the content didn't comprehensively answer their question. If users stay and don't return to search, it signals comprehensive, satisfying content.

Comparative analysis: Search engines compare content across top-ranking pages to identify common elements. Content missing elements present in most top results may be seen as less comprehensive.

Practical optimization: Research the topic thoroughly before writing. Use "People Also Ask," related searches, and competitor analysis to identify subtopics. Outline before writing to ensure comprehensive coverage, mapping out all major subtopics and related questions. Include specific details and examples that demonstrate deep knowledge. Address common objections and questions users might have. Use clear structure (headings, sections) to organize comprehensive content logically. Update content when new information or approaches emerge. Link to authoritative sources to support claims and provide additional depth. Add multimedia elements (images, videos, diagrams) where they enhance understanding.

The balance: Comprehensive doesn't mean exhaustive to the point of overwhelming. Focus on what's useful for your target audience. Cover the topic thoroughly for that audience's needs, even if that means not covering every edge case or advanced detail. Depth appropriate for beginners differs from depth appropriate for experts. Match depth to your audience while ensuring you fully address their questions.
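The outlining step described above can be partly automated. The following is a minimal sketch, assuming a hand-built list of expected subtopics (for example, gathered from "People Also Ask" and competitor outlines) and a draft's plain text. It uses literal phrase matching, which is a crude stand-in for the NLP and topic modeling that search engines are described as using, and is meant only as a drafting checklist.

```python
import re


def subtopic_coverage(text, subtopics):
    """Split expected subtopics into those mentioned in the text and those missing."""
    normalized = re.sub(r"\s+", " ", text.lower())
    covered = [s for s in subtopics if s.lower() in normalized]
    missing = [s for s in subtopics if s.lower() not in normalized]
    return covered, missing


# Illustrative draft and subtopic list.
draft = (
    "This guide lists the ingredients and equipment you need, then walks "
    "through kneading and baking. Storage tips are at the end."
)
expected = ["ingredients", "equipment", "troubleshooting", "storage"]

covered, missing = subtopic_coverage(draft, expected)
print("Covered:", covered)
print("Missing:", missing)
```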

How do user engagement metrics signal content quality?

While Google doesn't directly use specific engagement metrics as ranking factors, user behavior patterns strongly signal content quality and user satisfaction.

Key engagement signals:

1) Click-through rate (CTR) from search results: What percentage of users who see your page in search results click on it? A high CTR signals compelling titles and meta descriptions (suggesting relevance and quality positioning), brand recognition and trust, and an expectation that the content will satisfy the query. How it affects rankings: High CTR suggests users find your result appealing and relevant. Over time, if your result consistently gets higher CTR than others at your position, you may rank higher. Low CTR suggests your title or description doesn't match user intent or your positioning is weak. Note: CTR varies by position - #1 naturally has higher CTR than #5 - so search engines consider CTR relative to position.

2) Dwell time / time on page: How long do users spend on your page before returning to search or navigating elsewhere? Short dwell time (seconds): the user didn't find what they needed, the content didn't match their expectation, or the page had technical issues. Medium dwell time (1-3 minutes): the user might have found a quick answer, or the content was thin. Long dwell time (5+ minutes): the user is engaging with comprehensive content, suggesting it's valuable and relevant. Caveat: Very short dwell time isn't always bad. If a user's query is simple ("What year did X happen?") and your content provides an immediate, clear answer, short dwell time is appropriate. Search engines distinguish between "found answer quickly" and "page was useless."

3) Bounce rate vs return to search: Did the user leave your site without exploring further (bounce), and did they return to search results? Good scenario: The user bounces but doesn't return to search. They got their answer and are done, or they bookmarked or shared your page. Bad scenario: The user quickly returns to search and clicks a different result (pogo-sticking). This strongly signals your page didn't satisfy their query.

4) Pages per session / navigation depth: Do users explore other pages on your site, or leave immediately? High pages per session: users find your content valuable enough to explore more, and your site has good internal linking and related content. Low pages per session: not necessarily bad if the single page fully satisfied their query, but combined with a quick return to search, it signals dissatisfaction.

5) Repeat visits: Do users return to your site over time? Repeat visits indicate that the content is valuable enough to warrant returning, that the site is bookmarked or remembered as a quality resource, and that brand trust is established.

6) Social sharing and external links: Do users share your content or link to it from other sites? This signals that the content is valuable enough that users want others to see it, and authoritative enough to cite as a source. While social shares aren't direct ranking factors, they amplify reach, increasing the likelihood of earning backlinks (which do affect rankings).

How search engines use engagement signals:

Not directly as ranking factors: Google has stated that it doesn't use specific analytics metrics (bounce rate, time on page from Google Analytics) directly. These would be easy to manipulate and unreliable.

Indirectly through patterns: Search engines have their own click data from search results. They can see which results users click, how long it takes before users return to search, and which result ultimately satisfies the query (users don't return after clicking it). These patterns, aggregated across thousands of users, strongly signal quality and relevance.

Machine learning models: User behavior data helps train ranking algorithms to recognize patterns associated with high-quality, satisfying content.

Experimentation and testing: Search engines constantly test result rankings and observe user behavior to validate ranking decisions.

Optimizing for engagement:

1) Match user intent precisely: If your content doesn't answer what users are actually looking for, engagement will be poor. Study the query, understand intent (informational, navigational, transactional), and deliver exactly what's expected.

2) Deliver value immediately: Don't bury key information. Users should quickly see that your content addresses their query. Use clear headings, summaries, or jump links for long content.

3) Make content scannable: Use headings, bullet points, bold key terms, short paragraphs, and white space. Users scan before reading deeply, so make it easy to find relevant sections.

4) Improve page speed: Slow load times cause users to abandon before engaging. Core Web Vitals directly affect first impressions.

5) Create internal link opportunities: If users found one piece of content valuable, guide them to related content. This increases pages per session and signals your site as a comprehensive resource.

6) Write compelling titles and descriptions: Accurate, benefit-focused titles and descriptions improve CTR. But don't clickbait - mismatched expectations harm engagement once users land on your page.

7) Reduce friction: Avoid intrusive interstitials (pop-ups) that block content. Minimize ads that interfere with reading. Ensure mobile usability. Make text readable (sufficient font size, contrast, line spacing).

The virtuous cycle: Good engagement signals quality → Rankings improve → More traffic → More engagement data → Further validation of quality. Poor engagement signals problems → Rankings decline → Less traffic → Less opportunity to improve.

Focus on genuine user satisfaction, not gaming metrics. Create content that truly helps users, and engagement naturally follows. Search engines are getting better at distinguishing genuine quality from manipulation.
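Because CTR only means something relative to position, one practical check is to compare each page's CTR against the typical CTR of your own pages at the same rounded position. The sketch below assumes a Search Console-style export with `page`, `clicks`, `impressions`, and `position` fields. The median baseline and the 0.5 ratio are illustrative choices, not an industry CTR curve.

```python
from collections import defaultdict
from statistics import median


def underperforming_pages(rows, ratio=0.5):
    """Pages whose CTR is below `ratio` times the median CTR at their position."""
    rows = [r for r in rows if r["impressions"] > 0]
    ctr_by_position = defaultdict(list)
    for r in rows:
        ctr_by_position[round(r["position"])].append(r["clicks"] / r["impressions"])
    baseline = {pos: median(ctrs) for pos, ctrs in ctr_by_position.items()}
    return [
        r["page"]
        for r in rows
        if r["clicks"] / r["impressions"] < ratio * baseline[round(r["position"])]
    ]


# Illustrative rows only.
rows = [
    {"page": "/a", "clicks": 100, "impressions": 1000, "position": 3.2},
    {"page": "/b", "clicks": 120, "impressions": 1000, "position": 2.8},
    {"page": "/c", "clicks": 20, "impressions": 1000, "position": 3.1},
    {"page": "/d", "clicks": 300, "impressions": 1000, "position": 1.1},
]

flagged = underperforming_pages(rows)
print("Review titles and descriptions for:", flagged)
```

Pages flagged this way are candidates for a title and description review, since they attract fewer clicks than comparable pages at the same rank.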

What technical quality signals do search engines evaluate?

Beyond content, technical implementation signals quality, professionalism, and user experience.

Core technical quality signals:

1) Page speed and Core Web Vitals: As covered in dedicated articles, these metrics (LCP, CLS, and INP, which replaced FID in March 2024) affect both rankings and user experience. Fast, responsive, stable pages signal quality and investment in user experience. Slow, janky pages signal low quality or neglect.

2) Mobile-friendliness: With mobile-first indexing, mobile experience is critical. Signals evaluated: responsive design that adapts to screen sizes; readable text without zooming; adequate tap target sizes (buttons, links); no horizontal scrolling required; content not hidden behind mobile-unfriendly elements; fast mobile load times. Tools: Lighthouse and browser device emulation (Google retired its Mobile-Friendly Test and Search Console's Mobile Usability report in December 2023).

3) HTTPS (secure connection): Sites using HTTPS (encrypted connections) signal security and trustworthiness. HTTPS has been a ranking factor since 2014. Non-HTTPS sites show "Not Secure" warnings in browsers, harming trust. Modern sites must use HTTPS; it's table stakes, not optional.

4) Site architecture and navigation: Clear, logical structure signals professionalism and user focus. Quality signals: shallow hierarchy (most pages reachable in 3-4 clicks from the homepage); logical URL structure (/category/subcategory/page-name); clear navigation menus and breadcrumbs; internal linking connecting related content; no orphaned pages (pages with zero internal links). Poor signals: overly deep hierarchies requiring many clicks to reach content; chaotic URL structures with parameters and session IDs; broken internal links; duplicate or near-duplicate URL variations.

5) Clean, valid HTML/CSS: While minor HTML errors don't kill rankings, severe problems signal low quality. Quality signals: valid HTML structure; proper use of semantic HTML (heading tags, lists, tables, and so on); clean code without excessive inline styles or scripts; proper DOCTYPE declaration. Red flags: broken HTML that prevents proper rendering; malformed structured data; excessive validation errors suggesting automated or low-quality content generation.

6) Proper use of structured data: Schema markup helps search engines understand content types and context. Quality signals: accurate, complete structured data for content types (articles, products, recipes, events, FAQs, and so on); proper implementation without errors; structured data that matches visible content. Benefits: enables rich results (star ratings, FAQs, recipe cards, and so on) in search; signals semantic understanding and effort; can improve CTR even if not directly affecting rankings. Tools: Google's Rich Results Test, Schema Markup Validator.

7) Accessibility: While not explicitly confirmed as a ranking factor, accessibility correlates with quality and usability. Quality signals: proper heading hierarchy (H1-H6 used correctly); alt text on images; sufficient color contrast; keyboard navigation support; ARIA labels where appropriate; accessible forms and interactive elements. Benefits: better user experience for all users, not just those using assistive technologies; signals attention to detail and inclusive design; often correlates with better overall UX.

8) No intrusive elements: Google explicitly penalizes intrusive interstitials (certain types of pop-ups). Quality signals: no pop-ups that cover main content immediately on mobile; no interstitials that are difficult to dismiss; ads that don't interfere with content consumption; reasonable ad density (not overwhelming content with ads). Red flags: full-screen pop-ups on mobile arrival; countdown timers before allowing content access; deceptive ads that look like content or navigation; pages where ads dominate over content.

9) Security and trustworthiness indicators: Beyond HTTPS, other signals indicate site trustworthiness. Quality signals: clear contact information; privacy policy and terms of service; about page with author/company information; professional design and branding; no malware or phishing attempts; positive reputation (reviews, mentions, and so on). Red flags: contact information missing or hidden behind obscure paths; deceptive practices (misleading titles, cloaking, sneaky redirects); malware detection; spammy ads or affiliate schemes.

10) Content freshness and maintenance: Technical staleness signals neglect. Quality signals: recently updated content (visible update dates); no broken links or outdated references; functional site features (no broken search, forms, and so on); regular publishing schedule. Red flags: many broken links (404s); outdated copyright dates (© 2010 in 2024); non-functional features; content referencing outdated information ("In 2015..." written in 2023 without updates).

How to audit technical quality:

Run automated audits: Google PageSpeed Insights, Lighthouse, Search Console. Tools like Screaming Frog and Sitebulb provide comprehensive crawling.

Check Search Console reports: Page indexing (indexing issues, formerly the Coverage report). Core Web Vitals (performance). Security Issues (malware, hacking).

Manual review: Browse your site on mobile devices. Test forms, search, and interactive features. Check for broken links and images. Review on slow connections to assess real-world performance.

Competitive benchmarking: Compare your technical implementation to top-ranking competitors. Identify gaps where competitors have stronger technical foundations.

The foundation: Technical quality signals professionalism and user focus. It won't make up for poor content, but it's necessary for competitive rankings. Think of technical quality as table stakes - necessary but not sufficient. Focus on fundamentals first (speed, mobile-friendliness, security, structure), then refine advanced elements (structured data, accessibility, rich features).
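To make the structured data point concrete, the sketch below builds schema.org Article markup as JSON-LD, including the author details that support E-E-A-T. The property names (`headline`, `author`, `jobTitle`, `datePublished`, `dateModified`) are standard schema.org vocabulary; the helper function and all sample values are hypothetical, and the values must match what is visibly shown on the page.

```python
import json


def article_jsonld(headline, author_name, author_title, author_url, published, modified):
    """Return schema.org Article markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
            "url": author_url,  # link to the author's bio page
        },
        "datePublished": published,
        "dateModified": modified,
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration.
markup = article_jsonld(
    headline="Understanding Blood Pressure Readings",
    author_name="Dr. Jane Example",
    author_title="Cardiologist",
    author_url="https://www.example.com/authors/jane-example",
    published="2024-01-15",
    modified="2024-06-01",
)

# Embed the result in the page head inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generated markup should still be checked with the Rich Results Test or Schema Markup Validator before deployment.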

How can you systematically improve content quality signals?

Improving quality signals requires a strategic, ongoing approach across content, technical, and trust dimensions.

Content quality improvement framework:

1) Audit existing content: Use tools and analytics to identify low-traffic pages (may lack quality or relevance), high-bounce-rate pages (users aren't finding value), thin content (under 500 words without justification), outdated content (old dates, outdated information), and missing E-E-A-T signals (no author, no credentials, no sources). Prioritize pages with traffic potential but current quality issues.

2) Research user intent deeply: For target topics, analyze top-ranking competitors (what do they cover?), "People Also Ask" and related searches (what questions do users have?), forum discussions and social media (what problems do people actually face?), and keyword variations (different ways users search for the topic). Use this research to ensure comprehensive topic coverage.

3) Enhance depth and comprehensiveness: Add sections addressing common related questions. Include examples, case studies, and practical applications. Add visual elements (diagrams, screenshots, videos) where helpful. Cite authoritative sources and research. Address objections and alternative perspectives. Structure with clear headings for scannability.

4) Build E-E-A-T signals: Add or improve author bios with credentials and experience. Include bylines on articles (who wrote this?). Cite sources and link to authoritative references. Display relevant certifications, awards, or recognition. Get content reviewed by subject matter experts if you lack credentials. Earn backlinks and mentions from authoritative sites.

5) Optimize for user engagement: Front-load value (don't bury key information). Break up long text with headings, bullets, and images. Add a table of contents for long articles. Improve page speed for better first impressions. Include clear calls to action or next steps. Add internal links to related, valuable content.

Technical quality improvement:

1) Fix Core Web Vitals issues: Optimize images (compression, modern formats, lazy loading). Minimize and defer JavaScript. Implement caching and a CDN. Stabilize layout (set image dimensions, reserve space for ads).

2) Ensure mobile excellence: Test on actual mobile devices, not just emulators. Fix tap target sizes, font sizes, and viewport issues. Ensure content is accessible without horizontal scrolling.

3) Implement structured data: Add appropriate schema markup (articles, products, FAQs, how-tos, recipes, and so on). Test implementation with Google's Rich Results Test. Monitor Search Console for structured data errors.

4) Improve site architecture: Flatten hierarchy where possible. Ensure all important pages have internal links. Implement breadcrumbs. Fix orphaned pages. Clean up URL structure (avoid parameters when possible).

5) Address technical errors: Fix broken links (404s). Resolve server errors (500s). Eliminate redirect chains. Ensure proper canonicalization. Fix indexing issues (robots.txt, noindex tags, and so on).

Trust and authority building:

1) Build quality backlinks: Create linkable assets (comprehensive guides, original research, tools, infographics). Reach out to relevant sites for guest posting or collaboration. Get featured in industry publications. Engage in digital PR (press releases, expert quotes, HARO). Build relationships with influencers and authorities in your space.

2) Establish brand presence: Consistent branding across channels. Active social media presence (even if not for direct SEO value). Speaking engagements, podcasts, webinars. Awards or recognition programs.

3) Display trust signals: Customer testimonials and reviews. Security badges and certifications. Clear privacy policy and terms. Professional design and branding. Transparent contact information and about page.

4) Monitor and protect reputation: Google your brand regularly. Respond to reviews (positive and negative). Address negative content or misinformation. Build enough positive content and mentions to outweigh negatives.

Measurement and iteration:

1) Set baseline metrics: Current rankings for target keywords. Organic traffic levels. Engagement metrics (bounce rate, time on page, pages per session). Backlink profile (quantity and quality). Core Web Vitals scores.

2) Track improvements over time: Monitor rankings (weekly or monthly). Track traffic changes (segmented by landing page). Analyze engagement trends. Watch for new backlinks and mentions. Check Search Console for indexing issues.

3) A/B test when possible: For changes to templates or systematic improvements, test impact. Compare performance of improved content against content left unchanged. Iterate based on data.

4) Conduct regular content audits: Quarterly or twice a year, review what's ranking and driving traffic, what's stagnant or declining, what needs updates or expansion, and what should be consolidated or removed.

The ongoing nature of quality: Content quality isn't a one-time optimization. It requires continuous content updates as topics evolve, technical maintenance to prevent degradation, ongoing link building and authority development, regular audits to identify new opportunities and problems, and adaptation to algorithm changes and user behavior shifts.

The sites that consistently rank well are those that systematically invest in quality across all dimensions - content, technical, and trust - over time. Quick wins exist (fixing what is broken, optimizing obvious issues), but sustained success requires treating quality improvement as ongoing strategic work, not a project with an end date.
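The content audit step in this framework lends itself to a simple script. The following is a minimal sketch, assuming a hypothetical page inventory with `word_count`, `last_updated`, and `author` fields (for example, exported from a CMS). The 500-word threshold comes from the audit criteria above; the 365-day staleness cutoff is an illustrative assumption that should vary by topic.

```python
from datetime import date


def audit_page(page, today, min_words=500, max_age_days=365):
    """Return a list of quality issues found for one page record."""
    issues = []
    if page["word_count"] < min_words:
        issues.append("thin content")
    if (today - page["last_updated"]).days > max_age_days:
        issues.append("outdated")
    if not page.get("author"):
        issues.append("missing author")
    return issues


# Illustrative inventory; a fixed date keeps the example reproducible.
today = date(2024, 6, 1)
inventory = [
    {"url": "/old-post", "word_count": 320,
     "last_updated": date(2022, 1, 10), "author": ""},
    {"url": "/pillar-guide", "word_count": 1800,
     "last_updated": date(2024, 3, 1), "author": "Dr. A. Example"},
]

report = {p["url"]: audit_page(p, today) for p in inventory}
for url, issues in report.items():
    print(url, "->", issues or "no issues flagged")
```

A report like this is a starting point for prioritization; pages with traffic potential and multiple flags are the natural first candidates for revision.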