In 2018, Google published an article titled 'Speed Is Now a Landing Page Factor for Google Search and Ads.' It was a declaration that the company was prepared to encode user experience directly into its ranking algorithm. But speed alone turned out to be too blunt an instrument. A page could be fast on a fibre connection and unusable on a mobile network; it could load quickly and then shift all its content around as ads appeared, making users tap the wrong link. Google needed a more precise framework. Core Web Vitals, introduced in 2020 and made a ranking signal in 2021, were the result: three carefully chosen metrics that capture distinct dimensions of how a page actually feels to use.

The significance extends well beyond SEO. Web performance has direct consequences for revenue. Research by Deloitte (2020) found that improving page speed by just 0.1 seconds resulted in an 8% increase in conversions for retail sites and a 10% increase for travel sites. Walmart found that every one-second improvement in page load time corresponded to a 2% increase in conversions. Akamai's research found that 100-millisecond delays in page load time reduce conversion rates by 7%. These numbers have driven a shift in how engineering teams think about performance -- not as a nice-to-have polish item but as a direct revenue driver.

This article explains what each Core Web Vital measures, why Google chose these specific metrics, how to measure and diagnose problems, and the specific technical interventions that move each score. It is aimed at developers, SEO professionals, and product managers who want to understand the metrics deeply enough to act on them rather than simply report on them.

'Performance is not just about speed. It is about making every user feel valued -- that their time is not being wasted and that the product works as it should.' -- Ilya Grigorik, Web Performance Engineer at Google, from 'High Performance Browser Networking' (2013)

Key Definitions

Largest Contentful Paint (LCP): A measurement of loading performance -- specifically, how long it takes for the largest image or text block visible in the viewport to fully render. Represents the point at which a user perceives a page as 'loaded.'

Interaction to Next Paint (INP): A measurement of overall interactivity responsiveness. INP observes the latency of all click, tap, and keyboard interactions over the entire page lifecycle and reports the worst-case (or near-worst-case) value.

Cumulative Layout Shift (CLS): A measurement of visual stability. CLS quantifies how much the visible page content shifts unexpectedly over the lifetime of the page, not only while it loads, expressed as a score derived from the size and distance of layout shifts.

Field Data vs. Lab Data: Field data (also called real-user monitoring or RUM) captures metrics from actual users visiting a site via Chrome. Lab data is generated in controlled conditions using tools like Lighthouse. Google uses field data for ranking; lab data is useful for debugging.

Chrome User Experience Report (CrUX): Google's dataset of real-world performance metrics collected from Chrome users who have opted in to usage statistics reporting and to syncing browsing history. This is the data source Google uses for Core Web Vitals ranking signals.

The History: Why Google Built Core Web Vitals

Web performance measurement has a long history. In 2007, Steve Souders published 'High Performance Web Sites,' which established the first systematic framework for diagnosing and improving frontend performance. His rules -- minimize HTTP requests, use a content delivery network, add an Expires header -- were grounded in the realities of the browser of that era.

By 2010, Google had begun experimenting with page speed as a ranking signal, initially in a limited way. The challenge was that 'page speed' could be measured in dozens of ways, and different measures led to different optimization strategies. A page might optimize for time-to-first-byte (TTFB) but remain visually blank for seconds. It might load all its CSS quickly but have large images that dominated the above-the-fold viewport.

The Core Web Vitals framework, developed by Google's Chrome team and announced in May 2020, represented a deliberate attempt to identify the smallest set of metrics that best captured user experience. The team, including performance researchers Annie Sullivan, Philip Walton, and Bryan McQuade, chose metrics based on three criteria: they had to be measurable in the field (not just in lab conditions), they had to be grounded in research on what users actually notice, and they had to be actionable -- something developers could actually improve.

The original three metrics (LCP, FID, CLS) became a Google ranking signal in June 2021 as part of the Page Experience update. In March 2024, FID was replaced by INP, a more comprehensive measure of interactivity that Google had been developing since 2022.

Largest Contentful Paint (LCP): Loading Performance

What It Measures

LCP marks the point in a page's loading timeline when the largest content element visible in the viewport has finished rendering. The element types LCP considers are: image elements, video poster images, elements with a background image, and block-level text elements.

The 'largest' is measured by rendered size -- the area of the element as it appears on screen, not its intrinsic size. An image that is 400x300 pixels but displays at 200x150 pixels is measured at the smaller size.
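The sizing rule can be expressed as a small calculation. This is an illustrative sketch only: the `Box` type and `lcpReportedArea` function are hypothetical names, and the model ignores clipping by the viewport, which also reduces the area a browser counts. It also assumes the documented behaviour that an upscaled image is credited with no more than its intrinsic area.

```typescript
// Width and height in CSS pixels.
interface Box {
  width: number;
  height: number;
}

// Area credited to an image when the LCP candidate is chosen: the rendered
// area, capped at the intrinsic area so upscaling does not inflate it.
// Simplification: clipping by the viewport is not modelled here.
function lcpReportedArea(intrinsic: Box, rendered: Box): number {
  const intrinsicArea = intrinsic.width * intrinsic.height;
  const renderedArea = rendered.width * rendered.height;
  return Math.min(intrinsicArea, renderedArea);
}

// The example from the text: a 400x300 image displayed at 200x150.
const downscaledArea = lcpReportedArea(
  { width: 400, height: 300 },
  { width: 200, height: 150 },
);
console.log(downscaledArea); // 30000
```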

Thresholds: Good = 2.5 seconds or less. Needs improvement = 2.5 to 4 seconds. Poor = above 4 seconds.

What Causes Poor LCP

Research by Patrick Meenan and other members of the Chrome team identified four primary causes of slow LCP:

Slow server response times: If the HTML document itself takes a long time to arrive (high TTFB), every subsequent resource load is delayed. TTFB above 600 milliseconds is a common LCP killer.

Render-blocking resources: CSS and synchronous JavaScript in the <head> delay the browser from parsing and rendering the HTML. A page with 5 render-blocking CSS files will not begin rendering until all 5 have downloaded and parsed.

Slow resource load times: If the LCP element is an image, its download time directly determines LCP. Large, unoptimized images (often hero images at 2-4MB) are a common culprit.

Client-side rendering: Pages that deliver an empty HTML shell and populate content via JavaScript require the browser to execute JavaScript before any content is visible. React, Angular, and Vue applications without server-side rendering or static generation frequently suffer from this pattern.

Improving LCP

The most impactful LCP improvements, ranked by typical impact:

Preload the LCP image: If the LCP element is an image that is discovered late in the parse (for example, set as a CSS background or loaded by JavaScript), a <link rel="preload"> directive tells the browser to start fetching it immediately instead of waiting until it is discovered, and adding fetchpriority="high" raises its priority relative to other requests. Google's own research identified missing LCP preloads as one of the most widespread fixable LCP problems.

Use a CDN: Serving resources from a CDN geographically close to users reduces latency. Cloudflare, Fastly, and AWS CloudFront all provide this capability. CDN usage alone can reduce TTFB by 200-500 milliseconds for global audiences.

Optimize images: WebP images are 25-35% smaller than equivalent JPEG images at comparable quality. AVIF is smaller still (roughly 50% smaller than JPEG) but has slightly less browser support. Next-generation formats combined with responsive images (srcset) can reduce LCP image payload from 2MB to under 200KB.

Eliminate render-blocking CSS: Moving critical styles inline and deferring non-critical CSS is consistently one of the highest-impact LCP interventions. Tools like Critical CSS automate the extraction of above-the-fold styles.
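Two of the interventions above, preloading the LCP image and serving responsive next-generation formats, come down to emitting the right markup. The sketch below generates that markup as strings, for example from a server-side template. Everything named here is an assumption made for illustration: the `HeroImage` shape, the helper functions, the `/img/hero` path, and the `<basePath>-<width>.webp` file naming scheme. The preload hint only pays off when the image is not already discoverable in the HTML.

```typescript
// Describes one hero image. File names are assumed to follow a hypothetical
// "<basePath>-<width>.webp" scheme, e.g. "/img/hero-960.webp".
interface HeroImage {
  basePath: string;
  widths: readonly number[]; // must be non-empty
  sizes: string;             // value for the sizes / imagesizes attribute
  alt: string;
  width: number;             // intrinsic pixel size, lets the browser reserve space
  height: number;
}

// Escape characters that would break out of a double-quoted HTML attribute.
function escapeAttr(value: string): string {
  return value.replace(/&/g, "&amp;").replace(/"/g, "&quot;").replace(/</g, "&lt;");
}

// Build a srcset value such as "/img/hero-480.webp 480w, /img/hero-960.webp 960w".
function webpSrcset(img: HeroImage): string {
  return img.widths.map((w) => `${img.basePath}-${w}.webp ${w}w`).join(", ");
}

// URL of the largest variant, used as the non-responsive fallback.
function largestVariant(img: HeroImage): string {
  return `${img.basePath}-${Math.max(...img.widths)}.webp`;
}

// Preload hint for the document <head>. Useful when the parser cannot find
// the image on its own (CSS background, JavaScript-inserted image).
function lcpPreloadTag(img: HeroImage): string {
  return (
    `<link rel="preload" as="image" href="${escapeAttr(largestVariant(img))}" ` +
    `imagesrcset="${escapeAttr(webpSrcset(img))}" ` +
    `imagesizes="${escapeAttr(img.sizes)}" fetchpriority="high">`
  );
}

// Responsive <img> for a hero image that lives in the markup.
function heroImgTag(img: HeroImage): string {
  return (
    `<img src="${escapeAttr(largestVariant(img))}" ` +
    `srcset="${escapeAttr(webpSrcset(img))}" sizes="${escapeAttr(img.sizes)}" ` +
    `alt="${escapeAttr(img.alt)}" width="${img.width}" height="${img.height}" ` +
    `fetchpriority="high">`
  );
}

const hero: HeroImage = {
  basePath: "/img/hero",
  widths: [480, 960],
  sizes: "100vw",
  alt: "Product hero",
  width: 960,
  height: 540,
};
console.log(lcpPreloadTag(hero));
console.log(heroImgTag(hero));
```

The `imagesrcset` and `imagesizes` values on the preload should match the `srcset` and `sizes` of the element that eventually displays the image, otherwise the browser may fetch one variant for the preload and another for the page.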

Interaction to Next Paint (INP): Interactivity

Why FID Was Replaced

First Input Delay (FID) measured only the input delay of the first user interaction on a page. This was a limitation. A page could have a fast FID (the first click was responsive) while being deeply sluggish for all subsequent interactions -- expanding an accordion, submitting a form, clicking navigation. Users experience the full lifecycle of interactions, not just the first one.

INP, developed by Google engineer Annie Sullivan and her team, was proposed in 2022 and became a Core Web Vital in March 2024. It measures the latency of all click, tap, and keyboard interactions observed during a page visit. The reported INP value is normally the highest observed interaction latency. To reduce noise from outliers on pages with many interactions, one of the highest values is ignored for every 50 interactions, so a single freak delay does not define the score of an otherwise responsive page.

Thresholds: Good = 200 milliseconds or less. Needs improvement = 200 to 500 milliseconds. Poor = above 500 milliseconds.
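How a single INP value is picked from many interactions can be sketched in a few lines. This is a simplified model, not Chrome's implementation: `estimateInp` is a hypothetical name, the input is assumed to be the full list of interaction latencies for one page visit, and the outlier rule assumed is the documented one of skipping one highest value per 50 interactions.

```typescript
// Estimate INP from the interaction latencies (in ms) seen during one visit.
// Assumed rule: skip one highest value per 50 interactions, then report the
// worst remaining latency. Returns undefined when there were no interactions.
function estimateInp(latenciesMs: readonly number[]): number | undefined {
  if (latenciesMs.length === 0) {
    return undefined;
  }
  const worstFirst = latenciesMs.slice().sort((a, b) => b - a);
  const skipped = Math.min(
    worstFirst.length - 1,
    Math.floor(latenciesMs.length / 50),
  );
  return worstFirst[skipped];
}

// Three interactions: too few to discard anything, so the worst one counts.
console.log(estimateInp([40, 320, 90])); // 320
```

With fewer than 50 interactions nothing is discarded, which is why one slow handler on a rarely used control can still decide the score for a visit.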

What Causes Poor INP

INP measures the time from when a user initiates an interaction to when the browser can visually update in response. Three phases contribute to this delay:

Input delay: The time between the user's input and the browser beginning to run the event handler. Long input delays are caused by long tasks on the main thread -- JavaScript work that monopolizes the main thread and cannot be interrupted.

Processing time: The time spent executing event handlers. Complex DOM manipulations, heavy data processing, or poorly optimized event handlers drive up processing time.

Presentation delay: The time between event handler completion and the next frame being painted. This phase is often caused by large layout recalculations triggered by DOM changes.
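The three phases can be derived from the timestamps that the browser's Event Timing entries expose. The sketch below is a minimal illustration: `InteractionTiming` is a hypothetical interface that mirrors only the four fields used, and `splitPhases` is a hypothetical helper. Note that browsers round `duration` coarsely, so the presentation delay computed this way is approximate.

```typescript
// Subset of the fields found on Event Timing entries, all in milliseconds.
interface InteractionTiming {
  startTime: number;       // when the user input occurred
  processingStart: number; // when event handlers began running
  processingEnd: number;   // when event handlers finished
  duration: number;        // from startTime until the next paint
}

interface InteractionPhases {
  inputDelay: number;
  processingTime: number;
  presentationDelay: number;
}

// Break one interaction into the three phases that add up to its latency.
function splitPhases(t: InteractionTiming): InteractionPhases {
  return {
    inputDelay: t.processingStart - t.startTime,
    processingTime: t.processingEnd - t.processingStart,
    // Clamped at zero because duration is rounded by the browser.
    presentationDelay: Math.max(0, t.startTime + t.duration - t.processingEnd),
  };
}

const phases = splitPhases({
  startTime: 1000,
  processingStart: 1120,
  processingEnd: 1200,
  duration: 280,
});
console.log(phases); // { inputDelay: 120, processingTime: 80, presentationDelay: 80 }
```

In this made-up example the largest share is input delay, which points at a long task that was already running when the user clicked rather than at the handler itself.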

Improving INP

Break up long tasks: The browser's main thread handles JavaScript execution, layout, painting, and user input. Long tasks (tasks taking more than 50 milliseconds) block all of this. Breaking long tasks into smaller chunks using setTimeout, requestIdleCallback, or the newer scheduler.yield() API gives the browser opportunities to respond to user input.

Minimize main-thread JavaScript: Offloading computation to Web Workers removes it from the main thread entirely. Background data processing, image manipulation, and complex calculations are good candidates for workers.

Defer non-critical JavaScript: Third-party scripts -- analytics, advertising, chat widgets, A/B testing tools -- are a frequent source of long tasks. Loading them with async or defer attributes, or using a script loading facade, reduces their impact on interactivity.

A 2023 audit by the HTTP Archive found that the median webpage loaded 21 third-party scripts. These scripts collectively contribute significantly to INP degradation, particularly on mobile devices where CPU performance is more limited.
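The first intervention above, breaking up long tasks, can be sketched as follows. This is one possible shape rather than a prescribed pattern: `toChunks`, `yieldToMain`, and `processInChunks` are hypothetical helper names, and the chunk size of 50 items is an arbitrary placeholder that would need tuning so each chunk stays well under the 50-millisecond long-task limit. The code uses `scheduler.yield()` when the runtime provides it and falls back to a zero-delay `setTimeout` otherwise.

```typescript
// Split a list into consecutive chunks of at most `size` items.
function toChunks<T>(items: readonly T[], size: number): T[][] {
  if (size < 1) {
    throw new RangeError("size must be at least 1");
  }
  const chunks: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

// Hand control back to the event loop so queued user input can be handled.
function yieldToMain(): Promise<void> {
  // scheduler.yield() is not available everywhere, so it is feature-detected.
  const sched = (globalThis as unknown as {
    scheduler?: { yield?: () => Promise<void> };
  }).scheduler;
  if (sched && typeof sched.yield === "function") {
    return sched.yield();
  }
  return new Promise<void>((resolve) => setTimeout(resolve, 0));
}

// Run `handle` for every item, yielding between chunks so that no single
// task occupies the main thread for long.
async function processInChunks<T>(
  items: readonly T[],
  handle: (item: T) => void,
  chunkSize = 50,
): Promise<void> {
  for (const chunk of toChunks(items, chunkSize)) {
    chunk.forEach((item) => handle(item));
    await yieldToMain();
  }
}

console.log(toChunks([1, 2, 3, 4, 5], 2)); // [ [ 1, 2 ], [ 3, 4 ], [ 5 ] ]
```

A call such as `processInChunks(rows, renderRow)` (both names hypothetical) would replace a single loop over `rows`. Chunking by item count is the simplest option; yielding whenever a time budget is exceeded is an alternative when per-item cost varies widely.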

Cumulative Layout Shift (CLS): Visual Stability

What It Measures

CLS quantifies how much page content moves unexpectedly while the page is open. The metric is calculated from 'layout shift records' -- each time a visible element changes position between frames, the browser records the shift's impact fraction (what portion of the viewport was affected) and distance fraction (how far the element moved, relative to the viewport) and multiplies the two to score that shift. Shifts are then grouped into 'session windows': bursts of shifts less than 1 second apart, capped at 5 seconds in total. CLS is the summed score of the largest session window.

A CLS score of 0 means no unexpected shifts occurred. A score of 0.1 could come, for example, from a single shift that affected 50% of the viewport and moved content by 20% of the viewport height (0.5 x 0.2 = 0.1).

Thresholds: Good = 0.1 or less. Needs improvement = 0.1 to 0.25. Poor = above 0.25.
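The CLS calculation can be made concrete with a short sketch. It assumes the published session-window rules (a window ends after a gap of 1 second or once it spans 5 seconds) and that shifts occurring right after user input are excluded. `ShiftEntry`, `shiftScore`, and `computeCls` are hypothetical names; `ShiftEntry` mirrors only the fields of a layout-shift entry that the calculation needs.

```typescript
// Subset of a layout-shift entry.
interface ShiftEntry {
  startTime: number;       // ms since navigation start
  value: number;           // impact fraction * distance fraction
  hadRecentInput: boolean; // shifts right after user input are expected
}

// Score of a single shift, e.g. half the viewport affected and content
// moved by 20% of the viewport height.
function shiftScore(impactFraction: number, distanceFraction: number): number {
  return impactFraction * distanceFraction;
}

// CLS is the largest session-window total. A window closes after a 1 s gap
// between shifts or once it spans 5 s. Entries must be in time order.
function computeCls(entries: readonly ShiftEntry[]): number {
  let best = 0;
  let windowTotal = 0;
  let windowStart = 0;
  let lastShift = 0;
  let windowOpen = false;
  for (const entry of entries) {
    if (entry.hadRecentInput) {
      continue; // user-initiated shifts do not count
    }
    const continues =
      windowOpen &&
      entry.startTime - lastShift < 1000 &&
      entry.startTime - windowStart < 5000;
    if (continues) {
      windowTotal += entry.value;
    } else {
      windowTotal = entry.value;
      windowStart = entry.startTime;
      windowOpen = true;
    }
    lastShift = entry.startTime;
    best = Math.max(best, windowTotal);
  }
  return best;
}

const sampleShifts: ShiftEntry[] = [
  { startTime: 100, value: 0.05, hadRecentInput: false },
  { startTime: 600, value: 0.04, hadRecentInput: false }, // same window
  { startTime: 3000, value: 0.06, hadRecentInput: false }, // new window
  { startTime: 3200, value: 0.2, hadRecentInput: true }, // ignored
];
console.log(computeCls(sampleShifts).toFixed(2)); // "0.09"
```

In the sample, the first two shifts fall in one window and total roughly 0.09, which beats the later window of 0.06, so the page would narrowly stay within the 'good' threshold.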

Common Causes and Fixes

Images without dimensions: Historically, the most widespread CLS cause. When an image loads and the browser does not know its dimensions, it renders at 0 height, then expands when the image loads -- pushing all subsequent content down. Fix: always specify width and height attributes on <img> elements, and use CSS aspect-ratio.

Ads and embeds: Third-party ad slots, social embeds (Twitter, YouTube), and iframes often inject content that displaces existing page content. Reserving space for these elements using CSS min-height or placeholder elements prevents layout shifts when the content loads.

Web fonts: Web fonts cause layout shifts when they load and cause text to reflow (a 'flash of unstyled text' followed by a reflow). font-display: optional prevents layout shift at the cost of potentially showing the system font on slow connections. The size-adjust descriptor and the metric override descriptors (ascent-override, descent-override, line-gap-override) can match fallback font metrics to the web font, reducing reflow magnitude.

Dynamically injected banners: Cookie consent notices, newsletter signup prompts, and sticky headers that appear after initial render are common sources of high CLS. Loading these elements as part of the initial render (reserving space) rather than injecting them after prevents shifts.

Measuring Core Web Vitals

Field Tools (Real User Data)

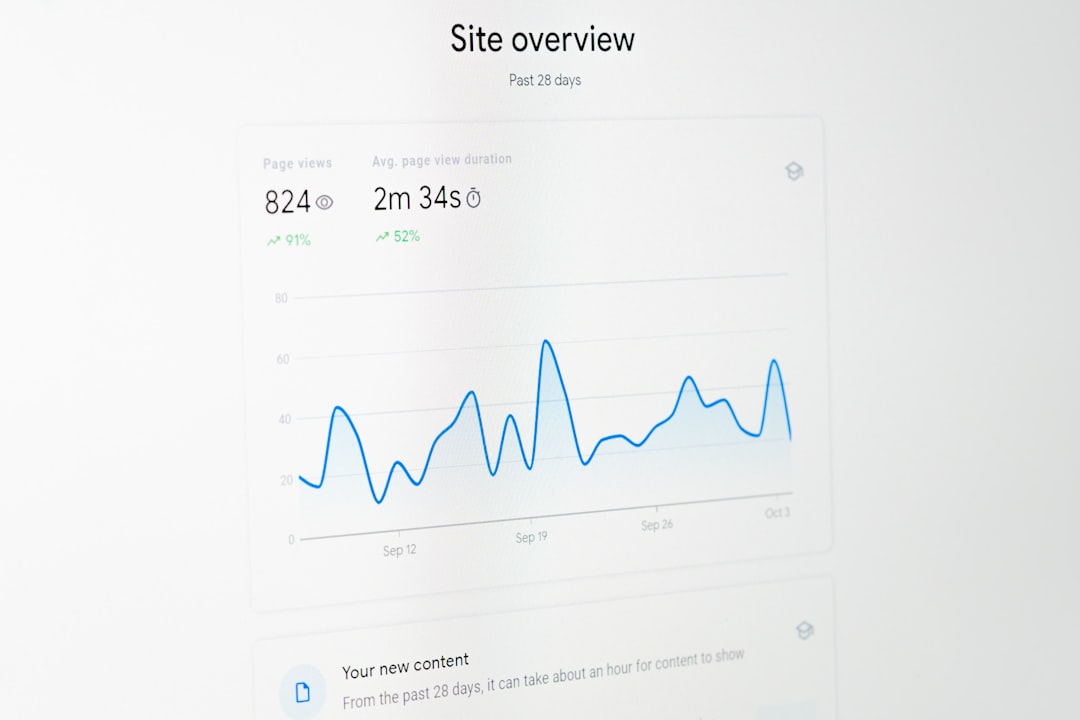

Google Search Console: The Core Web Vitals report in Search Console shows LCP, INP, and CLS performance based on CrUX data, segmented by mobile and desktop, with URL-level granularity for pages with sufficient traffic.

PageSpeed Insights: Combines CrUX field data (when available for the URL or origin) with Lighthouse lab data. The single most accessible tool for checking Core Web Vitals for a specific URL.

CrUX Dashboard: A Looker Studio template that visualizes CrUX data over time for any origin. Useful for tracking trends and comparing performance against historical baselines.

Lab Tools (Controlled Measurement)

Lighthouse: Google's open-source auditing tool, built into Chrome DevTools. Provides simulated load times and CWV estimates alongside actionable recommendations. Lighthouse scores are not directly equivalent to field data -- by default, lab runs emulate a mid-range phone (historically a Moto G4) on a throttled slow 4G connection.

WebPageTest: More powerful than Lighthouse for diagnosing specific performance issues. Supports filmstrip views, waterfall charts, and multiple test locations. The 'Core Web Vitals' tab provides direct measurement of each metric.

web-vitals JavaScript library: Google's official library for measuring Core Web Vitals in the browser, matching the same calculation used in Chrome. Used for custom Real User Monitoring (RUM) implementation.
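A custom RUM setup built on this library typically rates each sample and ships it to a collection endpoint. The sketch below shows the pure part of that pipeline using the thresholds quoted in this article. The names `rateVital`, `toBeaconBody`, and `VitalSample`, as well as the `/vitals` endpoint in the comment, are assumptions for illustration. The browser wiring is left as a comment because it depends on the web-vitals package being installed; the library's metric objects also carry their own rating field, so `rateVital` is shown only to make the thresholds explicit.

```typescript
type VitalName = "LCP" | "INP" | "CLS";
type VitalRating = "good" | "needs-improvement" | "poor";

// [upper bound for good, upper bound for needs-improvement].
// LCP and INP are in milliseconds; CLS is unitless.
const VITAL_THRESHOLDS: Record<VitalName, [number, number]> = {
  LCP: [2500, 4000],
  INP: [200, 500],
  CLS: [0.1, 0.25],
};

// Classify a value against the published thresholds.
function rateVital(metric: VitalName, value: number): VitalRating {
  const [goodMax, needsImprovementMax] = VITAL_THRESHOLDS[metric];
  if (value <= goodMax) {
    return "good";
  }
  return value <= needsImprovementMax ? "needs-improvement" : "poor";
}

// The fields this sketch reads from a reported metric object.
interface VitalSample {
  name: VitalName;
  value: number;
  id: string;
}

// Serialize one sample for a RUM collection endpoint.
function toBeaconBody(sample: VitalSample, pageUrl: string): string {
  return JSON.stringify({
    metric: sample.name,
    value: sample.value,
    id: sample.id,
    rating: rateVital(sample.name, sample.value),
    page: pageUrl,
  });
}

// Browser wiring, not executed here. Assumes the web-vitals package and a
// hypothetical "/vitals" endpoint on the same origin:
//   import { onCLS, onINP, onLCP } from "web-vitals";
//   const send = (m: VitalSample) =>
//     navigator.sendBeacon("/vitals", toBeaconBody(m, location.href));
//   onCLS(send); onINP(send); onLCP(send);

console.log(rateVital("LCP", 3100)); // "needs-improvement"
```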

Core Web Vitals and SEO: The Ranking Signal Reality

Google has been careful to characterize Core Web Vitals as one signal among many, not a dominant ranking factor. Guidance from Google's Gary Illyes and John Mueller has consistently emphasized that relevance and content quality outweigh technical performance for most queries.

The practical implication is that Core Web Vitals function most like a tiebreaker. If two pages compete for the same query with similar content quality and authority, the page with better Core Web Vitals scores will tend to rank higher. For highly competitive queries where dozens of pages have roughly equivalent content quality, CWV become more significant.

Several independent SEO studies have attempted to quantify the ranking correlation. A 2021 study by Searchmetrics found a modest but measurable correlation between Core Web Vitals performance and ranking position, particularly for mobile rankings. A 2022 analysis by Semrush found that pages in the top 10 positions had meaningfully better LCP scores than pages ranking in positions 11-20. However, correlation is not causation: better-resourced sites with stronger domain authority tend to invest more in performance, creating a confound.

The honest assessment: improving Core Web Vitals to 'good' thresholds eliminates a potential negative signal, provides direct user experience benefits, and may provide a marginal ranking boost for competitive queries. It is not a shortcut to ranking high on irrelevant queries.

A Practical Improvement Workflow

The most effective approach to Core Web Vitals improvement follows a structured process:

Step 1: Baseline measurement -- Use PageSpeed Insights and Search Console to identify which metrics are failing, on which URLs, and on which device types. Mobile performance is more likely to fail (and more heavily weighted by Google) than desktop.

Step 2: Identify root causes -- Use Chrome DevTools Performance panel and WebPageTest waterfall charts to understand what is causing each metric to be poor. For LCP: is it TTFB, a render-blocking resource, or image download time? For INP: which interaction triggers the long task?

Step 3: Prioritize interventions -- Focus on the highest-traffic pages first. The improvements with the highest impact per unit of development effort (image optimization, LCP preloads, removing render-blocking CSS) should come before complex JavaScript refactoring.

Step 4: Monitor continuously -- Set up a RUM system using the web-vitals library to track field performance over time. Lighthouse in CI (using tools like Lighthouse CI or Calibre) prevents performance regressions from being shipped undetected.

Step 5: Iterate -- Core Web Vitals improvement is rarely a one-time project. JavaScript bundles grow, third-party scripts are added, content changes. Continuous monitoring and periodic audits maintain gains.
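The monitoring and iteration steps amount to comparing each new measurement against a baseline and flagging anything that got worse. A minimal sketch of such a check follows. The `VitalsSnapshot` shape, the `findRegressions` helper, the sample numbers, and the 10% tolerance are all assumptions chosen for illustration, and the snapshots could come from either field or lab data as long as both sides use the same source.

```typescript
// One measurement of the three metrics for a page or origin.
interface VitalsSnapshot {
  lcpMs: number;
  inpMs: number;
  cls: number;
}

// Return the metrics that worsened by more than `tolerance`
// (a ratio, so 0.1 means "more than 10% worse than the baseline").
function findRegressions(
  baseline: VitalsSnapshot,
  current: VitalsSnapshot,
  tolerance = 0.1,
): (keyof VitalsSnapshot)[] {
  const keys: (keyof VitalsSnapshot)[] = ["lcpMs", "inpMs", "cls"];
  return keys.filter((key) => current[key] > baseline[key] * (1 + tolerance));
}

const lastRelease: VitalsSnapshot = { lcpMs: 2400, inpMs: 180, cls: 0.05 };
const thisRelease: VitalsSnapshot = { lcpMs: 2450, inpMs: 260, cls: 0.05 };
console.log(findRegressions(lastRelease, thisRelease)); // [ "inpMs" ]
```

A build pipeline could fail or warn when the returned list is non-empty. The tolerance exists because both lab and field measurements vary from run to run, so small differences are not necessarily regressions.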

References

- Google Chrome Team. (2020). 'Defining the Core Web Vitals metrics thresholds.' web.dev/defining-core-web-vitals-thresholds.

- Sullivan, A., Walther, P., & McQuade, B. (2020). 'Evolving Cumulative Layout Shift in web tooling.' Chrome Developers Blog.

- Meenan, P. (2016). 'Time to First Byte: What It Is and Why It Matters.' WebPageTest Blog.

- Deloitte Digital. (2020). 'Milliseconds Make Millions.' Deloitte Insights.

- Walmart. (2012). 'Walmart: Performance Matters.' Velocity Conference presentation.

- Souders, S. (2007). 'High Performance Web Sites.' O'Reilly Media.

- Grigorik, I. (2013). 'High Performance Browser Networking.' O'Reilly Media.

- HTTP Archive. (2023). 'State of the Web: Third-party scripts.' httparchive.org/reports/state-of-the-web.

- Searchmetrics. (2021). 'Core Web Vitals Study: The Correlation Between Google Rankings and Performance Metrics.' Searchmetrics Research.

- Google Developers. (2024). 'INP is a Core Web Vital.' web.dev/inp.

- Chrome User Experience Report Documentation. developers.google.com/web/tools/chrome-user-experience-report.

- Semrush. (2022). 'Core Web Vitals and Ranking: What the Data Shows.' Semrush Blog.

Frequently Asked Questions

What are Core Web Vitals?

Core Web Vitals are three metrics Google uses to measure real-world page experience: LCP (loading speed), INP (interactivity), and CLS (visual stability). They became official Google ranking signals in 2021 and are measured using real user data from Chrome.

What is a good LCP score?

Google considers an LCP of 2.5 seconds or faster to be 'good.' Between 2.5 and 4 seconds needs improvement. Anything above 4 seconds is considered poor. LCP measures how long it takes for the largest visible content element to load.

What is INP and why did it replace FID?

INP (Interaction to Next Paint) replaced FID (First Input Delay) as a Core Web Vital in March 2024. INP measures the full responsiveness of a page across all interactions, not just the first one. A good INP score is 200 milliseconds or less.

What causes a high CLS score?

CLS (Cumulative Layout Shift) is typically caused by images or videos without specified dimensions, ads injected above existing content, dynamically injected content, and web fonts that cause text to reflow. A good CLS score is 0.1 or less.

How much do Core Web Vitals affect Google rankings?

Google describes Core Web Vitals as one ranking signal among many; in practice they behave most like a 'tiebreaker' -- they matter most when other ranking factors (relevance, authority) are roughly equal between competing pages. Strong content still outranks technically perfect pages on irrelevant topics.