Obedience — In the spring of 1961, a psychologist at Yale University placed an advertisement in the New Haven Register offering four dollars — plus fifty cents carfare — to men willing to participate in a study of memory and learning. The men who responded were ordinary: factory workers, engineers, salespeople, teachers.

They came to a nondescript laboratory in Linsly-Chittenden Hall, met a mild-mannered experimenter in a gray technician's coat, and were told they would help investigate how punishment affects learning. What happened next would become one of the most consequential, and most disturbing, experiments in the history of social psychology.

Stanley Milgram's obedience studies, conducted between 1961 and 1962 and first published in the Journal of Abnormal and Social Psychology in 1963, revealed something that virtually no one had predicted and many still struggle to accept: that a substantial majority of normal, psychologically healthy adults would, under the direction of an authority figure, administer what they believed to be severe and potentially lethal electric shocks to a screaming, pleading stranger. Milgram had asked psychiatrists, graduate students, and middle-class adults to predict how many subjects would reach the maximum shock level of 450 volts.

The consensus was that fewer than 2 percent would go all the way — sadists, perhaps, or individuals with some unusual psychopathology. The actual figure was 65 percent.

That number has haunted psychology for more than six decades.

"Ordinary people, simply doing their jobs, and without any particular hostility on their part, can become agents in a terrible destructive process." — Stanley Milgram, 1974

What Obedience to Authority Actually Means

Obedience to authority is the tendency of individuals to comply with the instructions, demands, or commands of a person or institution perceived as having legitimate power, even when those instructions conflict with the individual's own moral judgment or produce harm to others.

The key distinction here is between conformity and obedience. Conformity is behavioral adjustment driven by social pressure from peers. Obedience is hierarchical: it flows from a recognized authority downward. Milgram's contribution was to isolate and measure this hierarchical compliance under controlled conditions, stripping away the usual justifications (fear of punishment, personal benefit, ideological agreement) that might otherwise explain why people follow orders.

Destructive Obedience vs. Legitimate Authority

Not all obedience is problematic. Human societies depend on coordinated deference to rules, institutions, and expertise. The moral and psychological problem arises when that deference extends to instructions that cause unjustified harm. The following table maps the key distinctions:

| Dimension | Legitimate Authority | Destructive Obedience |
|---|---|---|
| Basis of authority | Expertise, consent, democratic mandate | Social role, uniform, institutional setting |
| Accountability | Authority accepts responsibility for outcomes | Authority diffuses or deflects responsibility downward |
| Harm to third parties | Minimized or regulated | Central to the instruction being given |
| Dissent permitted | Disagreement is possible without severe penalty | Dissent is actively suppressed or penalized |
| Moral agency of subordinate | Preserved: subordinate retains judgment | Suspended: subordinate enters "agentic state" |
| Transparency | Instructions and their purposes are open | Instructions may be obscured or rationalized |
| Reversibility | Subordinate can withdraw or question | Withdrawal is framed as betrayal or failure |

The concept of the "agentic state" is Milgram's own term from his 1974 book Obedience to Authority: An Experimental View. He proposed that when individuals enter a hierarchical structure, they shift from an autonomous mode — in which they see themselves as responsible for their own actions — to an agentic mode, in which they view themselves as instruments executing the will of a higher authority.

In the agentic state, moral self-regulation is not abolished but redirected: the person feels responsible to the authority, not for the consequences of the actions the authority directs.

The Yale Basement: The Original Experiment in Detail

The experimental setup Milgram devised was both elegant and ethically fraught. A naive subject arrived at the laboratory with a confederate posing as another participant. A rigged drawing always assigned the naive subject to the role of "Teacher" and the confederate to the role of "Learner." The Learner was strapped into a chair in an adjacent room, electrodes attached to his wrist, and told that the study involved learning word pairs.

The Teacher sat before a shock generator — a convincing piece of equipment with thirty switches ranging from 15 volts ("Slight Shock") to 450 volts ("XXX"). For each incorrect answer the Learner gave, the Teacher was instructed to administer a shock and advance to the next level.

The Learner, following a scripted sequence, gave wrong answers and at 75 volts began to grunt audibly. At 150 volts he demanded to be released from the experiment. At 285 volts his response was an agonized scream. At 300 volts he refused to provide any more answers. At 330 volts he fell silent — a silence that many subjects found more disturbing than the screaming, because it suggested unconsciousness or death.

When subjects hesitated or attempted to stop, the experimenter offered one of four scripted prods, delivered in a flat, calm tone:

- "Please continue" or "Please go on."

- "The experiment requires that you continue."

- "It is absolutely essential that you continue."

- "You have no other alternative; you must go on."

If the subject refused after all four prods, the experiment ended. In the baseline condition — where the Learner was in an adjacent room, heard but not seen — 65 percent of subjects (26 of 40) delivered the maximum 450-volt shock. All 40 subjects went to at least 300 volts.
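The procedure described above can be summarized as a short Python sketch. It is an illustration under stated assumptions, not Milgram's own materials: the function names and the `resistance` mapping (how many prods a hypothetical subject resists at a given voltage) are invented for this example, and the 15-volt spacing is inferred from thirty switches spanning 15 to 450 volts.

```python
# Illustrative sketch only; names and the subject model are hypothetical.
SWITCHES = 30
STEP_VOLTS = 15  # inferred: thirty switches spanning 15 V to 450 V

# The four scripted prods, in the order the experimenter used them.
PRODS = [
    "Please continue.",
    "The experiment requires that you continue.",
    "It is absolutely essential that you continue.",
    "You have no other alternative; you must go on.",
]


def shock_levels():
    """Return the voltage printed on each switch, lowest to highest."""
    return [STEP_VOLTS * i for i in range(1, SWITCHES + 1)]


def run_session(resistance):
    """Return the highest voltage a hypothetical subject delivers.

    `resistance` maps a voltage to the number of prods the subject
    resists at that level. Resisting all four prods ends the session
    before that shock is delivered.
    """
    highest_delivered = 0
    for volts in shock_levels():
        if resistance.get(volts, 0) >= len(PRODS):
            break  # subject still refuses after the fourth prod
        highest_delivered = volts
    return highest_delivered


if __name__ == "__main__":
    # A subject who wavers at 150 V but only refuses outright at 315 V.
    print(run_session({150: 2, 315: 4}))
```

A subject who never resists all four prods is counted as fully obedient, which is how the 26-of-40 baseline figure is defined in the text.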

Milgram ran approximately twenty-one variations of the study, each manipulating a different feature of the situation. These variations, reported in his later papers and most fully in his 1974 book, remain among the most informative data in social psychology.

The Proximity Variations

When the Learner was placed in the same room as the Teacher, visible and audible, the compliance rate dropped to 40 percent. When the Teacher was required to physically hold the Learner's hand onto a shock plate, it dropped further to 30 percent. Milgram interpreted this as evidence that psychological distance — the degree to which the harm-doer is buffered from direct sensory contact with the victim — is a critical mediating variable.

The further removed you are from the consequences of your actions, the easier it is to continue.

This finding has direct implications for modern bureaucratic and technological contexts. An air force navigator who releases bombs from 30,000 feet, a debt collector who sends form letters, a social media moderator who processes reports at industrial scale — each operates at a distance that attenuates the feedback loop between action and consequence.

The Legitimacy Variations

When the experiment was moved from Yale University to a nondescript office building in Bridgeport, Connecticut — described only as a "commercial" research entity — the compliance rate fell to 47.5 percent. Institutional prestige, in other words, contributed roughly 17 percentage points to compliance. The Yale setting communicated legitimacy, trustworthiness, and scientific authority. Strip that away and some subjects found it easier to disobey.

More dramatically, when the experimenter left the room and delivered instructions by telephone, compliance dropped to 20.5 percent. Several subjects in this condition administered weaker shocks than instructed while claiming over the phone to be following the protocol. Authority, Milgram concluded, requires physical presence to be maximally effective.

The Peer Pressure Variation

Perhaps the most striking variation involved the introduction of two additional confederates who also played Teachers. When these confederates defied the experimenter at 150 volts and refused to continue, the rate of full compliance (to 450 volts) among the genuine subjects dropped to just 10 percent. A single act of visible dissent by peers dramatically reduced the authority's hold.

This finding anticipates later research on social proof and minority influence — the structural pressure of conformity can be redirected against obedience when a credible alternative is modeled.
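The compliance figures quoted in the variations above are easier to compare side by side. The following Python sketch simply tabulates the percentages already given in the text and computes each condition's drop from the 65 percent baseline; the condition labels and the `drop_from_baseline` helper are illustrative names, not Milgram's terminology.

```python
# Full-compliance rates (percent reaching 450 V) as quoted in the text above.
BASELINE = 65.0

FULL_COMPLIANCE = {
    "Baseline (Learner heard, not seen)": 65.0,
    "Bridgeport office building": 47.5,
    "Learner in same room": 40.0,
    "Teacher holds Learner's hand on plate": 30.0,
    "Orders given by telephone": 20.5,
    "Two peers refuse to continue": 10.0,
}


def drop_from_baseline(rate, baseline=BASELINE):
    """Percentage-point drop relative to the baseline condition."""
    return baseline - rate


if __name__ == "__main__":
    ranked = sorted(FULL_COMPLIANCE.items(), key=lambda item: item[1], reverse=True)
    for condition, rate in ranked:
        drop = drop_from_baseline(rate)
        print(f"{condition:<40} {rate:5.1f}%   drop: {drop:4.1f} pts")
```

Computed this way, the Bridgeport drop is 17.5 points, and peer dissent produces the largest reduction of the conditions listed here.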

Cognitive Science: Mechanisms Underlying Obedience

Moral Disengagement

Albert Bandura, the Stanford psychologist who developed social cognitive theory, proposed the concept of moral disengagement to describe the psychological mechanisms through which people deactivate their internal moral standards when engaging in harmful behavior (Journal of Moral Education, 2002). Milgram's subjects exhibited several of Bandura's mechanisms in real time: diffusion of responsibility ("the experimenter is in charge"), dehumanization of the victim ("he volunteered for this"), euphemistic labeling ("I'm just administering a stimulus"), and displacement of responsibility onto the institution ("Yale wouldn't have approved this if it were dangerous").

Moral disengagement is not rationalization after the fact. Bandura's research demonstrated that it operates proactively, adjusting behavior before and during the harmful act rather than only providing post-hoc justification.

The Role of Situational Framing

Lee Ross and Richard Nisbett, in their 1991 synthesis The Person and the Situation, argued that Milgram's experiments constitute the strongest empirical demonstration of the power of situational forces over dispositional characteristics. The "fundamental attribution error" — people's tendency to explain others' behavior in terms of character rather than context — led almost everyone, including professional psychologists, to dramatically underestimate the situational pull of Milgram's laboratory.

Philip Zimbardo extended this analysis in his concept of the "Lucifer Effect," developed in his 2007 book of the same name. Following his 1971 Stanford Prison Experiment (conducted with Craig Haney and W. Curtis Banks, published in International Journal of Criminology and Penology, 1973), Zimbardo argued that situations do not merely influence behavior — they can transform it so thoroughly that ordinary individuals become capable of systematic cruelty in a matter of days.

The Stanford Prison Experiment, in which student volunteers assigned to "guard" and "prisoner" roles in a simulated prison rapidly adopted their roles with genuine brutality and genuine suffering, provides an important contrast to Milgram's work. Where Milgram's subjects experienced acute distress and visible moral conflict — many trembled, sweated, and begged to stop even as they continued — Zimbardo's guards showed something closer to role absorption without apparent conflict.

The two studies together suggest that obedience can operate through at least two distinct psychological pathways: explicit compliance under pressure, and gradual identity assimilation into a social role.

Neural and Cognitive Load Accounts

More recent neuroscience research has begun to probe the neural substrates of obedience. Emilie Caspar and colleagues (Current Biology, 2016) found that when participants performed harmful actions under coercion, electrophysiological (EEG) responses to the outcomes of their own actions were attenuated compared to when they acted freely.

This suggests that the experience of reduced agency under authority is not merely a post-hoc rationalization but has a measurable neural correlate. Subjects quite literally felt less like the authors of their own actions when ordered to act.

Patrick Haggard, a co-author of that study at University College London, has pursued this line of inquiry using temporal binding paradigms, which measure the perceived time gap between action and consequence as a proxy for sense of agency. In coercive contexts, the temporal binding effect weakens, indicating that people's implicit sense of causal authorship is genuinely reduced when acting under orders (Current Biology, 2016).

The brain, in some measurable sense, partially reassigns authorship to the authority.

Four Case Studies Across Domains

Case Study 1: The Holocaust and the Reserve Police Battalion

Christopher Browning's 1992 historical monograph Ordinary Men examined Reserve Police Battalion 101, a unit of middle-aged German men who between 1942 and 1943 participated in the mass murder of approximately 83,000 Jewish civilians in occupied Poland. Browning's analysis drew on postwar testimonies to reconstruct how these men — not SS ideologues, not ardent Nazis, but ordinary middle-aged workers and tradespeople — came to participate in systematic atrocity.

Battalion commander Major Wilhelm Trapp explicitly told his men at Jozefow that those who felt unable to participate could step aside. Between ten and twenty men out of five hundred did so immediately. The rest complied — not because they were ordered under threat of their own death (a common post-hoc rationalization), but because of career concerns, conformity pressure, unwillingness to appear weak before peers, and the gradual normalization of each successive step.

Browning's analysis aligns closely with Milgram's proximity findings: the men who were assigned to the outer cordon — rounding up victims but not shooting them directly — found it psychologically easier to continue than those forced into direct killing.

Case Study 2: The My Lai Massacre, 1968

On March 16, 1968, soldiers of Charlie Company, 1st Battalion, 20th Infantry Regiment, United States Army, killed between 347 and 504 unarmed South Vietnamese civilians in the hamlet of My Lai. The troops had been briefed that the village was a Viet Cong stronghold. They encountered no enemy combatants.

Under the leadership of Lieutenant William Calley, and in a context where superiors had communicated an expectation of aggressive action, soldiers killed men, women, children, and infants over approximately four hours.

The subsequent Army investigation (the Peers Commission, 1970) documented not a breakdown of command authority but an excess of it: soldiers followed orders, or their interpretation of the tacit permissions embedded in orders, in a context where the hierarchical pressure to perform and the enemy-frame dehumanization of Vietnamese civilians had combined to disable normal moral inhibition. Crucially, Warrant Officer Hugh Thompson, a helicopter pilot who landed his aircraft between soldiers and fleeing civilians and radioed for assistance, demonstrated that individual moral resistance was possible.

Thompson's act of defiance mirrors the dissenting confederate in Milgram's peer-pressure variation: visible disobedience by one person can interrupt the authority's grip.

Case Study 3: Enron and Corporate Obedience

The collapse of Enron Corporation in 2001 offers a high-stakes case study in organizational obedience within a corporate hierarchy. Subsequent investigations and the work of journalists Bethany McLean and Peter Elkind (The Smartest Guys in the Room, 2003) documented that numerous mid-level executives, accountants, and analysts knew, or had strong reason to suspect, that the company's financial reporting was fraudulent.

Many complied nonetheless — approving accounting treatments they doubted, signing documents they knew to be misleading, suppressing concerns in meetings where the authority of senior leaders like CEO Jeffrey Skilling was absolute.

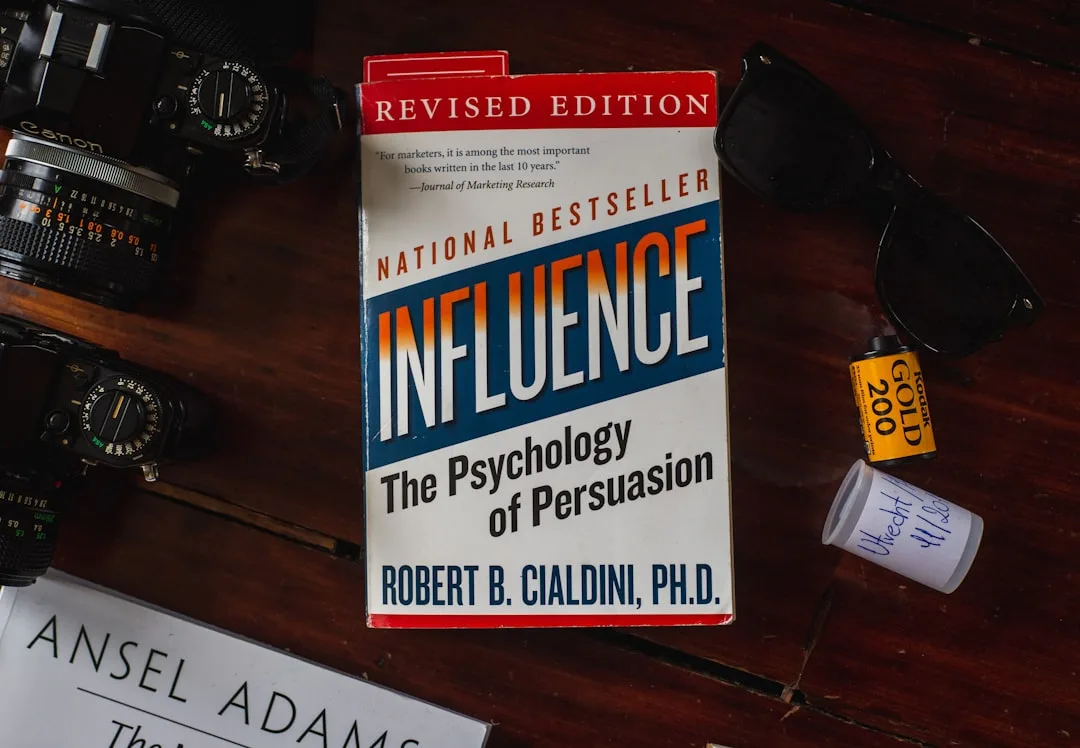

Psychologist Robert Cialdini's framework of authority as a principle of influence (Influence: The Psychology of Persuasion, 1984) helps explain this: in organizational contexts, authority signals are carried not just by formal rank but by confidence, expertise-display, and institutional momentum. Skilling's aggressive self-certainty, combined with Enron's celebrated status and the financial rewards of compliance, created a powerful obedience context that suppressed dissent at every level.

The Enron case is particularly instructive because the harm was diffuse and temporally distant — not a screaming victim in the next room, but future shareholders, employees, and pension holders who would suffer years later. Milgram's proximity finding predicts exactly this: greater distance from the harm makes compliance far easier to sustain.

Case Study 4: Medical Compliance and the Hofling Hospital Study

In a 1966 study published in the Journal of Nervous and Mental Disease, Charles Hofling and colleagues conducted a naturalistic experiment in which a nurse received a telephone call from an unknown "doctor" asking her to administer twice the maximum safe dose of a fictitious drug called Astroten to a patient. The drug was not on the hospital's approved list.

The order violated multiple hospital protocols. Twenty-one of twenty-two nurses (95 percent) proceeded to follow the instruction, stopped only by a researcher before actual administration.

Hofling's study extends Milgram's findings to a real-world professional context: medical training creates a strong authority hierarchy in which physicians occupy a dominant position and nurses are socialized to defer. The nurses in Hofling's study knew the protocol violations; they followed the order anyway.

Replications and extensions by Rank and Jacobson (Journal of Health and Social Behavior, 1977) complicated this picture somewhat: nurses with greater professional experience and collegial support were more likely to question questionable orders. Even so, the baseline deference rate in Hofling's original study remains a striking datum.

Intellectual Lineage: Arendt, Eichmann, and the Banality of Evil

Milgram's experiments did not emerge in a theoretical vacuum. They were directly inspired by the trial of Adolf Eichmann, the Nazi SS officer responsible for the logistics of the Holocaust's deportation system, who was captured by Israeli intelligence, tried in Jerusalem, and executed in 1962. Hannah Arendt, the political philosopher, attended the trial and published her analysis as Eichmann in Jerusalem: A Report on the Banality of Evil in 1963 — the same year as Milgram's first paper.

Arendt's central and deeply controversial argument was that Eichmann was not a monster. He was not driven by ideological fanaticism or sadistic pleasure. He was, she wrote, "terribly and terrifyingly normal" — a bureaucrat whose defining characteristic was thoughtlessness, a failure to think from the standpoint of anyone else, a complete absorption in the procedural demands of his role.

He had organized the deportation of millions without, she argued, ever confronting what deportation meant for the people in the trains.

The phrase "banality of evil" has frequently been misunderstood as a claim that Eichmann was ordinary or that his crimes were minor. Arendt meant something more precise: that great evil does not require malevolent psychology. It can be produced by the suspension of moral thinking — by the replacement of judgment with role performance. Eichmann was banal not because his crimes were small but because the man who committed them had made himself functionally thoughtless.

Milgram was explicit about the connection. In Obedience to Authority (1974), he wrote: "I must conclude that Arendt's conception of the banality of evil comes closer to the truth than one might dare imagine." The psychological mechanism Milgram proposed — the agentic state, in which individuals cease to act as moral agents and become instruments of institutional will — is the experimental correlate of Arendt's philosophical observation.

The intellectual lineage extends further back. Max Weber's analysis of authority types (Economy and Society, 1922) distinguished between traditional authority (rooted in custom), charismatic authority (rooted in exceptional personal qualities), and rational-legal authority (rooted in formal rules and roles). Milgram's laboratory exploited rational-legal authority — the authority of the scientist, the institution, the research protocol — in its purest form.

Weber had observed that rational-legal authority, precisely because it is impersonal and procedural, has a uniquely powerful capacity to extract compliance, since it frames obedience as adherence to a system rather than submission to a person.

Theodor Adorno and his colleagues at the University of California, Berkeley published The Authoritarian Personality in 1950, arguing that obedience to authority was partly a function of a stable personality configuration — the "authoritarian" character — marked by submission to in-group authority, aggression toward out-groups, and rigid adherence to conventional values. Milgram's work challenged this dispositional model: his subjects were not selected for authoritarianism, and yet the majority obeyed.

The situation, not the personality, was doing most of the work.

Empirical Research: Replications, Extensions, and the Meta-Analytic Record

Blass (1999): Cross-Cultural and Longitudinal Meta-Analysis

Thomas Blass at the University of Maryland reviewed all published replications of Milgram's paradigm across multiple decades and countries in a 1999 meta-analysis published in the Journal of Applied Social Psychology. His synthesis covered studies conducted in the United States, Germany, Italy, Austria, Spain, Jordan, and Australia between 1963 and 1985.

The core finding: obedience rates ranged from 28 to 91 percent across studies, averaging about 61 percent in U.S. studies and about 66 percent elsewhere, close to Milgram's original baseline. Neither time period nor country of origin produced a consistent moderating effect. The structural features of the authority situation, not cultural particularities, appear to be primary drivers.

Burger (2009): The Partial Replication

Jerry Burger at Santa Clara University published a partial replication in the American Psychologist in 2009, designed to satisfy contemporary ethical standards by stopping the procedure at 150 volts, the point at which the Learner first demands to be released. This point was chosen because in Milgram's original data, roughly four in five subjects who passed 150 volts continued to the maximum.

Burger found that 70 percent of his sample (compared to Milgram's 82.5 percent at the same voltage) continued past the 150-volt point when the experimenter prompted them to do so. The similarity to Milgram's figures, nearly five decades later, in a different cultural moment and with contemporary American participants, strongly suggests the robustness of the effect.

Burger also included a condition in which a dissenting confederate was introduced — a partial replication of Milgram's "peer rebel" variation. When participants witnessed another person refuse to continue at 90 volts, the continuation rate at 150 volts dropped to 63 percent. The peer effect replicated directionally, though the magnitude was smaller than in Milgram's original variation.
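One way to gauge how close Burger's 70 percent is to Milgram's 82.5 percent is a two-proportion z-test. The sketch below is illustrative only and rests on a loudly stated assumption: it treats both groups as having 40 participants, which gives 28 and 33 continuing respectively. The text above gives 40 subjects for Milgram's baseline but does not state Burger's sample size, so the counts here are assumptions, and the normal approximation is a simplification rather than the analysis either author reported.

```python
import math


def two_proportion_z(x1, n1, x2, n2):
    """Two-sided z-test for a difference between two proportions.

    Uses the pooled standard error and a normal approximation.
    Returns (z, p_value).
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # Two-sided tail probability of a standard normal variable.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# ASSUMPTION: 40 participants per group (not stated in the text for Burger).
MILGRAM_CONTINUED, MILGRAM_N = 33, 40  # 82.5 percent continued past 150 V
BURGER_CONTINUED, BURGER_N = 28, 40    # 70 percent continued past 150 V

if __name__ == "__main__":
    z, p = two_proportion_z(MILGRAM_CONTINUED, MILGRAM_N, BURGER_CONTINUED, BURGER_N)
    print(f"z = {z:.2f}, two-sided p = {p:.2f}")
```

Under these assumed sample sizes the gap does not reach the conventional 0.05 threshold, which is consistent with reading the two rates as similar; different sample sizes would change the result.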

Dambrun and Vatine (2010): The Immersive Video Replication

Michael Dambrun and Elise Vatine at the Université Blaise Pascal published a replication using an immersive video environment (European Journal of Social Psychology, 2010), replacing the live confederate Learner with a simulated victim presented on screen. Subjects administered shocks to a victim they knew was not really being harmed, but who displayed realistic distress responses.

Despite the obvious artificiality — participants knew the victim was virtual — compliance rates were substantial, and physiological measures (skin conductance, heart rate) indicated genuine stress responses. The virtual paradigm demonstrated that even when subjects have no rational ground for believing they are causing harm, the authority situation generates behavioral compliance and somatic distress.

Meeus and Raaijmakers (1986): Bureaucratic Harm Without Physical Violence

W.H.J. Meeus and Q.A.W. Raaijmakers at Utrecht University designed an obedience paradigm that replaced physical shock with administrative harm: subjects were instructed to make increasingly disparaging remarks to a job applicant who was trying to complete a test, remarks that the experimenter said would likely cause the applicant to fail and thereby lose a job opportunity. Ninety-two percent of subjects followed through to the maximum level of harassment (European Journal of Social Psychology, 1986).

The study demonstrated that physical violence is not required to produce high obedience rates; the paradigm generalizes to social and institutional harm delivered at bureaucratic remove.

Limits, Nuances, and Critiques

Ecological Validity

Milgram's critics, including Diana Baumrind writing in the American Psychologist in 1964, raised concerns about ecological validity — whether behavior in an artificial laboratory setting generalizes to real-world contexts. The criticism has merit: in Milgram's laboratory, subjects had strong reasons to trust that the institution would not actually harm anyone.

Real-world obedience contexts involve different mixtures of genuine risk, ideological justification, career stakes, and gradual normalization that may produce different dynamics.

However, the natural experiments described in the case studies above — My Lai, Reserve Police Battalion 101, Hofling's hospital study — suggest that the laboratory findings generalize with disturbing fidelity. The directional validity of Milgram's results appears secure even if the exact percentages may not translate.

Gina Perry's Archival Research

Gina Perry's 2013 book Behind the Shock Machine examined Milgram's original data archives and conducted interviews with surviving participants. Perry raised important methodological concerns: some experimenters deviated from the scripted prods, applying more personalized pressure than Milgram's protocol specified.

This would mean that some of the compliance recorded was driven by experimenter improvisation rather than the formal situational structure — potentially inflating obedience rates. Perry's work does not invalidate Milgram's core findings but demands greater attention to procedural fidelity in interpreting the data.

Personality as a Moderator

While situational factors explain the majority of variance in obedience behavior, personality is not irrelevant. Blass's 1991 review (Journal of Personality and Social Psychology) found that measures of authoritarianism, locus of control, and moral development moderated obedience rates within studies, even if they could not account for the cross-situational pattern. Individuals with higher internal locus of control, those who believe their actions determine outcomes, were somewhat more likely to disobey.

Individuals scoring higher on Kohlberg's stages of moral development showed modestly lower compliance. The effect sizes were small relative to situational factors but were reliably present.

Gender

Milgram's original studies used only male subjects. He later ran a female sample and found identical rates of obedience — 65 percent — suggesting that the effect is not modulated by gender, at least as a simple main effect. Female subjects, however, reported higher levels of distress during the procedure. The behavioral outcome was the same; the affective experience differed.

The Conditions for Disobedience

Perhaps the most practically important insight from the full body of Milgram's research is not what produces obedience but what disrupts it. Four variables consistently reduced compliance:

- Increased proximity to the victim — physical or sensory contact with the person being harmed.

- Visible dissent by peers — watching another person refuse to comply reduced obedience to 10 percent.

- Conflicting authorities — when two experimenters disagreed with each other, compliance dropped to near zero; subjects exploited the ambiguity to exit.

- Reduced institutional legitimacy — moving the experiment from Yale to a commercial office building reduced compliance by 17 percentage points.

These findings are not merely academic. They suggest concrete structural interventions: building dissent into organizational cultures, reducing the psychological distance between decision-makers and affected populations, ensuring that authority structures contain internal checks and visible expressions of disagreement.

What Milgram's Data Actually Mean

The 65 percent figure is frequently cited as evidence for a pessimistic view of human nature. But Milgram himself resisted this interpretation, and the full body of his variations supports a more nuanced conclusion: the capacity for destructive obedience is situationally produced, and the same situational logic that generates it can be modified to reduce it.

The subjects who obeyed were not indifferent. They sweated, trembled, laughed nervously, and pleaded with the experimenter. Many later described the experience as the most disturbing of their lives. Their bodies knew something was wrong. What they lacked was the structural permission and the social model for refusal. When those were provided — through peer dissent, through reduced institutional authority, through physical proximity to the victim — compliance dropped dramatically.

This points toward a conclusion that is sobering but not hopeless: the problem of destructive obedience is primarily a problem of institutional and social design, not of individual depravity. The question is not only what kind of people obey, but what kind of institutions make disobedience impossible, costly, or unthinkable.

Organizations that suppress dissent, that create vast psychological distance between decision-makers and consequences, that concentrate authority without accountability, that reward compliance and penalize doubt — such organizations are, by their structure, obedience machines.

Milgram wrote in 1974: "The disappearance of a sense of responsibility is the most far-reaching consequence of submission to authority." Six decades of replication, extension, and historical evidence have not substantially revised that judgment. The question his research leaves us with is not whether ordinary people can be made to follow harmful orders. We know they can, reliably, under the right conditions. The question is what we choose to do with that knowledge.

References

Milgram, S. (1963). Behavioral study of obedience. Journal of Abnormal and Social Psychology, 67(4), 371-378.

Milgram, S. (1974). Obedience to Authority: An Experimental View. Harper & Row.

Arendt, H. (1963). Eichmann in Jerusalem: A Report on the Banality of Evil. Viking Press.

Blass, T. (1999). The Milgram paradigm after 35 years: Some things we now know about obedience to authority. Journal of Applied Social Psychology, 29(5), 955-978.

Burger, J. M. (2009). Replicating Milgram: Would people still obey today? American Psychologist, 64(1), 1-11.

Haney, C., Banks, C., & Zimbardo, P. (1973). Interpersonal dynamics in a simulated prison. International Journal of Criminology and Penology, 1(1), 69-97.

Bandura, A. (2002). Selective moral disengagement in the exercise of moral agency. Journal of Moral Education, 31(2), 101-119.

Caspar, E. A., Christensen, J. F., Cleeremans, A., & Haggard, P. (2016). Coercion changes the sense of agency in the human brain. Current Biology, 26(5), 585-592.

Hofling, C. K., Brotzman, E., Dalrymple, S., Graves, N., & Pierce, C. M. (1966). An experimental study in nurse-physician relationships. Journal of Nervous and Mental Disease, 143(2), 171-180.

Meeus, W. H. J., & Raaijmakers, Q. A. W. (1986). Administrative obedience: Carrying out orders to use psychological-administrative violence. European Journal of Social Psychology, 16(4), 311-324.

Browning, C. R. (1992). Ordinary Men: Reserve Police Battalion 101 and the Final Solution in Poland. HarperCollins.

Dambrun, M., & Vatine, E. (2010). Reopening the study of extreme social behaviors: Obedience to authority within an immersive video environment. European Journal of Social Psychology, 40(5), 760-773.

Frequently Asked Questions

What did Milgram's obedience experiments find?

Stanley Milgram's 1963 Journal of Abnormal and Social Psychology paper reported that 65% of subjects — ordinary New Haven adults recruited through newspaper ads — administered what they believed were 450-volt electric shocks to another person when instructed to do so by an authority figure (an experimenter in a lab coat). Subjects heard the 'learner' (a confederate) scream in pain, demand to be released, and eventually fall silent. Most subjects showed visible distress but continued when the experimenter said 'Please continue' or 'The experiment requires that you continue.' Before conducting the study, Milgram had polled psychiatrists who predicted fewer than 1% would administer the maximum shock. The actual rate was 65%.

What conditions reduced obedience in Milgram's variations?

Milgram ran 19 experimental variations that systematically identified factors modulating obedience. Physical proximity of the victim reduced obedience: when subjects were in the same room as the learner (the proximity condition), obedience dropped to 40%. When subjects had to press the learner's hand onto a shock plate (the touch-proximity condition), it fell to 30%. Social support for disobedience proved most powerful: when two confederate 'teachers' refused to continue, obedience among naive subjects dropped to 10%. Institutional legitimacy mattered: moving the experiment from Yale to a run-down commercial building in Bridgeport reduced obedience to 47.5%. Distance from the authority figure also helped: when the experimenter gave orders by phone, obedience fell to 20.5%.

What is Hannah Arendt's 'banality of evil'?

Hannah Arendt's 1963 book 'Eichmann in Jerusalem,' based on her reporting at the trial of Nazi war criminal Adolf Eichmann, argued that Eichmann was not a monster or fanatic but a bureaucrat: a man who had simply stopped thinking about the moral implications of his actions and focused on career advancement and efficient job performance within a system that had normalized mass murder. Arendt called this the 'banality of evil': extreme harm can be produced not by exceptional malevolence but by ordinary conformity, bureaucratic role-following, and the suspension of moral judgment under authority. Milgram began his experiments in 1961, the year of Eichmann's trial and two years before Arendt's book appeared, so her book could not have shaped their design. In his 1974 book he cited her account and treated his results as laboratory support for her thesis.

Has Milgram's experiment been replicated?

Thomas Blass's 1999 review of obedience studies across multiple countries and decades found that obedience rates ranged from 28% to 91%, with most studies clustering around 60-66%, similar to Milgram's original findings. Jerry Burger's 2009 partial replication in American Psychologist, which stopped at 150 volts for ethical reasons, found that 70% of subjects were prepared to continue past that point, comparable to Milgram's results at the same juncture. A replication by Dambrun and Vatine (2010), run in an immersive video environment with simulated electric shocks, produced similar compliance rates. The core finding, that a substantial majority of ordinary people will harm others under authority pressure, has proven robust across contexts.

What does obedience research mean for organizations?

Milgram's situationist findings imply that harmful organizational behavior arises less from the presence of bad individuals and more from structures that distribute responsibility, normalize incremental harm, and insulate decision-makers from consequences. Christopher Browning's 1992 historical analysis of Reserve Police Battalion 101, a unit of ordinary middle-aged German men who chose to participate in mass killings despite being offered the option to withdraw, showed that peer pressure, role socialization, and incremental escalation produced compliance from people who had not been selected for ideology. The conclusions for organizational design follow directly: visible accountability, authority over harmful decisions that is shared rather than concentrated, direct contact with those affected by decisions, and institutional channels for dissent all reduce obedience to harmful directives.