Analytics — "In God we trust; all others must bring data." — W. Edwards Deming

In 2011, Netflix committed roughly $100 million to a single show. It pledged that sum to House of Cards before a single episode had been filmed, before any audience had seen a trailer, and without the pilot that a traditional network would have tested first. By conventional entertainment industry standards, this was a reckless bet. By Netflix's standards, it was a calculated one.

Netflix had spent years building one of the most detailed audience analytics systems in the entertainment industry. It knew which of its 33 million subscribers at the time had watched films directed by David Fincher. It knew which users had watched the British version of House of Cards to completion. It knew which audiences had sought out films starring Kevin Spacey.

The intersection of those three audiences was large and engaged, and it matched the audience profile that House of Cards would need to succeed. The data did not guarantee that the show would be good. What it did was dramatically reduce the uncertainty around whether an audience existed for it.

This is what data analytics actually does: it converts uncertainty into structured understanding, enabling decisions that are better calibrated to reality than decisions made on intuition alone.

"The goal is to turn data into information, and information into insight." — Carly Fiorina

What Data Analytics Actually Is

Data analytics is the practice of examining raw data — numbers, text, timestamps, behavioral logs, survey responses, transaction records — to identify patterns, draw conclusions, and support decisions. It is not a single technique but a family of approaches that span basic arithmetic and descriptive statistics all the way to machine learning models with millions of parameters.

What distinguishes analytics from simply having data is the deliberate application of method. An organization might collect millions of rows of customer transaction data and have no analytics capability at all — the data exists but no one is asking it questions or equipped to do so. Analytics requires both the data and the people, tools, and processes to examine it purposefully.

The word "analytics" is used loosely enough that it covers everything from a spreadsheet pivot table to a real-time machine learning pipeline running on petabytes of data. That breadth sometimes obscures a useful conceptual framework: four distinct types of analytics, each answering a different kind of question.

The Four Types of Data Analytics

| Analytics Type | Question It Answers | Example | Tools and Techniques |
|---|---|---|---|
| Descriptive | What happened? | Monthly sales report, traffic dashboard | SQL, Tableau, Power BI |
| Diagnostic | Why did it happen? | Investigating a 15% Q3 sales drop | Drill-down analysis, correlation, segmentation |
| Predictive | What will happen? | Credit scoring, churn prediction | Machine learning, time series models, R/Python |
| Prescriptive | What should we do? | Dynamic pricing, supply chain optimization | Optimization algorithms, simulation |

Descriptive Analytics: What Happened

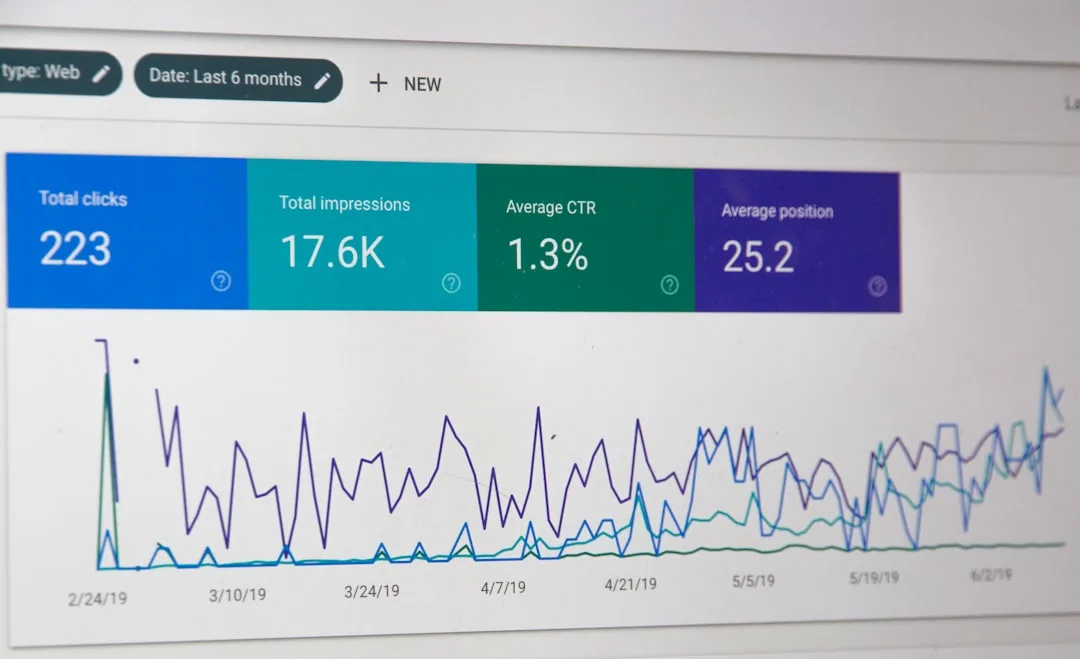

Descriptive analytics summarizes historical data to answer the question "what happened?" It is the most common form of analytics and the foundation upon which all more advanced forms are built. Monthly sales reports, website traffic dashboards, customer satisfaction score summaries, and inventory status reports are all descriptive analytics.

Descriptive analytics does not explain or predict anything on its own. It is a rearview mirror: accurate and necessary, but only showing you where you have been. Its value lies in establishing baselines, identifying anomalies that warrant investigation, and creating a shared understanding of current performance across an organization.

A company that cannot describe its own operations — that cannot answer "how many customers did we acquire last month and how does that compare to the prior month" — is not ready for any more sophisticated form of analytics.
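The month-over-month question above can be answered with very little machinery. The following is a minimal sketch, assuming a hypothetical list of signup months; the data and the function names `monthly_counts` and `month_over_month` are illustrative, not taken from any particular tool.

```python
from collections import Counter

# Hypothetical sample: signup month ("YYYY-MM") for each new customer.
signups = ["2024-01", "2024-01", "2024-02", "2024-02", "2024-02", "2024-03"]

def monthly_counts(months):
    """Count new customers per month, returned in chronological order."""
    counts = Counter(months)
    return dict(sorted(counts.items()))

def month_over_month(counts):
    """Percent change in acquisitions versus the prior month."""
    months = list(counts)
    changes = {}
    for prev, curr in zip(months, months[1:]):
        changes[curr] = 100 * (counts[curr] - counts[prev]) / counts[prev]
    return changes

counts = monthly_counts(signups)
changes = month_over_month(counts)
```

In practice the same summary would more likely be a SQL `GROUP BY` feeding a dashboard; the point is only that descriptive analytics is counting and comparing, done consistently.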

Diagnostic Analytics: Why It Happened

Diagnostic analytics goes one level deeper, asking "why did this happen?" When descriptive analytics reveals that sales dropped 15 percent in Q3, diagnostic analytics investigates the cause. Was it a specific product line? A specific region? Was it correlated with a competitor's price change? A shift in search rankings? A supply chain disruption that affected availability?

Diagnostic analytics involves techniques like drill-down analysis, correlation analysis, and segmentation — breaking the aggregate number into components to find where the anomaly lives. It is often the most labor-intensive form of analytics because it requires exploring hypotheses rather than simply producing a pre-defined report.

A skilled data analyst doing diagnostic work is part statistician, part detective: following threads in the data, testing alternative explanations, and ruling out confounds.
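A drill-down can be sketched in a few lines: take the aggregate change, split it by a dimension, and see where the drop concentrates. The regional figures below are hypothetical and chosen so the total falls by 15 percent, matching the example above; `drill_down` is an illustrative name.

```python
# Hypothetical quarterly sales by region (same units for both quarters).
q2_sales = {"North": 400, "South": 300, "West": 300}
q3_sales = {"North": 390, "South": 160, "West": 300}

def drill_down(before, after):
    """Return each segment's change, sorted so the largest drop comes first."""
    deltas = {segment: after[segment] - before[segment] for segment in before}
    return sorted(deltas.items(), key=lambda item: item[1])

before_total = sum(q2_sales.values())
after_total = sum(q3_sales.values())
total_change_pct = 100 * (after_total - before_total) / before_total

by_region = drill_down(q2_sales, q3_sales)
worst_region, worst_delta = by_region[0]
```

Here almost the entire decline sits in one region, which narrows the next round of hypotheses. A real investigation would repeat the split along other dimensions (product line, channel, time) before drawing conclusions.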

Predictive Analytics: What Will Happen

Predictive analytics uses historical patterns to forecast future events. Statistical models, machine learning algorithms, and time series analysis are the primary tools. Credit scoring is one of the oldest applications: banks use historical repayment data to predict the probability that a given applicant will default.

Insurance companies use predictive models to estimate the likelihood of claims. E-commerce companies predict which customers are likely to churn so retention campaigns can be deployed before those customers leave.

The limitation of predictive analytics is that it predicts from patterns — it assumes the future will resemble the past in the relevant dimensions. Predictive models trained on pre-pandemic consumer behavior often performed poorly in 2020 precisely because the fundamental patterns they learned no longer held. All predictive models carry this caveat: they are extrapolations, not certainties, and their accuracy degrades as conditions diverge from the training environment.
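The extrapolation caveat is easy to see in a toy forecast. This sketch fits a straight line to six months of hypothetical demand by ordinary least squares and projects month seven; the numbers and the "shock" value are invented for illustration, and a real model would be considerably richer.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical monthly demand that grew steadily for six months.
months = [1, 2, 3, 4, 5, 6]
demand = [100, 110, 120, 130, 140, 150]

slope, intercept = fit_line(months, demand)
forecast_month_7 = slope * 7 + intercept

# If conditions change (a hypothetical shock), the extrapolation misses badly.
actual_month_7 = 60
forecast_error = forecast_month_7 - actual_month_7
```

The fit is perfect on the history and still wrong about the future, because nothing in the training data described the changed conditions.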

Prescriptive Analytics: What Should We Do

Prescriptive analytics is the most advanced and least common form. It goes beyond predicting what will happen to recommending what action to take, often through optimization algorithms or simulation. Supply chain optimization — computing the ideal inventory level across hundreds of warehouses to minimize cost while meeting service level targets — is a classic prescriptive analytics application.

Dynamic pricing at airlines, where prices shift in real time to maximize revenue across available seats, is another.

Amazon's pricing engine reportedly adjusts prices on millions of products tens of millions of times per day, responding to competitor prices, demand signals, stock levels, and margin targets simultaneously. That is prescriptive analytics operating at scale: not just describing or predicting but continuously computing and executing the optimal action.
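As a rough illustration of the prescriptive step, the sketch below picks a price by searching candidate prices against an assumed demand curve and a stock limit. It is not how any real pricing engine works; the linear demand function, its parameters, and the names `expected_demand` and `best_price` are assumptions made for the example.

```python
def expected_demand(price, base=1000.0, sensitivity=8.0):
    """Hypothetical linear demand curve: units sold fall as price rises."""
    return max(0.0, base - sensitivity * price)

def best_price(candidates, unit_cost, stock):
    """Pick the candidate price with the highest profit, given limited stock."""
    def profit(price):
        units = min(expected_demand(price), stock)
        return (price - unit_cost) * units
    return max(candidates, key=profit)

candidate_prices = range(20, 121, 5)   # 20, 25, ..., 120
recommended = best_price(candidate_prices, unit_cost=20.0, stock=400)
```

The predictive piece (the demand curve) feeds an optimization that returns an action rather than a forecast. Production systems replace the grid search with proper optimization and re-estimate demand continuously.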

"Data scientist is the sexiest job of the 21st century." — DJ Patil

Data Analytics vs. Data Science vs. Business Intelligence

These three terms are often used interchangeably and they do overlap, but understanding the distinctions helps clarify what kind of capability an organization is building.

Business intelligence (BI) is the older category. BI tools — Crystal Reports in the 1990s, Cognos, MicroStrategy, and eventually Tableau and Power BI — are designed to give business users access to structured, pre-defined reports and dashboards. BI is primarily about descriptive analytics: giving the right people access to the right metrics without requiring them to write code.

A finance team using a Power BI dashboard to track monthly actuals against budget is doing business intelligence.

Data analytics sits above BI in analytical sophistication. Data analysts go beyond pre-defined reports to explore data, test hypotheses, and answer questions that require custom analysis. They combine data from multiple sources, apply statistical methods, and often need to write SQL queries or Python code to answer questions that no pre-built report covers. Analytics is investigative where BI is observational.

Data science is broader and more experimental. Data scientists build new models, develop novel algorithms, and explore data to discover patterns that were not known to exist. A data scientist might spend weeks exploring whether customer support ticket language can predict which customers are about to churn — an open-ended, research-style question with an uncertain answer.

Data science work often involves more original code, more statistical sophistication, and more tolerance for inconclusive results than analytics.

In practice, the boundaries are fuzzy. Many organizations use these titles interchangeably. The useful question is not which label applies but what kind of work is needed: Is the organization primarily trying to describe and monitor (BI)? Investigate causes and test hypotheses (analytics)? Build novel predictive capability (data science)? The answer determines the skills, tools, and processes to invest in.

The Analytics Stack

Modern data analytics infrastructure is often described as a stack — layers of technology that collectively move data from its source to useful insights.

Data collection sits at the bottom. Data enters from operational systems — CRM platforms, e-commerce databases, product event logging, financial systems, third-party data providers — and needs to be brought together into an environment where it can be analyzed. This movement of data is called Extract, Transform, Load (ETL) or, increasingly, Extract, Load, Transform (ELT), where raw data is loaded first and transformed in the analytics environment.
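The ELT pattern described above can be sketched with Python's built-in `sqlite3` module standing in for a warehouse: raw rows are loaded untouched, then cleaned with SQL inside the database. The table names, columns, and sample rows are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Extract + Load: land the raw records as-is (hypothetical source rows).
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1", "19.99", "us"), ("2", "5.00", "US "), ("3", "12.50", "de")],
)

# Transform: fix types and formatting inside the analytics database.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           CAST(amount AS REAL)      AS amount,
           UPPER(TRIM(country))      AS country
    FROM raw_orders
    """
)

revenue_by_country = dict(
    conn.execute(
        "SELECT country, ROUND(SUM(amount), 2) FROM orders "
        "GROUP BY country ORDER BY country"
    ).fetchall()
)
```

Keeping `raw_orders` intact is the practical advantage of ELT: if the cleaning logic turns out to be wrong, the transformation can be rerun without going back to the source system.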

Data storage in the analytics context typically means a data warehouse or data lake. A data warehouse is a structured repository optimized for analytical queries; Snowflake, Google BigQuery, and Amazon Redshift are among the dominant modern options. A data lake stores raw, unstructured, or semi-structured data and defers structuring until the data is read (often called schema-on-read). The lines between these approaches have blurred considerably as modern warehouses handle semi-structured data and data lake platforms have added SQL query capabilities.

Data transformation takes raw loaded data and shapes it into reliable, consistent tables and views that analysts can trust. The open-source tool dbt (data build tool) has become the standard for this layer, allowing analytics engineers to write SQL-based transformations as version-controlled code with automated testing. Before dbt normalized this practice, transformation logic was often scattered across ad hoc scripts, making it difficult to know whether any given number was reliable.

Data processing and analysis is where analysts work: writing SQL queries, building Python notebooks, running statistical tests. This is the layer where questions get answered.

Data visualization and presentation takes the results and communicates them. Tableau, Power BI, and Looker are the dominant business intelligence and visualization tools. Jupyter Notebooks and Streamlit serve the analyst and data science community. The output might be an executive dashboard, an automated report, or a one-time analysis shared in a presentation.

Data Quality: The Hidden Problem

"What gets measured gets managed." — Peter Drucker

The most important and least glamorous aspect of data analytics is data quality. The principle is old and often stated: garbage in, garbage out. It is consistently underestimated because the problems are invisible until they are not.

Dirty data (data with errors, inconsistencies, duplicates, missing values, or ambiguous definitions) produces analyses that are technically rigorous but factually wrong. A company might discover that its customer count dashboard shows 150,000 customers while its finance team's database shows 140,000. Both numbers were derived from the same source with slightly different cleaning logic applied. Which is right? Neither team knows. This is not a tooling failure; the tools are working correctly. The failure is in the underlying data and the inconsistent rules applied to it.

Common data quality problems include duplicate records (the same customer appearing multiple times under slightly different names or email addresses), null values in critical fields (orders with no associated customer ID), definition mismatches (one team's "active customer" means anyone who purchased in the last 90 days; another's means anyone who purchased in the last 12 months), and silent data pipeline failures where a data source stops updating but the dashboard shows stale data that looks current.
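Two of these problems, duplicates and definition mismatches, are enough to make two teams report different customer counts from the same records. The sketch below assumes a hypothetical customer list and an illustrative `active_customers` function; the 90-day and 365-day windows mirror the example definitions above.

```python
from datetime import date

# Hypothetical customer records: (email, last_purchase_date).
customers = [
    ("ana@example.com", date(2024, 5, 20)),
    ("Ana@Example.com ", date(2024, 5, 20)),   # same person, messy email
    ("bo@example.com", date(2023, 11, 2)),
    ("cy@example.com", date(2022, 1, 15)),
]

def active_customers(rows, as_of, window_days, dedupe):
    """Count customers with a purchase inside the window."""
    seen = set()
    for email, last_purchase in rows:
        if (as_of - last_purchase).days > window_days:
            continue
        key = email.strip().lower() if dedupe else email
        seen.add(key)
    return len(seen)

as_of = date(2024, 6, 1)
# One team: 12-month window, no deduplication.
dashboard_count = active_customers(customers, as_of, window_days=365, dedupe=False)
# Another team: 90-day window, emails normalized before counting.
finance_count = active_customers(customers, as_of, window_days=90, dedupe=True)
```

Neither function call is buggy. The counts differ because the rules differ, which is why documented metric definitions matter as much as clean inputs.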

McKinsey research estimated that companies spend as much as 60 to 80 percent of analytics time on data preparation and cleaning rather than actual analysis. Gartner has estimated that poor data quality costs organizations an average of $12.9 million per year. These costs are largely invisible because bad data does not announce itself — it just produces decisions that are made on wrong assumptions.

Data quality requires deliberate investment: automated data tests that catch problems before they reach analysts, clear data ownership where someone is accountable for each data source, documented definitions of key metrics, and regular audits. The dbt framework has popularized automated data testing in transformation pipelines, allowing teams to specify expectations about their data (this field should never be null; this metric should never be negative; the count here should approximately match this count elsewhere) and get alerted when those expectations are violated.
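The three kinds of expectation mentioned above (not null, not negative, counts roughly matching) can be expressed as plain checks. This is a Python stand-in for the idea, not dbt's own syntax; `run_data_tests`, the row format, and the 5 percent tolerance are assumptions made for illustration.

```python
def run_data_tests(rows, reference_count, tolerance=0.05):
    """Return a list of human-readable failures; an empty list means all passed."""
    failures = []
    for i, row in enumerate(rows):
        if row.get("customer_id") is None:
            failures.append(f"row {i}: customer_id is null")
        if row.get("amount") is not None and row["amount"] < 0:
            failures.append(f"row {i}: amount is negative")
    # Row count should approximately match the count reported elsewhere.
    if abs(len(rows) - reference_count) > tolerance * reference_count:
        failures.append(
            f"row count {len(rows)} differs from reference {reference_count}"
        )
    return failures

# Hypothetical order rows with two deliberate problems.
orders = [
    {"customer_id": 1, "amount": 20.0},
    {"customer_id": None, "amount": 5.0},
    {"customer_id": 3, "amount": -4.0},
]
failures = run_data_tests(orders, reference_count=3)
```

Run on a schedule, checks like these turn silent data problems into visible alerts before the numbers reach a dashboard.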

How Companies Actually Use Analytics

The Moneyball story — how Billy Beane's Oakland Athletics used statistical analysis of player performance metrics like on-base percentage to find undervalued players that traditional scouting dismissed — is probably the best-known example of analytics changing an industry. The 2011 film and Michael Lewis's book brought the concept of data-driven decision making to a general audience.

What made the A's strategy effective was not that their data was better than other teams' data; it was that they were asking different questions of data that existed but was being underused.

Netflix's content strategy has already been mentioned, but the company uses analytics throughout its product, not just in commissioning decisions. Its recommendation algorithm — which drives 80 percent of viewer activity, according to the company's own data — uses watch history, browse behavior, search patterns, ratings, and device type to surface content.

Netflix has also used analytics to determine optimal thumbnail images for each title based on which thumbnails different user segments click more often, sometimes showing different images for the same show to different users.
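The thumbnail idea reduces to comparing click-through rates per segment. The sketch below is a simplified illustration with a hypothetical impression log and segment names; it does not represent Netflix's actual system, which is not described in detail here.

```python
from collections import defaultdict

# Hypothetical impression log: (user_segment, thumbnail_id, clicked).
impressions = [
    ("drama_fans", "A", True), ("drama_fans", "A", False),
    ("drama_fans", "B", False), ("drama_fans", "B", False),
    ("comedy_fans", "A", False), ("comedy_fans", "A", False),
    ("comedy_fans", "B", True), ("comedy_fans", "B", True),
]

def best_thumbnail_per_segment(log):
    """Return the thumbnail with the highest click-through rate per segment."""
    stats = defaultdict(lambda: [0, 0])   # (segment, thumb) -> [clicks, views]
    for segment, thumb, clicked in log:
        stats[(segment, thumb)][0] += int(clicked)
        stats[(segment, thumb)][1] += 1
    best = {}
    for (segment, thumb), (clicks, views) in stats.items():
        rate = clicks / views
        if segment not in best or rate > best[segment][1]:
            best[segment] = (thumb, rate)
    return {segment: thumb for segment, (thumb, _) in best.items()}

winners = best_thumbnail_per_segment(impressions)
```

With samples this small the differences would not be statistically meaningful; a real system would need far more impressions and a significance check before switching images.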

Amazon uses prescriptive analytics in its pricing engine, described earlier, but also in its supply chain. The company's "anticipatory shipping" patent describes shipping products to regional fulfillment centers before customers have ordered them, based on predictive models of likely demand. The goal is to reduce delivery time by pre-positioning inventory closer to where predicted orders will originate.

Google's advertising business is fundamentally an analytics product: the real-time auction system that determines which ad to show each user relies on prediction models that estimate the probability a given user will click a given ad, updated with every new signal in real time.

Roles and Career Paths

The data field has differentiated into several distinct roles as organizations have built more sophisticated analytics functions.

Data analysts are the most common entry point into the field. They focus on answering business questions with existing data, writing SQL queries, building dashboards, and communicating findings to business stakeholders. Strong SQL skills, proficiency with a BI tool, and the ability to translate analytical findings into business language are the core competencies.

Entry-level data analyst roles are accessible with a solid grounding in statistics, SQL, and one visualization tool, without requiring programming beyond basic Python or R.

Analytics engineers bridge the gap between data engineers (who build infrastructure) and data analysts (who use it). They own the data transformation layer, building the reliable, well-tested data models that analysts query. The rise of dbt did much to establish this role, which requires stronger technical skills than a traditional data analyst position but less infrastructure engineering expertise than a data engineering one.

Data scientists build predictive and prescriptive models. Strong programming skills in Python or R, fluency in machine learning concepts, and statistical depth are typical requirements. Data science roles tend to require graduate-level education more often than analytics roles, though this is changing as the field matures and online education improves.

Data engineers build and maintain the pipelines, databases, and infrastructure that move data from sources to the analytics environment. This is a software engineering role with a data specialization, typically requiring strong programming skills and knowledge of distributed systems.

Common Organizational Mistakes

Analysis paralysis is the phenomenon where access to more data slows decision-making rather than accelerating it. Organizations that have invested in analytics capability sometimes find that every decision triggers a request for more analysis, more segmentation, more confidence intervals, until the window for action has closed. The antidote is setting analytical standards in advance — defining what level of confidence justifies a decision of a given size — rather than pursuing certainty as an open-ended goal.

Vanity metrics are measurements that look impressive and feel good but do not actually inform decisions. Total registered users (rather than active users) is a classic example. Page views (rather than time-on-page or conversion rate) is another. An organization tracking vanity metrics may feel data-driven while systematically avoiding the numbers that would challenge comfortable narratives.

The most consequential mistake is not acting on insights. Many organizations invest in analytics capability, generate findings, and then fail to connect those findings to people with decision-making authority or to a process that translates findings into action. An analysis that concludes with a presentation deck and no decision is not analytics; it is reporting theater.

Building the organizational processes that route findings to decisions is often harder than building the analytics capability itself.

How to Build an Analytics Culture

An analytics culture is one in which decisions are consistently tested against evidence, where data fluency is expected of managers, not just analysts, and where disagreements are resolved by identifying what data would answer the question rather than by seniority.

Google's internal culture, as described by former Senior Vice President of People Operations Laszlo Bock in his book Work Rules!, used experimentation extensively, running controlled trials on HR policies like performance review formats or manager coaching programs to measure actual effects. This brought scientific rigor to decisions that most organizations make on tradition and intuition.

The prerequisites for an analytics culture are fewer than organizations typically assume. Leadership needs to visibly use data when making decisions — not as decoration after decisions made intuitively, but as genuine inputs. Metrics need to be clearly defined and consistently calculated across teams. Analysts need access to decision-makers, not just the ability to post dashboards on a portal no one visits.

And there needs to be organizational tolerance for findings that challenge existing strategies, because an analytics culture that punishes inconvenient findings quickly produces findings that are convenient and useless.

Practical Takeaways

Starting with a question rather than data is the single most important principle for organizations building analytics capability. The question "what data do we have and what can we analyze?" produces dashboards full of numbers without action. The question "what are the three decisions we make most often that we most wish we had better information for?" produces analytics capability that drives actual choices.

Data quality investment always returns more than additional analytical sophistication applied to poor-quality data. An organization running simple descriptive analytics on clean, well-defined, reliably updated data will outperform one running sophisticated machine learning on dirty data.

The organizational and process work around analytics — connecting findings to decision-makers, establishing clear metric definitions, building habits of evidence-based discussion — is typically harder and more valuable than the technical work. The tools are commoditized; the culture is the scarce resource.

Analytics capability is built incrementally. Few organizations begin with real-time prescriptive systems. Most begin with descriptive dashboards, develop diagnostic competence, and add predictive capability as data maturity grows. The progression is not a race; it is a sequence, and trying to skip stages typically produces expensive failures.

What Research Shows About Data-Driven Decision Making

Academic research into analytics ROI has produced consistent, quantifiable findings over the past two decades. A landmark 2011 study by Erik Brynjolfsson, Lorin Hitt, and Heekyung Kim at MIT Sloan School of Management found that companies in the top third of their industry for data-driven decision making were, on average, 5 percent more productive and 6 percent more profitable than their competitors.

The study, circulated as a working paper in 2011, examined 179 large publicly traded firms and controlled for industry, capital investment, and other factors, providing some of the earliest systematic evidence linking data-driven decision making to firm performance.

Thomas Davenport, who coined the phrase "competing on analytics" in his 2006 Harvard Business Review article, followed up with longitudinal research tracking "analytics competitors," companies that systematically invest in analytics capability. He found that organizations he classified as analytical leaders outperformed their peers on revenue growth, profitability, and shareholder returns over sustained periods.

His 2007 book with Jeanne Harris, Competing on Analytics, documented firms like Capital One, Harrah's Entertainment, and UPS that built analytics as a core competency rather than a supporting function.

DJ Patil, who served as the first U.S. Chief Data Scientist under President Obama from 2015 to 2017, has argued that the most underappreciated finding in analytics research is how much organizational culture matters relative to technical tooling. In his work at the White House Office of Science and Technology Policy, Patil found that government agencies with a culture of data literacy and evidence-based policymaking produced better outcomes regardless of budget or technology sophistication.

His assessment echoes the principle widely attributed to W. Edwards Deming, "In God we trust; all others must bring data," which Deming applied not merely as a slogan but as an operating doctrine at organizations including Ford Motor Company in the 1980s, where his data-driven quality methods contributed to the company's return to profitability in 1983 after roughly $3 billion in cumulative losses between 1979 and 1982.

McKinsey's 2016 report The Age of Analytics found that sectors making the most aggressive analytics investments (finance, insurance, and retail) were realizing the largest productivity gains, with the gap between analytics leaders and laggards widening year over year. The report specifically noted that talent constraints, not technology, were the primary bottleneck: qualified data analysts and scientists were scarcer than the infrastructure to employ them.

Real-World Case Studies in Analytics

Netflix: Content Investment as Analytics Product. The House of Cards commissioning story, recounted at the top of this article, represents only one layer of Netflix's analytics infrastructure. A 2016 presentation by Netflix's product and innovation team revealed that the company runs approximately 250 A/B tests at any given time, using analytics to optimize everything from streaming bitrate algorithms to the color contrast of play buttons.

Xavier Amatriain, who led recommendation algorithms at Netflix from 2010 to 2013, published research showing that their collaborative filtering approach measurably reduced subscriber churn. Netflix estimated the recommendation system's annual value at over $1 billion by 2016, primarily through reduced cancellation rates driven by keeping subscribers engaged with relevant content.

UPS: ORION and the Analytics of Routing. UPS's On-Road Integrated Optimization and Navigation (ORION) system, deployed beginning in 2012, represents one of the largest applied analytics projects in logistics history. The system analyzes over 250 million address data points and optimizes delivery routes in real time.

According to UPS's published figures, ORION saves approximately 100 million miles of driving per year, reducing fuel consumption by roughly 10 million gallons annually. Jack Levis, the mathematician who led ORION's development, has described the project as requiring more than a decade of foundational analytics work before the optimization system could be deployed, specifically noting that descriptive and diagnostic analytics had to be mature before prescriptive optimization was viable.
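To give a feel for what route optimization means, here is a toy sketch using the nearest-neighbor heuristic, a textbook technique swapped in for illustration. It is not ORION's algorithm, which the sources above do not describe; the depot, stop coordinates, and function names are hypothetical.

```python
from math import dist

def nearest_neighbor_route(depot, stops):
    """Greedy heuristic: always drive to the closest unvisited stop."""
    route, current, remaining = [], depot, list(stops)
    while remaining:
        nxt = min(remaining, key=lambda stop: dist(current, stop))
        remaining.remove(nxt)
        route.append(nxt)
        current = nxt
    return route

def route_length(depot, route):
    """Total distance from the depot through every stop and back."""
    points = [depot, *route, depot]
    return sum(dist(a, b) for a, b in zip(points, points[1:]))

depot = (0, 0)
stops = [(5, 0), (1, 0), (6, 0), (2, 0)]   # hypothetical coordinates
as_listed = route_length(depot, stops)
optimized = route_length(depot, nearest_neighbor_route(depot, stops))
```

Even this crude heuristic shortens the trip considerably on the sample data. Real routing must also respect delivery windows, traffic, and driver rules, which is part of why the groundwork took so long.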

Target: Predictive Analytics and the Pregnancy Prediction Model. Target's pregnancy prediction model, developed by statistician Andrew Pole around 2002 and made public through Charles Duhigg's 2012 New York Times investigation, assigned each customer a "pregnancy prediction score" based on purchasing patterns: increased purchases of unscented lotion, certain vitamin supplements, and specific food items correlated with early pregnancy. Target used these predictions to send targeted coupons timed to high-spending life moments.

The model reportedly increased baby-product sales substantially before the story became public. The case is now taught in business schools as both an analytics success story and an ethical cautionary tale about the gap between analytical capability and social license.

Amazon: The Flywheel Powered by Data. Amazon's analytics operation spans pricing (which adjusts tens of millions of product prices daily based on competitor data, demand signals, and margin targets), inventory placement (pre-positioning stock based on demand prediction models), and fulfillment routing (selecting among its now-hundreds of fulfillment centers to minimize delivery time). Werner Vogels, Amazon's CTO, has described their approach as "metrics-driven everything": the company measures not only customer-facing outcomes but internal process metrics at extraordinary granularity.

Amazon's anticipatory shipping patent (US Patent 8,615,473, granted 2013) describes shipping products to regional hubs before customers have ordered them, based on predictive confidence thresholds. It is an example of prescriptive analytics designed for commercial scale.

Common Analytics Mistakes and What Evidence Shows

Mistake 1: Measuring What Is Easy Rather Than What Matters. W. Edwards Deming was blunt about this failure: "It is wrong to suppose that if you can't measure it, you can't manage it - a costly myth." Organizations frequently default to measuring whatever is easy to count rather than what actually drives outcomes. Page views, follower counts, number of features shipped, and number of employees trained are all easy to count and frequently tracked as if they were performance metrics.

The research on this is consistent: organizations that define metrics starting from desired business outcomes and work backward to what to measure outperform those that start with available data. Avinash Kaushik, Google's former digital marketing evangelist, popularized the distinction between "vanity metrics" and "actionable metrics": the former make dashboards look impressive without informing decisions.

Mistake 2: The HiPPO Problem. The term HiPPO, popularized in web analytics and experimentation circles by practitioners including Avinash Kaushik and Ron Kohavi, describes a familiar failure: the Highest-Paid Person's Opinion wins over data when results are inconvenient. Studies of A/B testing at technology companies found that teams with senior executives who routinely override negative experimental results abandon data-driven processes within 18 months.

Ron Kohavi, who ran experimentation programs at Amazon and Microsoft, documented in his book Trustworthy Online Controlled Experiments that approximately two-thirds of product changes that executives were confident would improve metrics actually showed no improvement or negative results in controlled tests. Companies that institutionalize executive override of data see A/B testing programs atrophy.
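What a controlled test adds over executive confidence is a measure of whether an observed difference could plausibly be noise. The sketch below implements a standard two-proportion z-test with the standard library; the conversion counts are hypothetical, and real experimentation platforms apply additional safeguards not shown here.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Standard normal CDF computed via the error function.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value

# Hypothetical experiment: control (A) vs. a change the team expected to win (B).
z, p_value = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=510, n_b=10_000)
significant = p_value < 0.05
```

In this example the variant converts slightly better, yet the difference is well within what chance alone would produce, so the data does not support shipping it on performance grounds.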

Mistake 3: Building Analytics Before Defining Questions. An annual survey by NewVantage Partners tracks how Fortune 500 executives assess the outcomes of their data and AI investments. In 2023, 74 percent of executives reported that data initiatives had not met their stated goals. The most commonly cited reason was building infrastructure and tooling before establishing the specific decisions the organization needed to make.

This sequence (build first, ask questions later) produces what data practitioners call "data swamps": large, expensive repositories of data that nobody is asking useful questions of. The corrective, advocated by DJ Patil and consistently echoed in the academic literature, is question-first analytics: identify the three to five most consequential decisions the organization makes repeatedly and design analytics capability specifically to inform those decisions.

References

- Davenport, T. H. & Harris, J. G. (2007). Competing on Analytics: The New Science of Winning. Harvard Business School Press.

- Provost, F. & Fawcett, T. (2013). Data Science for Business: What You Need to Know about Data Mining and Data-Analytic Thinking. O'Reilly Media.

- Gartner. (2023). "Poor data quality costs organizations an average $12.9 million annually." Gartner Press Release. Gartner, Inc.

- Lewis, M. (2003). Moneyball: The Art of Winning an Unfair Game. W. W. Norton & Company.

- McKinsey Global Institute. (2011). Big Data: The Next Frontier for Innovation, Competition, and Productivity. McKinsey & Company.

- DAMA International. (2017). DAMA-DMBOK: Data Management Body of Knowledge (2nd ed.). Technics Publications.

Frequently Asked Questions

What is data analytics in simple terms?

Data analytics is the practice of examining raw data to find patterns, draw conclusions, and help make better decisions. It involves collecting data, cleaning and organizing it, applying statistical and computational techniques, and presenting the results in a way that guides action. Organizations use data analytics to understand what happened in the past, diagnose why it happened, predict what will happen next, and determine what actions to take. At its core, data analytics is about turning numbers into insight and insight into decisions.

What are the four types of data analytics?

Descriptive analytics examines historical data to summarize what happened, like last quarter’s sales figures or website traffic trends. Diagnostic analytics goes deeper to explain why something happened, like identifying which factors caused a sales decline. Predictive analytics uses statistical models and machine learning to forecast what is likely to happen next, like predicting customer churn. Prescriptive analytics recommends actions to take based on predictive models, like suggesting which customers to target with retention offers. Complexity and sophistication increase from descriptive to prescriptive, and most organizations start with descriptive analytics before building toward more advanced capabilities.

What is the difference between data analytics and data science?

Data analytics focuses on examining existing data to answer specific business questions, typically using established statistical methods and business intelligence tools. Data science is broader and more research-oriented: it involves building new models, developing algorithms, and exploring data to surface insights that nobody had thought to look for. Data scientists often write more original code and apply more advanced machine learning. In practice the boundaries blur, but data analytics tends to be more operationally focused while data science is more experimental. Both roles are valuable, and organizations at different levels of maturity need different mixes of each.

What tools do data analysts use?

Excel remains widely used for basic analysis despite its limitations with large datasets. SQL is essential for querying databases and is one of the most valuable skills a data analyst can have. Python and R are the dominant programming languages for statistical analysis and machine learning. Tableau, Power BI, and Looker are popular business intelligence tools for creating dashboards and visualizations. Google Analytics and Mixpanel handle web and product analytics. Jupyter Notebooks provide an interactive environment for data exploration. The right tools depend on the organization’s data infrastructure, the analyst’s focus area, and the complexity of the questions being answered.
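To give a flavor of the kind of question SQL answers, here is a small sketch that runs an aggregate query against an in-memory SQLite database through Python's standard sqlite3 module. The orders table, its columns, and its rows are hypothetical and exist only for this example.

```python
import sqlite3

# Build a throwaway in-memory database with a hypothetical orders table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (channel TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders (channel, amount) VALUES (?, ?)",
    [("email", 40.0), ("search", 25.0), ("email", 60.0), ("social", 15.0)],
)

# Order count and total revenue per channel, highest revenue first.
rows = conn.execute(
    """
    SELECT channel, COUNT(*) AS order_count, SUM(amount) AS revenue
    FROM orders
    GROUP BY channel
    ORDER BY revenue DESC
    """
).fetchall()
conn.close()

for channel, order_count, revenue in rows:
    print(f"{channel}: {order_count} orders, {revenue:.2f} revenue")
```

The same GROUP BY pattern carries over to production databases, although table names, column types, and SQL dialect details will differ.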

Why is data quality so important in analytics?

The quality of analytical outputs depends directly on the quality of the underlying data. Dirty data, meaning data with errors, duplicates, missing values, or inconsistent formatting, produces misleading conclusions even when the analytical methods applied are perfectly correct. A famous principle in computing summarizes this: garbage in, garbage out. Organizations that rely on poor-quality data make confident-sounding decisions on faulty foundations. Data cleaning and validation are often reported to consume the majority of a data analyst’s time, and they are essential prerequisites for any meaningful analysis.
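A small sketch can make "garbage in, garbage out" concrete. The records below are invented, and the cleaning rules (trim and lowercase the email, drop rows with a missing amount, keep the first row per email) are assumptions chosen for illustration. Real pipelines need rules that fit their own data.

```python
from statistics import mean

# Hypothetical raw records containing a duplicate, inconsistent formatting,
# and a missing value.
raw_records = [
    {"email": "ana@example.com", "amount": "120.00"},
    {"email": " ANA@example.com ", "amount": "120.00"},  # same customer, messy formatting
    {"email": "ben@example.com", "amount": ""},          # missing amount
    {"email": "cy@example.com", "amount": "30.00"},
]


def clean(records):
    """Normalize emails, drop rows without an amount, and remove duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        email = record["email"].strip().lower()
        amount_text = record["amount"].strip()
        if not amount_text:
            continue  # skip rows with a missing amount
        if email in seen:
            continue  # skip rows for a customer already kept
        seen.add(email)
        cleaned.append({"email": email, "amount": float(amount_text)})
    return cleaned


# Naive analysis: treats the missing amount as zero and counts the duplicate.
naive_average = mean(float(r["amount"] or 0) for r in raw_records)

# The same calculation after cleaning.
clean_records = clean(raw_records)
clean_average = mean(r["amount"] for r in clean_records)

print(f"Naive average order value: {naive_average:.2f}")
print(f"Cleaned average order value: {clean_average:.2f}")
```

The arithmetic is identical in both cases. Only the inputs differ, which is why the two averages disagree.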

What roles exist in the data analytics field?

Data analysts focus on examining existing data to answer business questions, creating reports, and building dashboards. Data scientists develop predictive models, apply machine learning, and explore data to find novel patterns. Data engineers build and maintain the pipelines, databases, and infrastructure that make data available for analysis. Business intelligence analysts specialize in reporting and visualization for business stakeholders. Analytics engineers bridge engineering and analytics by building reliable data transformations. These roles overlap in smaller organizations, where one person may cover multiple functions, and become more specialized as data teams grow.

How do businesses use data analytics in practice?

Retail companies analyze customer purchase history to optimize inventory and personalize marketing. Healthcare organizations analyze patient data to identify high-risk populations and improve treatment outcomes. Financial institutions analyze transaction patterns to detect fraud and assess credit risk. Marketing teams analyze campaign performance to optimize spending across channels. Operations teams analyze supply chain data to reduce costs and improve delivery times. Human resources teams analyze workforce data to reduce turnover and improve hiring. The applications span every industry and function, and competitive pressure has made analytics a core business competency.

What are the most common mistakes organizations make with data analytics?

Collecting data without a clear question to answer is the most common mistake, resulting in dashboards full of numbers that nobody acts on. Confusing correlation with causation leads to strategies built on spurious relationships in the data. Neglecting data quality means analysis that looks rigorous but rests on flawed inputs. Analyzing the past without developing predictive capabilities means organizations are always reacting rather than anticipating. Failing to connect analytical findings to decision-makers who can act on them leaves insights unused. The organizational and process failures around analytics are typically more consequential than the technical ones.

Can small businesses benefit from data analytics?

Absolutely. Small businesses often have access to more actionable data than they realize, from sales records and customer emails to website traffic and social media engagement. Even basic analytics, like identifying which products generate the most margin or which marketing channels bring the most customers, can drive significant decisions. Google Analytics, Shopify analytics, and simple spreadsheet analysis give small business owners powerful insights without requiring data science expertise or expensive tooling. The key is to start with specific questions you want to answer rather than collecting data for its own sake.
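The margin question mentioned above needs nothing more than a few lines of code or a spreadsheet. The sketch below assumes a hypothetical list of sales records with made-up products, prices, and costs, and ranks products by total margin.

```python
from collections import defaultdict

# Hypothetical sales records: (product, units sold, unit price, unit cost).
sales = [
    ("mug", 40, 12.0, 5.0),
    ("tote bag", 15, 20.0, 8.0),
    ("mug", 10, 12.0, 5.0),
    ("poster", 30, 8.0, 6.0),
]

# Sum the margin (units * (price - cost)) for each product.
margin_by_product = defaultdict(float)
for product, units, price, cost in sales:
    margin_by_product[product] += units * (price - cost)

# Rank products from highest to lowest total margin.
ranking = sorted(margin_by_product.items(), key=lambda item: item[1], reverse=True)

for product, margin in ranking:
    print(f"{product}: {margin:.2f}")
```

In this made-up data the poster sells in reasonable volume but contributes the least margin, which is the kind of finding that unit counts alone would hide.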

What is the return on investment of data analytics?

Studies of data-driven organizations generally find that they outperform their peers on key financial metrics. McKinsey research has found that top-quartile data and analytics adopters generate two to three times higher returns on their analytics investments than lower-quartile adopters. ROI from analytics comes through multiple channels: better marketing spend allocation, reduced operational waste, improved pricing decisions, faster identification of product issues, and more effective targeting of high-value customers. The challenge is that this ROI is often diffuse and takes time to materialize, which makes it harder to attribute than the return on a single project investment.