Imagine writing a long document and hitting save over the same file every time you make a change. If you accidentally delete several pages, or if you want to see what the document looked like three weeks ago, or if a colleague overwrites your work while you are both editing at the same time -- you have no recourse. The history is gone.

This is how software was once developed. Version control is the practice and tooling that solved this problem, and it is now so fundamental to professional software development that a developer who does not use version control is effectively not practicing the craft.

But version control matters far beyond individual developers working alone. It is the foundation of collaboration, the audit trail of software history, the safety net that makes experimentation safe, and the infrastructure that enables modern deployment practices.

According to the 2023 Stack Overflow Developer Survey -- the largest annual survey of software professionals, covering over 90,000 respondents -- 97.6 percent of developers use Git as their version control system. This near-universal adoption reflects not just professional convention but genuine utility: version control is one of the clearest examples in technology of a tool that became indispensable because it solved real, pervasive problems.

What Version Control Is

Version control (also called source control or revision control) is a system for tracking changes to files over time. Every change -- what changed, when, by whom, and why -- is recorded. Any previous state of the codebase can be retrieved. Multiple people can work on the same codebase without destroying each other's work.

Modern version control systems also enable branching: creating separate lines of development that diverge from a common starting point and can later be merged back together. This makes it possible to work on a new feature without disrupting the stable codebase, or to fix a bug in production code without disturbing in-progress development work.

What Version Control Enables

- History: See the complete timeline of every change ever made to the codebase

- Rollback: Revert any file or the entire codebase to any previous state

- Collaboration: Multiple developers working on the same codebase without destructive conflicts

- Branching: Parallel lines of development for features, bug fixes, and experiments

- Attribution: Know who changed what and when

- Context: Commit messages explain why changes were made, not just what changed

- Code review: Review changes before they are incorporated into the main codebase

- Continuous integration: Automated testing triggered by code changes

- Audit trail: Compliance and security audits that require knowing what changed when, and who authorized it

A Brief History: From RCS to Git

The history of version control follows the broader evolution of software development practices.

RCS (Revision Control System), developed by Walter Tichy at Purdue University in the early 1980s, tracked changes to individual files locally -- useful for a single developer but inadequate for team work. RCS introduced the concept of storing deltas (differences) rather than complete file copies, an efficiency innovation that influenced all subsequent systems.

CVS (Concurrent Versions System), introduced in 1986 by Dick Grune, was the first widely used system that allowed multiple developers to work on the same code simultaneously, though its merge and branching capabilities were limited and fragile. CVS became the dominant tool for open-source development through the 1990s; the Linux kernel used CVS until 2002.

SVN (Apache Subversion), released in 2000, improved significantly on CVS with atomic commits, better branching, and improved performance. Collabnet originally developed it specifically to replace CVS; it succeeded, and SVN became the dominant system through the mid-2000s and remains in use in some organizations.

Git, created by Linus Torvalds in 2005, represented a fundamental rethinking of version control architecture. Torvalds wrote Git to replace BitKeeper (a proprietary system the Linux kernel project had been using) after BitKeeper revoked its free license. His stated goal was a system that was "the opposite in every way of CVS." Git is distributed rather than centralized, meaning every developer has a complete copy of the entire repository history on their own machine. Git's branching model is dramatically faster and more flexible than previous systems. It is now the overwhelmingly dominant version control system in professional software development.

How Git Works

Understanding Git requires understanding a handful of core concepts.

The Repository

A repository (or repo) is the container for all the code, history, and metadata of a project. Every repository contains the full history of every change ever made.

With Git's distributed architecture, every developer has a complete local copy of the repository. There is no single "master" copy on a server -- the repository on the server is architecturally identical to the one on any developer's laptop. The server version is treated as authoritative by convention, not by technical requirement.

This design has a practical consequence often underappreciated by new Git users: the vast majority of Git operations -- viewing history, creating branches, committing code, computing diffs -- happen entirely locally, with no network access required. The speed advantage over centralized systems is dramatic.

Commits

A commit is a snapshot of the codebase at a specific point in time. Each commit records:

- The changes made to files

- The author of the changes

- The timestamp

- A commit message describing why the change was made

- A unique identifier (a SHA-1 hash)

- A reference to the parent commit(s) it follows

Commits are immutable -- once created, they cannot be modified (only replaced by new commits). The chain of commits forms the history of the project.

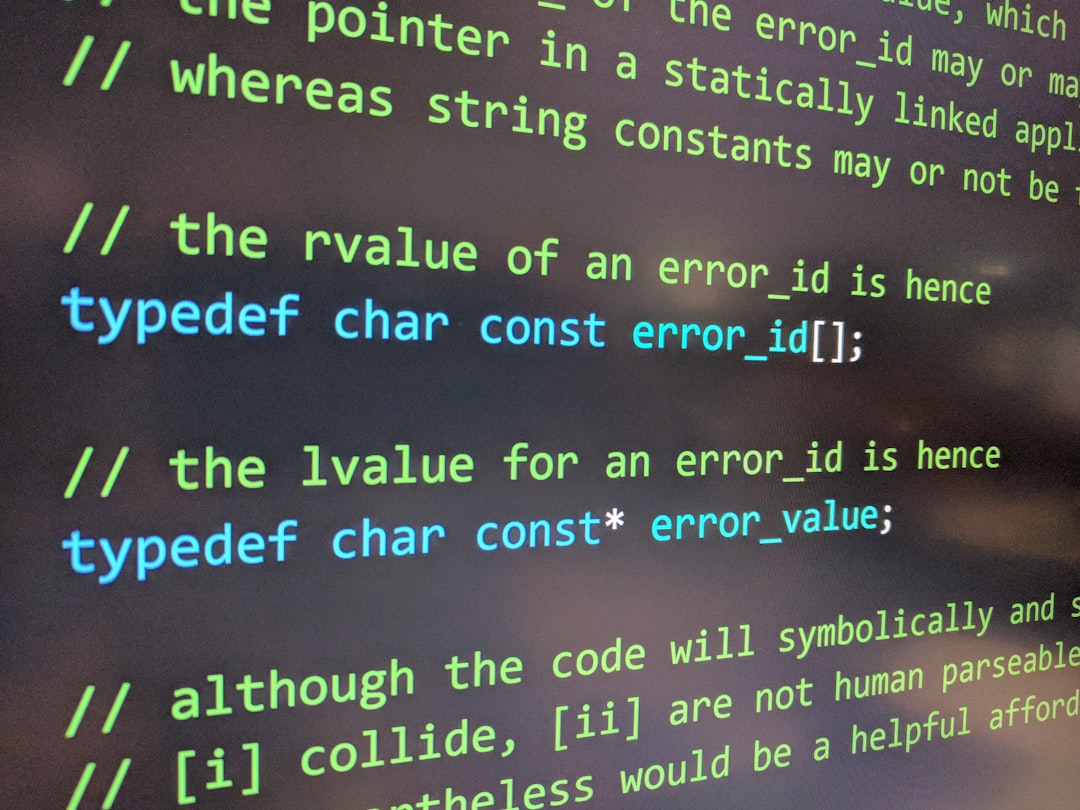

A good commit message is one of the most undervalued practices in software development. Future developers -- including your future self -- will read commit messages to understand why code was written the way it was. The format that has become standard in many organizations: a 50-character or fewer summary line, a blank line, then a more detailed explanation of the why. Chris Beams's widely read essay "How to Write a Git Commit Message" (2014) articulated seven rules that have become informal industry standards, including: use the imperative mood ("Fix bug" not "Fixed bug"), limit the subject line to 50 characters, and explain the problem being solved rather than describing what the code does.

Branches

A branch is a lightweight, movable pointer to a commit. Creating a branch creates a new line of development that starts from the current commit and can diverge independently.

In Git, branching is extremely cheap -- creating a branch takes milliseconds regardless of repository size, because a branch is just a pointer (a file containing a SHA-1 hash). This makes it practical to create a branch for every feature, bug fix, or experiment, and to discard branches that don't pan out without any consequence to the main codebase.

The default branch is typically named main (formerly master). Best practice is to keep this branch always in a working, deployable state.

Merging and Rebasing

When work on a branch is complete, it needs to be integrated back into the main branch. Git provides two primary approaches:

Merging combines the histories of two branches, creating a new "merge commit" that has two parent commits. This preserves the full branching history.

Rebasing replays commits from one branch onto another, creating new commits with the same changes but different parent histories. This produces a linear history but rewrites commit hashes, which can cause problems when work has been shared with others.

Both approaches have legitimate uses and tradeoffs; teams typically standardize on one for consistency.

The Staging Area

Git includes a concept that distinguishes it from many other systems: the staging area (also called the index). Before creating a commit, changes are staged -- explicitly added to the collection that will form the next commit. This allows developers to group related changes into a single coherent commit even when those changes were made over time.

| Git Concept | What It Does | Analogy |

|---|---|---|

| Repository | Contains all code and history | Project folder with full time machine |

| Commit | Snapshot of codebase at a moment | Save point with description |

| Branch | Independent line of development | Parallel timeline |

| Merge | Combine two branch histories | Converge two timelines |

| Rebase | Replay commits onto a new base | Rewrite history linearly |

| Remote | Copy of repo on another machine or server | Shared copy |

| Pull | Fetch changes from remote and merge | Sync incoming changes |

| Push | Send local commits to remote | Sync outgoing changes |

| Clone | Copy entire repository | Duplicate with full history |

| Stash | Temporarily shelve uncommitted changes | Set aside without committing |

Centralized vs. Distributed Version Control

The fundamental architectural difference in modern version control is between centralized and distributed systems.

Centralized systems (CVS, SVN) have a single authoritative server. Developers check out working copies, make changes, and commit to the central server. There is one history. Every operation that involves history (commits, logs, diffs) requires network access.

Distributed systems (Git, Mercurial) give every developer a complete local copy of the entire repository. Developers can commit, branch, view history, and create tags entirely offline. Synchronization with shared repositories happens explicitly through push and pull operations.

The distributed model has several advantages:

- Works offline and performs faster for history operations

- More resilient -- there is no single point of failure

- More flexible branching and merging

- Multiple remote repositories possible (fork model)

Git's distributed architecture also enabled the pull request model that has become central to collaborative software development: a developer pushes their branch to a shared repository and requests that their changes be reviewed and merged, enabling structured code review before changes reach the main branch.

"In open source development, the pull request workflow transformed how thousands of contributors could safely collaborate on the same codebase -- without any central gatekeeper beyond code review itself." -- Widely attributed to the Git/GitHub era of open source development

GitHub, GitLab, and Bitbucket

Git itself is open-source command-line software. The major platforms built on top of it -- GitHub, GitLab, and Bitbucket -- add web interfaces, collaboration tools, and integrated development workflows.

GitHub is the dominant platform, hosting the majority of open-source software and a large portion of professional development work. Microsoft acquired it in 2018 for $7.5 billion. By 2023, GitHub hosted over 420 million repositories and more than 100 million developers. GitHub's pull request workflow and issues system have become the standard model for collaborative development.

GitLab competes primarily in the enterprise space, offering a more complete DevOps platform that includes CI/CD pipelines, issue tracking, and project management in a single product. GitLab offers a self-hosted option that some organizations prefer for data sovereignty reasons -- particularly common in regulated industries (financial services, healthcare) and in countries with data localization requirements. GitLab's 2023 Global DevSecOps Survey found that 57 percent of its enterprise customers cited self-hosting capability as a significant factor in their choice.

Bitbucket (owned by Atlassian) integrates tightly with Jira and other Atlassian products, making it popular in organizations already using the Atlassian ecosystem. Bitbucket's tight Jira integration allows automatic linking of commits to tickets, creating an audit trail that connects code changes to the requirements and decisions that drove them.

The platforms differ in features and pricing, but they all implement the same underlying Git concepts. Skills developed on one platform transfer readily to others.

Git Workflow Strategies

How teams use Git matters as much as the fact that they use it. Several workflow strategies have emerged as standards.

GitFlow

Developed by Vincent Driessen in 2010, GitFlow is a branching model using multiple long-lived branch types:

main: Production-ready code onlydevelop: Integration branch for completed featuresfeature/*: Individual feature developmentrelease/*: Release preparationhotfix/*: Emergency production fixes

GitFlow works well for teams doing infrequent, batched releases with explicit version numbers. It is common in organizations with formal release management processes.

The criticism of GitFlow is that its complexity can slow down development: many branches to manage, complex merge sequences, and a develop branch that can become a bottleneck. Driessen himself noted in a 2020 addendum to the original article that GitFlow is "not the right workflow for all teams" and suggested that simpler workflows may be more appropriate for teams practicing continuous delivery.

GitHub Flow

A simpler alternative: there is one main branch that is always deployable, and all new work happens on short-lived feature branches that are merged directly to main via pull requests. This works well for teams deploying continuously.

Trunk-Based Development

Trunk-based development is the practice of all developers committing to a single main branch (the "trunk") continuously -- multiple times per day -- rather than working on long-lived branches. Features that are not ready to expose are hidden behind feature flags: configuration that controls whether users see a feature.

Research from the DevOps Research and Assessment (DORA) program, published in the annual State of DevOps reports (2019, 2021, 2022), consistently identifies trunk-based development as a practice associated with high-performing development teams. Teams practicing trunk-based development deploy 208 times more frequently and have a 2,604 times faster mean time to restore service than low-performing teams, according to the 2019 DORA report. It forces continuous integration, reduces merge complexity, and enables continuous deployment.

The tradeoff is that it requires strong automated testing and feature flag discipline to maintain stability when everyone is committing to the same branch simultaneously.

| Workflow | Best For | Branch Lifespan | Deployment Cadence |

|---|---|---|---|

| GitFlow | Versioned releases, many parallel features | Weeks-months | Periodic |

| GitHub Flow | Teams deploying frequently | Days | Frequent |

| Trunk-Based Development | High-frequency deployment teams | Hours | Continuous |

| Release Branching | Products with long support windows | Months-years | Per-version |

Version Control Beyond Code

Version control principles apply to any text-based files that change over time.

Infrastructure as Code

The practice of defining servers, networks, and cloud resources in code (Terraform, AWS CloudFormation, Pulumi) means that infrastructure configuration is stored in version-controlled repositories. Changes to infrastructure go through the same review and history processes as application code -- a significant improvement over manually configured servers with no change history.

A 2022 survey by HashiCorp (makers of Terraform) found that 85 percent of infrastructure-as-code practitioners version-controlled their infrastructure code, with 71 percent using pull-request-based review workflows for infrastructure changes -- the same discipline applied to application code, applied to the systems that run it.

Documentation

Documentation written in plain text formats (Markdown, AsciiDoc) can be version controlled alongside the code it describes. This keeps documentation synchronized with code changes and provides the same rollback and collaboration benefits. The practice of treating documentation as code -- subject to the same review and history tracking -- has grown significantly with the rise of developer portal platforms like Backstage and documentation-as-code tools.

Data Pipelines and Analysis

Data science and analytics workflows increasingly use version control for data transformation scripts, model configurations, and analysis notebooks. The reproducibility benefits -- being able to know exactly what code produced what results -- are as valuable in data analysis as in software development.

Tools like DVC (Data Version Control) extend Git concepts to large data files and machine learning models, enabling teams to version not just the code but the data and artifacts that produce a given result. This is increasingly important in regulated industries where model reproducibility is a compliance requirement.

Configuration Files

Application configuration stored in version control provides an audit trail of configuration changes, which is valuable for debugging production issues and understanding system evolution. The practice of GitOps -- using Git pull requests as the mechanism for triggering deployment and configuration changes -- takes this further, making the Git repository the single source of truth for system state.

Common Version Control Mistakes and How to Avoid Them

Learning version control is straightforward; using it well is a professional skill that takes time to develop. Several common mistakes trip up developers at all levels.

Committing Too Infrequently

The instinct to commit only when something is "complete" produces large, hard-to-understand commits that are difficult to review, impossible to bisect when debugging, and painful to revert if something goes wrong. Commits should be small, logical units of change. A useful rule: if the commit message requires "and" to describe what changed, the commit should probably be two commits.

git bisect -- Git's binary search tool for finding which commit introduced a bug -- works dramatically better with many small commits than with a few large ones. A bisect through 30 small commits narrows to the culprit in 5 steps; a bisect through 5 large commits finds the right commit but still requires understanding thousands of lines of changes to find the specific problem.

Poor Commit Messages

"Fixed stuff," "WIP," and "misc changes" are useless to future developers trying to understand the history of a codebase. A good commit message has a concise first line describing what changed and a body (where needed) explaining why. The why is the most valuable part: the code shows what changed; the message explains the reasoning that future developers need to evaluate whether the change is still appropriate.

A study by Dyer, Nguyen, Rajan, and Nguyen (2013), analyzing 23,000 open-source Git repositories, found that commit message quality varied enormously across projects, and that projects with more informative commit messages had measurably lower long-term defect rates -- suggesting that the discipline of explaining changes creates cognitive pressure that improves the quality of those changes.

Committing Secrets to Version Control

API keys, passwords, database credentials, and other secrets accidentally committed to a repository -- especially a public one -- are a serious security incident. Once committed, a secret exists in the repository history even after deletion from the current code. The correct response is to treat the secret as compromised and rotate it immediately. The best prevention is using environment variables and .gitignore to keep secrets out of version control entirely.

GitGuardian's 2023 "State of Secrets Sprawl" report found over 10 million secrets exposed in public GitHub commits in 2022 -- a 67 percent increase year over year. The most common secrets found: Google API keys, AWS keys, and database connection strings. Tools like git-secrets, truffleHog, and built-in GitHub secret scanning help detect and prevent this class of error.

Force-Pushing to Shared Branches

Rewriting history on a branch that other developers are working from causes significant problems: their local histories diverge from the remote, merge conflicts multiply, and team trust erodes. Force-pushing should be reserved for personal branches and handled with caution even there.

Most platforms allow "branch protection rules" that prevent force-pushing to important branches (main, develop, release branches). Enabling these protections is a standard security and collaboration practice.

Avoiding Branches for Fear of Complexity

Some developers, particularly those new to Git, work directly on the main branch because branching feels complex. This fear has less basis in Git than it did in older version control systems -- in Git, branching is cheap and fast. Working without branches forfeits the ability to keep work-in-progress isolated from the stable codebase, makes code review harder, and eliminates the flexibility to switch between tasks without losing work.

The Security Dimension of Version Control

Version control systems are increasingly part of the software supply chain security conversation. Several high-profile incidents have demonstrated the risks:

The SolarWinds attack (2020) involved attackers compromising the build pipeline that compiled software from source code. The attack was sophisticated partly because version control and build processes are trusted by default -- code that passes through version control with proper approvals appears legitimate.

Dependency confusion attacks (documented by Alex Birsan in 2021) exploit the way package managers resolve dependencies from public registries, but understanding and auditing these risks requires inspecting package.json, requirements.txt, and similar files in version-controlled repositories.

Malicious commits to open-source repositories -- including the 2024 XZ Utils backdoor, which was introduced through a sustained social engineering campaign against a project maintainer -- have raised awareness of the need to treat version control history not just as a development tool but as a security artifact.

Best practices include: requiring signed commits (GPG signatures that verify the author's identity), enabling commit verification requirements on important branches, auditing access controls to repositories regularly, and using CODEOWNERS files to require approval from specific individuals for changes to sensitive files.

Why Version Control Matters for Non-Developers

If you work in technology but do not write code, understanding version control is valuable for several reasons:

It shapes how software is built. Conversations about release timelines, feature flags, code reviews, and deployment processes are all grounded in version control concepts.

Incident investigation. When something breaks in production, version control history is often the first place engineers look. "What changed?" is answered by looking at recent commits.

Project understanding. The commit history of a project tells the story of its development in a way that the current codebase alone cannot.

Documentation practices. The version-control-everything mindset that engineers apply to code is increasingly applied to documentation, configuration, and even business logic.

Compliance and audit. Regulated industries require evidence of who approved changes, what was changed, and when. Version control provides this audit trail naturally.

Getting Started

For developers not yet using version control systematically, the starting point is simple:

- Install Git (git-scm.com)

- Learn the essential commands:

git init,git add,git commit,git push,git pull,git branch,git merge,git log - Create an account on GitHub or GitLab

- Apply version control to every project, even personal ones

- Practice branching: create a branch for every new feature or bug fix, even when working alone

The overhead of version control is real but front-loaded. The habit of committing work, writing clear commit messages, and working in branches becomes second nature quickly. The cost of not using version control -- lost work, inability to understand change history, collaboration chaos -- is paid continuously.

Summary

Version control is the practice of systematically recording the history of changes to files, enabling rollback, collaboration, and audit. Git has become the near-universal tool for this practice in professional software development, with 97.6 percent adoption among developers surveyed in 2023. Understanding it requires understanding repositories, commits, branches, and merge strategies.

Beyond the mechanics, version control represents a professional discipline: treating code as something valuable enough to track carefully, documenting the reasoning behind changes, and building software in ways that are understandable and maintainable by future developers.

The best time to start using version control was the first day you wrote code. The second best time is now.

Frequently Asked Questions

What is version control?

Version control is a system that records changes to files over time, allowing developers to track what changed, when, and by whom, and to revert to earlier versions if needed. It is the foundational practice of professional software development, enabling teams to collaborate on code without overwriting each other's work and providing a complete audit trail of every modification.

What is Git and how does it differ from other version control systems?

Git is a distributed version control system created by Linus Torvalds in 2005. Unlike centralized systems like SVN or CVS where all history lives on a central server, Git gives every developer a complete copy of the repository history on their own machine. This means developers can work offline, commit locally, and push changes to shared repositories when ready. Git's branching model is also significantly more flexible and lightweight than older systems.

What is a branch in version control?

A branch is an independent line of development within a repository. Creating a branch lets a developer make changes without affecting the main codebase, work on a feature or bug fix in isolation, and then merge those changes back when ready. Branching is cheap and fast in Git, making it practical to create a new branch for every feature or fix, which is now considered a standard professional practice.

What is the difference between GitFlow and trunk-based development?

GitFlow is a branching strategy using long-lived branches for features, releases, and hotfixes, providing structure for teams doing infrequent, batched releases. Trunk-based development has all developers commit to a single main branch (trunk) continuously, with features hidden behind feature flags, enabling continuous integration and deployment. Most modern high-performing teams have shifted toward trunk-based development as it reduces merge complexity.

Does version control apply to things other than code?

Yes. Version control principles apply to any text-based files that change over time, including configuration files, infrastructure-as-code definitions, documentation, data pipelines, and even written content. The practice of storing these in version-controlled repositories — sometimes called 'everything as code' — has become standard in DevOps and infrastructure management, extending the benefits of change tracking, rollback, and collaboration beyond software source code.