What Is the Architecture of the Mind?

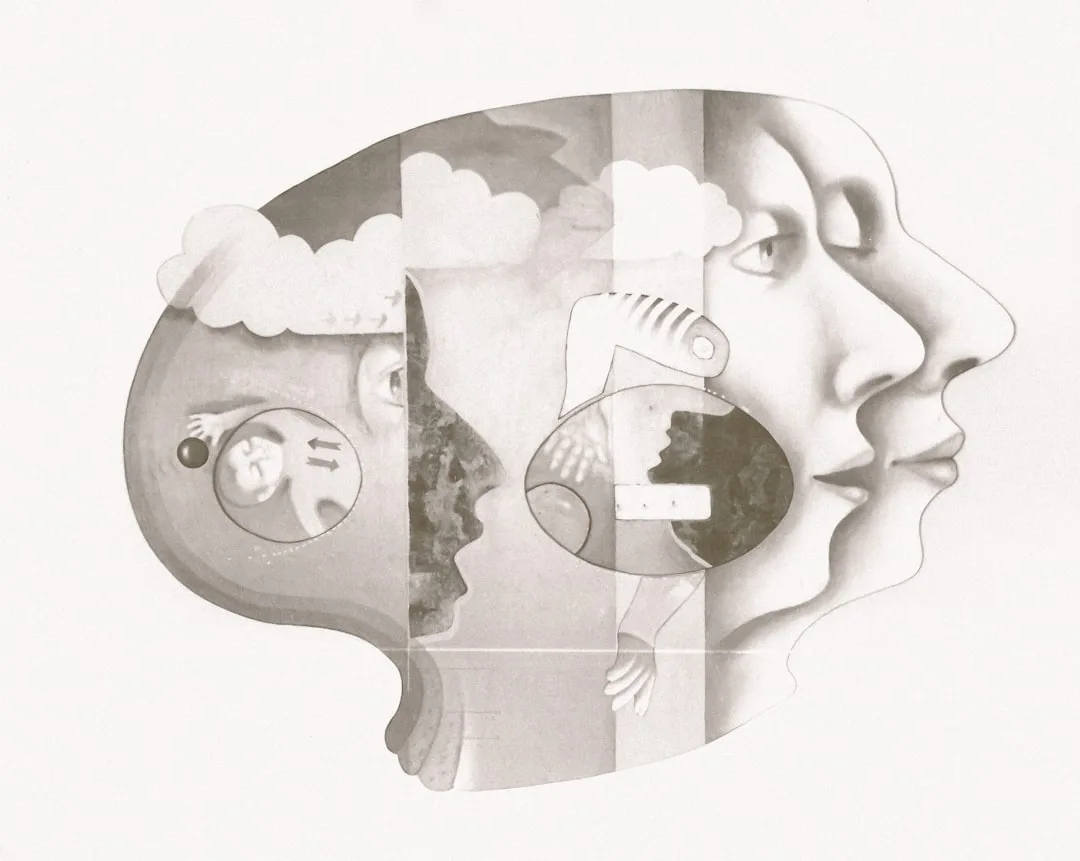

The mind is not a single general-purpose processor but a collection of specialized cognitive systems - including automatic and deliberate processing modes, working memory, long-term memory, attentional systems, and emotional circuits - that operate in parallel and interact in ways only partially accessible to conscious introspection. Contemporary cognitive science, drawing on decades of research in cognitive psychology, neuroscience, and behavioral economics, has replaced the simple "mind as computer" metaphor with a more accurate picture of a biological system shaped by evolution, subject to systematic constraints, and capable of both remarkable insight and predictable error.

Understanding this architecture is the foundation of applied cognitive science and explains why human behavior so often diverges from what deliberate reasoning alone would predict.

In 1956, George Miller published a paper in Psychological Review titled "The Magical Number Seven, Plus or Minus Two: Some Limits on Our Capacity for Processing Information." It was a review of experiments measuring how much information people could hold in immediate awareness - how many tones they could distinguish, how many numbers they could remember in sequence, how many objects they could identify simultaneously without counting. The consistent answer was approximately seven.

Miller's paper launched the information-processing framework that dominated cognitive psychology for the next fifty years: the mind as a computing system with definable capacities, processing stages, and architectural constraints. But the information-processing framework has since been substantially revised by decades of research in cognitive neuroscience, evolutionary psychology, and behavioral economics.

The revised picture is considerably more interesting than the computer metaphor suggests - and considerably more relevant to understanding why people think and behave as they do.

The mind is not a general-purpose calculator with specific memory limitations. It is a collection of specialized systems, evolved for different purposes, operating on different timescales, with different information inputs and outputs, coordinated in ways that are only partially accessible to conscious introspection. Understanding this architecture - how the systems interact, where they conflict, and what the architecture implies for behavior - is the foundation of applied cognitive science.

"The mind is not a single thing but a collection of systems, each with its own logic, each evolved for different purposes." — Steven Pinker, 1997

The Two-Speed Architecture

The most influential framework in contemporary cognitive psychology is dual-process theory: the distinction between two modes of cognitive processing that operate in parallel and interact in complex ways.

System 1 (also called the automatic system, the experiential system, or Type 1 processing) is fast, automatic, parallel, and largely unconscious. It runs continuously, processes multiple inputs simultaneously, draws on emotional associations and pattern recognition, and generates outputs - impressions, intuitions, behavioral impulses - that enter consciousness without the process that generated them being accessible to introspection.

| Cognitive System | Processing Style | Capacity | Typical Error |
|---|---|---|---|
| System 1 (Automatic) | Fast, unconscious, associative | Effectively unlimited | Generates biased intuitions; responds to irrelevant cues |
| System 2 (Deliberate) | Slow, effortful, logical | ~4 chunks in working memory | Lazy: often endorses System 1 output without checking |
| Working memory | Active, conscious | ~4 chunks | Overloaded by complex multi-step problems |
| Long-term memory | Reconstructive, associative | Very large | Distortion and false memories |
| Attentional spotlight | Selective, serial | One focal task at a time | Inattentional blindness; misses non-attended information |

System 1 includes:

- Perceptual processing (recognizing faces, words, objects)

- Emotional processing (immediate affective responses to stimuli)

- Learned automaticity (driving, typing, skilled performance)

- Pattern recognition based on accumulated experience

- Social inference (reading intentions, emotions, status from behavior and appearance)

- Heuristic judgment (rapid evaluation of options and situations)

System 2 (the deliberative system, the reflective system, or Type 2 processing) is slow, deliberate, sequential, and conscious. It requires attention and effort, processes information step by step, can override System 1 outputs when deployed with sufficient effort, but is limited in capacity and tires with extended use.

System 2 includes:

- Step-by-step logical reasoning

- Mathematical calculation beyond simple arithmetic

- Planning and complex decision-making

- Following complex instructions

- Monitoring social behavior for consistency with norms

- Learning new skills (before they become automatic)

The interaction between these systems is where much interesting cognitive behavior resides. System 1 generates an immediate response; System 2 may endorse it, modify it, or override it - but only if it is engaged. System 2 is lazy in a technical sense: it does the minimum necessary, endorsing System 1's outputs unless there is a specific reason to override.

*Example*: The bat-and-ball problem: "A bat and a ball together cost $1.10. The bat costs $1.00 more than the ball. How much does the ball cost?" System 1 immediately generates "10 cents." System 2, if engaged, identifies this as wrong (if the ball costs 10 cents and the bat costs $1.00 more, the bat costs $1.10, total $1.20, not $1.10).

The correct answer is 5 cents. In studies with college students, roughly 50% answer 10 cents - indicating that System 2 was not engaged to check the immediate output.
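The check that System 2 performs can be written out explicitly. The following is a minimal sketch, not part of the original study: it works in integer cents, and the function names are illustrative. It solves ball + (ball + difference) = total and then tests both the intuitive and the correct answer against the stated total.

```python
# Work in integer cents to avoid floating-point rounding.
TOTAL_CENTS = 110       # bat + ball
DIFFERENCE_CENTS = 100  # bat - ball


def solve_ball_price(total: int, difference: int) -> int:
    """Solve ball + (ball + difference) = total for the ball price."""
    return (total - difference) // 2


def is_consistent(ball: int, total: int, difference: int) -> bool:
    """Price the bat from the candidate ball price, then check the total."""
    bat = ball + difference
    return ball + bat == total


intuitive_answer = 10
correct_answer = solve_ball_price(TOTAL_CENTS, DIFFERENCE_CENTS)

print(correct_answer)                                                   # 5
print(is_consistent(intuitive_answer, TOTAL_CENTS, DIFFERENCE_CENTS))   # False
print(is_consistent(correct_answer, TOTAL_CENTS, DIFFERENCE_CENTS))     # True
```

The intuitive answer fails because it satisfies only one of the two statements in the puzzle: a 10-cent ball implies a $1.10 bat and a $1.20 total.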

Memory: Not a Storage System but a Reconstruction System

The popular conception of memory as a storage system - like a hard drive where experiences are saved and retrieved - is substantially incorrect. Research on memory, initiated by Frederic Bartlett's 1932 studies and developed through decades of experimental work, shows that memory is a reconstructive process: memories are rebuilt from fragments at retrieval, not played back from recordings.

The implications are significant for each of the memory systems described below.

Working Memory

Working memory is the system that holds information in active, conscious use for immediate processing. It corresponds roughly to what Miller measured: it holds approximately 4 items (later research by Nelson Cowan revised Miller's estimate downward from 7), with each item being a chunk - an organized unit of information that can be complex.

The limits of working memory are the limits of conscious thought. Complex reasoning, planning, and analysis must all be conducted within these constraints. This is why external representations (notes, diagrams, models, lists) dramatically extend cognitive performance: they offload the working memory burden onto the environment, allowing more complex processing than working memory alone supports.
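A toy illustration of chunking may make the constraint concrete. The sketch below is a deliberate simplification: it treats every chunk as one unit of load regardless of its content, uses the approximate four-chunk figure cited above, and the digit string and function names are hypothetical.

```python
WORKING_MEMORY_CHUNKS = 4  # approximate capacity cited in the text


def chunk(items: str, size: int) -> list[str]:
    """Group a sequence into consecutive chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def fits_in_working_memory(chunks: list[str],
                           capacity: int = WORKING_MEMORY_CHUNKS) -> bool:
    """Toy model: each chunk counts as one unit, whatever it contains."""
    return len(chunks) <= capacity


digits = "149217761945"               # hypothetical 12-digit string
as_single_digits = chunk(digits, 1)   # 12 separate items
as_years = chunk(digits, 4)           # 3 familiar-looking groups

print(fits_in_working_memory(as_single_digits))  # False
print(fits_in_working_memory(as_years))          # True
```

The same twelve digits exceed the limit as isolated items and fit comfortably once grouped, which is the sense in which a chunk "can be complex."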

Long-Term Memory

Long-term memory is organized not as a filing system but as a network of associations: memories are stored through their connections to other memories, to emotional states, to contexts, and to patterns. Retrieval is not lookup by location but reconstruction from associative cues. This is why context dramatically affects recall - the same information recalled in the context where it was learned is retrieved more accurately than when recalled out of context.

Long-term memory includes:

Episodic memory: Memory for personal experiences, with associated temporal and contextual information ("I remember when..."). Episodic memories are particularly susceptible to distortion and confabulation.

Semantic memory: Memory for general knowledge, facts, and concepts, without the personal episodic context. More stable than episodic memory and less susceptible to emotional distortion.

Procedural memory: Memory for how to do things - skills, routines, and automated procedures. Procedural memory is stored differently from declarative memory (episodic and semantic) and is more robust to brain damage in many conditions. A patient with severe amnesia for episodic and semantic information can still learn new motor skills.

Implicit memory: Memory that influences behavior without conscious access - priming effects, conditioned responses, learned associations. People who have been repeatedly exposed to a stimulus respond differently to it than naive subjects, even when they cannot consciously recall the prior exposure.

The Reconstructive Nature and False Memory

Elizabeth Loftus's decades of research on eyewitness memory demonstrated that memory reconstruction is highly malleable. In her landmark 1974 studies with John Palmer, subjects who watched videos of car accidents and were asked how fast the cars were going when they "smashed" versus "contacted" gave substantially different speed estimates - and were more likely to falsely remember broken glass (not present in the video) in the "smashed" condition.

The implications for law, policy, and everyday reliance on remembered information are profound. Eyewitness testimony is among the least reliable forms of evidence, yet it is among the most persuasive to juries. The certainty with which a memory is held is not a reliable indicator of its accuracy.

Attention: The Bottleneck

Attention is the process of selectively allocating cognitive resources to a subset of available information. It is the bottleneck between the vast information processing capacity of unconscious systems and the limited conscious working memory.

Selective Attention and Inattentional Blindness

The mind does not process all available information; it processes a selected subset, with unattended information largely failing to enter conscious awareness. Daniel Simons and Christopher Chabris's 1999 "gorilla experiment" demonstrated this dramatically: roughly half of the subjects asked to count basketball passes among players in white shirts failed to notice a person in a gorilla suit walking through the scene. The gorilla was plainly visible; it was not attended to.

Inattentional blindness - the failure to perceive clearly visible events due to attentional engagement elsewhere - has real-world consequences. Pilots who are focused on a system malfunction may miss clear visual indicators outside the cockpit; surgeons focused on a specific procedure may miss complications in adjacent areas; drivers engaged in conversation (even hands-free) are less able to perceive and respond to road hazards than drivers who are not.

Divided Attention and Multitasking

The popular concept of multitasking - performing multiple cognitive tasks simultaneously - is substantially a myth for tasks that require attention. What people call multitasking is rapid task-switching: quickly alternating between tasks rather than simultaneously performing them. Each switch incurs a task-switching cost: a period of performance degradation as the cognitive system reorients to the new task.

Research consistently shows that task-switching reduces performance on all tasks relative to sequential single-tasking.

*Example*: A 2005 study by Glenn Wilson for Hewlett-Packard reported that workers distracted by emails and phone calls showed temporary IQ drops of 10 points - more than twice the decrement from smoking marijuana. The distraction itself was not the problem; the attentional cost of interruption and reorientation was.
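The task-switching cost described above can be sketched as a simple additive model. This is an illustration under stated assumptions, not a model taken from the research: every switch is assumed to cost a fixed number of minutes, the work is assumed to be interleaved round-robin, and the durations and the 2-minute cost are hypothetical.

```python
def completion_time(task_minutes: list[float],
                    slices_per_task: int,
                    switch_cost: float) -> float:
    """Total time when each task is split into `slices_per_task` slices,
    worked round-robin, paying `switch_cost` minutes at every switch."""
    if slices_per_task < 1:
        raise ValueError("slices_per_task must be at least 1")
    if len(task_minutes) <= 1:
        n_switches = 0  # a single task never requires reorientation
    else:
        n_switches = len(task_minutes) * slices_per_task - 1
    return sum(task_minutes) + n_switches * switch_cost


tasks = [30.0, 30.0]                           # two hypothetical 30-minute tasks
sequential = completion_time(tasks, 1, 2.0)    # one switch between the tasks
interleaved = completion_time(tasks, 6, 2.0)   # eleven switches

print(sequential)   # 62.0
print(interleaved)  # 82.0
```

The amount of productive work is identical in both cases; the difference is entirely reorientation overhead, which grows with the number of switches.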

Emotion's Role in Cognition

One of the most important revisions to the information-processing framework has been the recognition that emotion is not separate from cognition but integral to it. Antonio Damasio's work with patients who had suffered damage to the ventromedial prefrontal cortex - which links emotional processing to decision-making - showed that emotionless reasoning is ineffective reasoning.

Patients who could analyze situations perfectly but who lacked emotional responses were unable to make effective real-world decisions.

Emotions serve specific cognitive functions:

Value marking: Emotional responses rapidly mark stimuli and options as good or bad, worth approaching or avoiding, important or unimportant. Without this marking, all options have equal cognitive salience - making even simple choices computationally intractable.

Attention direction: Strong emotional responses (fear, curiosity, disgust) direct attention toward the emotional stimulus, prioritizing it for processing. This attentional effect is automatic and fast, operating before conscious evaluation.

Memory consolidation: Emotionally significant events are remembered more robustly than emotionally neutral ones. The amygdala, the brain region central to emotional processing, enhances memory consolidation for emotional events - ensuring that significant experiences are retained.

Motivational activation: Emotions produce the motivational states that drive behavior. Without the drive produced by desire, fear, interest, or discomfort, behavior loses direction.

The practical implication: emotional states are not noise to be filtered from reasoning; they are essential inputs. But they need to be interpreted, not just felt, and the relationship between emotional signals and rational analysis requires careful management.

Metacognition: Thinking About Thinking

Metacognition is the capacity to monitor and control one's own cognitive processes - to think about thinking. It includes awareness of what you know and do not know, the ability to evaluate the quality of your own reasoning, the capacity to detect errors, and the ability to select and deploy cognitive strategies appropriate to the task.

Metacognitive accuracy - how well your confidence in your judgments correlates with their actual accuracy - is highly variable across domains, individuals, and cognitive states. Dunning and Kruger's research showed that metacognitive ability is domain-specific: people who are incompetent in a domain typically lack the metacognitive skill to recognize their incompetence, because the competence required to perform well and the competence required to evaluate performance are related.

This produces the paradox that the least competent performers are often the most confident.
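One common way to make "metacognitive accuracy" concrete is to correlate trial-by-trial confidence with correctness. The sketch below assumes that simple operationalization, which is only one of several measures used in the literature, and the confidence ratings and outcomes are invented for illustration.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation; raises if either series has zero variance."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


def overconfidence(confidence: list[float], correct: list[float]) -> float:
    """Mean confidence minus the proportion of correct answers."""
    return sum(confidence) / len(confidence) - sum(correct) / len(correct)


# Hypothetical data: confidence (0-1) and correctness (1 = right, 0 = wrong).
conf_a, correct_a = [0.9, 0.8, 0.3, 0.2, 0.9, 0.3], [1, 1, 0, 0, 1, 0]
conf_b, correct_b = [0.9, 0.9, 0.9, 0.8, 0.9, 0.9], [1, 0, 0, 1, 0, 0]

r_a, r_b = pearson(conf_a, correct_a), pearson(conf_b, correct_b)
gap_a, gap_b = overconfidence(conf_a, correct_a), overconfidence(conf_b, correct_b)

print(f"A: correlation={r_a:.2f}, overconfidence={gap_a:.2f}")
print(f"B: correlation={r_b:.2f}, overconfidence={gap_b:.2f}")
```

Person A's confidence tracks accuracy closely, while person B is uniformly confident and frequently wrong, so B's confidence carries almost no information about correctness.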

High metacognitive ability - accurate self-assessment of knowledge, clear awareness of the limits of one's understanding, reliable detection of reasoning errors - is a characteristic of expert reasoners and is distinct from raw cognitive ability. It can be developed through deliberate practice, particularly through experience in domains where feedback is rapid and accurate enough to calibrate self-assessment.

The Limits of Introspection

A critical feature of the mind's architecture is that conscious introspection provides limited and often inaccurate access to the processes that generate behavior. People report reasons for their choices, describe their decision-making processes, and explain their attitudes and behavior - and these reports are often confabulation: plausible explanations constructed after the fact to account for behavior that was actually driven by processes the person has no conscious access to.

Richard Nisbett and Timothy Wilson's landmark 1977 paper "Telling More Than We Can Know" documented this systematically: people's verbal reports about the mental processes underlying their choices often do not match the actual processes responsible for those choices, as revealed by experimental manipulation. Subjects reported reasons for preferring one option over another that had no actual causal role in their choice; the actual cause (subtle experimental manipulation of presentation) was not mentioned.

This has direct implications for how much weight to place on self-report in understanding behavior, and for how much to trust one's own explanations for one's choices and reactions. The mind generates behavior through processes that are largely unconscious; it then generates explanations that feel accurate but often reflect narrative construction rather than causal reporting.

Understanding this architecture - the automatic and deliberative systems, the reconstructive memory, the attentional bottleneck, the essential role of emotion, the limits of introspective access - is not merely theoretically interesting. It is practically necessary for understanding why people behave inconsistently with their intentions, why intelligent people make predictable errors, and what interventions can improve decision-making by working with cognitive architecture rather than against it.

Neuroscience Findings That Revised the Cognitive Model

Advances in brain imaging and cognitive neuroscience since the 1990s have substantially revised the information-processing model of the mind, confirming some elements of the computational metaphor while challenging others.

Functional MRI research by John-Dylan Haynes at the Max Planck Institute for Human Cognitive and Brain Sciences produced a striking 2008 finding: the neural correlates of a decision to press a button with the left or right hand could be detected in prefrontal and parietal cortex up to 10 seconds before the participant reported consciously choosing which hand to use. The finding, published in Nature Neuroscience, suggested that conscious awareness of a decision follows its neural initiation rather than preceding it.

The research has been interpreted as evidence that conscious intention may be a post-hoc narrative about processes that have already been initiated by System 1 - consistent with Nisbett and Wilson's 1977 finding that verbal reports of decision processes often do not match the actual causal mechanisms.

Antonio Damasio's somatic marker research with patients who had suffered ventromedial prefrontal cortex damage generated direct evidence for emotion's role in cognition. In the Iowa Gambling Task, developed by Damasio and Antoine Bechara in 1994, participants chose cards from four decks - two "bad" decks that led to net losses over time, two "good" decks that led to net gains.

Normal participants developed a skin conductance response (a physiological anxiety marker) when reaching toward the bad decks before they could consciously articulate why those decks were disadvantageous. Patients with vmPFC damage showed no such anticipatory anxiety response and continued choosing from the bad decks even after consciously recognizing their disadvantage.

The finding demonstrated that emotional systems provide anticipatory signals that guide decision-making below the threshold of conscious reasoning - and that damage to these systems produces characteristic decision-making failures despite preserved analytical intelligence.
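The structure of the four decks can be illustrated with a short calculation. The dollar figures below are assumptions: they follow the payoff schedule commonly described for the task (larger per-card rewards on the bad decks, offset by larger penalties) and are not stated in the text above.

```python
# Assumed payoff schedule per block of 10 cards (not given in the text above).
DECKS = {
    "A": {"reward_per_card": 100, "losses_per_10_cards": 1250},  # bad
    "B": {"reward_per_card": 100, "losses_per_10_cards": 1250},  # bad
    "C": {"reward_per_card": 50, "losses_per_10_cards": 250},    # good
    "D": {"reward_per_card": 50, "losses_per_10_cards": 250},    # good
}


def net_per_10_cards(deck: str) -> int:
    """Net outcome after drawing 10 cards from a single deck."""
    schedule = DECKS[deck]
    return 10 * schedule["reward_per_card"] - schedule["losses_per_10_cards"]


for name in DECKS:
    print(name, net_per_10_cards(name))
```

Under this schedule the decks with the most attractive individual cards are the ones that lose money over time, which is why a rule learned only from immediate rewards steers toward the bad decks.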

Working memory neuroscience refined Miller's "magical number seven" estimate significantly. Nelson Cowan at the University of Missouri synthesized evidence from multiple paradigms in a 2001 review and concluded that true working memory capacity - the number of discrete chunks held simultaneously in the focus of attention - is approximately four, not seven. Miller's estimate conflated working memory with the additional contributions of rehearsal strategies (subvocal repetition extends the apparent capacity of auditory memory) and chunking (organizing information into meaningful units).

The distinction matters practically: four chunks represents a severe constraint on complex reasoning, and it explains why cognitive offloading to external representations (notes, diagrams, structured frameworks) dramatically improves the quality of analytical thinking.

Metacognition research by Stephen Fleming at University College London used neuroimaging to identify the neural basis of metacognitive accuracy. Fleming's 2010 study in Science found that the volume of gray matter in the anterior prefrontal cortex predicted individual differences in metacognitive accuracy - how well participants' confidence in their perceptual judgments matched their actual accuracy.

The structural finding suggests that metacognitive ability has a neurological substrate and varies across individuals in ways that are somewhat independent of general cognitive ability. People with the same perceptual accuracy can differ substantially in how accurately they monitor that accuracy, with real consequences for how they update beliefs, seek additional information, and assess when they need help.

Memory Distortion: Research With Practical Consequences

Elizabeth Loftus's research on memory malleability has been extended into domains with significant social and legal implications, producing findings that have changed practices in criminal justice, clinical psychology, and organizational management.

The lost-in-the-mall study by Loftus and Jacqueline Pickrell (1995) demonstrated that entirely false memories could be implanted through suggestion. Participants were given descriptions of three true childhood events (verified with family members) and one false event (being lost in a shopping mall as a child). After several interview sessions with suggestive questioning, 25% of participants recalled the false event in some detail, with some generating elaborate descriptions of the experience.

The finding has been replicated with a variety of false memories, including memories of committing crimes. A 2015 study by Julia Shaw and Stephen Porter at the University of British Columbia found that 70% of participants could be led to believe they had committed a crime (assault, theft, or assault with a weapon) as a teenager through three structured interview sessions using suggestive questioning.

The legal implications of memory research have been systematically quantified. The Innocence Project, founded by Barry Scheck and Peter Neufeld in 1992 at Cardozo School of Law, has used DNA evidence to exonerate over 375 wrongfully convicted individuals in the United States. Analysis of these cases found that mistaken eyewitness identification contributed to approximately 69% of wrongful convictions - making it the single largest contributor to wrongful conviction.

The National Academy of Sciences commissioned a comprehensive review in 2014, led by Thomas Albright at the Salk Institute, which concluded that eyewitness memory, properly understood, is fundamentally reconstructive and unreliable as a primary source of evidence. The review recommended procedural reforms including blind administration of lineups and mandatory recording of eyewitness confidence immediately after identification.

Organizational memory has been studied as a parallel phenomenon. Karl Weick at the University of Michigan documented how organizations reconstruct memories of decisions and events in ways that fit current narratives rather than accurately preserving historical records. His case study of the Mann Gulch fire disaster (1949) showed how survivors' memories of the sequence of events - what orders were given, who moved where, when the fire overtook them - diverged substantially from contemporaneous records.

Weick argued that organizational "sensemaking" - the ongoing process of constructing shared narratives about what has happened and why - systematically rewrites organizational memory in ways that protect current assumptions and authority structures, impeding genuine learning from failure.

Quantified Research on Working Memory, Attention, and Cognitive Limits

Decades of experimental work have translated the theoretical architecture of the mind into measurable limits, with direct implications for how people design work environments, educational curricula, and decision processes.

Nelson Cowan's 2001 review at the University of Missouri, published in Behavioral and Brain Sciences, synthesized evidence from multiple experimental paradigms to conclude that the true capacity of working memory - the items held simultaneously in the focus of attention - is approximately four chunks, not the seven Miller proposed in 1956. The distinction matters practically.

Miller's estimate included contributions from subvocal rehearsal (silently repeating information extends auditory memory) and chunking (grouping items into meaningful units). When these strategies are controlled for, the residual capacity is closer to four. Cowan's estimate has been replicated in studies of visual change detection, verbal recall, and spatial memory across cultures, suggesting it reflects an architectural constraint rather than a learned limit.

The practical consequence: complex reasoning that requires more than four independent considerations to be held simultaneously degrades unless external representations (notes, diagrams, structured frameworks) are used to offload the excess.

John Sweller's cognitive load theory, developed at the University of New South Wales beginning in 1988, built directly on working memory constraints to explain why some learning approaches work and others fail. Sweller distinguished three types of cognitive load: intrinsic (complexity inherent in the material), extraneous (complexity imposed by poor presentation or task design), and germane (cognitive effort devoted to schema formation and learning).

His central finding, supported across more than 200 empirical studies in educational psychology, was that extraneous cognitive load - introduced by cluttered instructional materials, poorly sequenced information, or unnecessary simultaneous demands on working memory - directly reduces learning by consuming working memory resources needed for germane processing. Applied to education: worked examples outperform problem-solving for novices because they reduce extraneous load, freeing working memory for schema formation.

Applied to product design and communication: the same principle explains why simplified interfaces, progressive disclosure, and clear sequencing improve both comprehension and decision quality in ways that feel like common sense but have precise cognitive explanations.

David Strayer and Frank Drews at the University of Utah published a series of studies between 2001 and 2006 establishing that hands-free mobile phone use while driving produced impairment comparable to blood alcohol concentrations of 0.08% - the legal intoxication limit in most US states. Using driving simulators, they measured reaction times, following distances, and accident rates in participants who were sober and undistracted, sober and using a hands-free phone, or intoxicated at 0.08% BAC.

The hands-free phone condition showed worse performance than the intoxicated condition on several measures. The mechanism was attentional: phone conversations require maintaining an active mental representation of an absent person and an unfolding narrative, consuming working memory and attentional resources that would otherwise support driving. The study directly contradicted the intuitive belief that hands-free devices were substantially safer than handheld ones, and influenced subsequent traffic safety regulations in multiple countries.

The relationship between emotional arousal and cognitive performance was quantified by Sonia Lupien at McGill University and colleagues across multiple studies of cortisol (the primary stress hormone) and working memory. Lupien's research found an inverted-U relationship: moderate cortisol levels enhanced memory consolidation and attention, while high cortisol levels - associated with sustained stress - impaired working memory performance, reduced hippocampal neurogenesis, and interfered with the prefrontal cortex function underlying deliberate System 2 reasoning.

In a 2009 study of 4,000 participants, Lupien found that individuals with a history of early-life stress showed measurably reduced working memory capacity in adulthood, with effect sizes comparable to several years of aging. The finding extended cognitive architecture research into organizational design: chronic workplace stress does not merely reduce subjective wellbeing; it directly reduces the cognitive capacity for the complex reasoning that knowledge work requires.

Inattentional blindness has also been quantified in professional settings, beyond the original gorilla experiment. A 2013 study by Trafton Drew, Melissa Võ, and Jeremy Wolfe found that approximately 83% of radiologists failed to notice a small image of a gorilla superimposed on a lung CT scan when they were searching for lung nodules - even though the gorilla was 48 times larger than the average nodule and directly in the scan area.

The radiologists who missed it were not distracted; they were intensely focused on their primary task. The finding illustrates the depth of attentional tunneling in expert professional performance: expert focus, while improving performance within the attended domain, can blind experts to unexpected but consequential information outside that domain. The practical implication for medical, aviation, and industrial safety is that expert attention itself is a source of systematic missed signals, not a guarantee of comprehensive awareness.

The Science of Memory Distortion in Consequential Settings

Research on memory distortion has expanded from laboratory demonstrations into institutional settings where its consequences are measurable in wrongful convictions, organizational failures, and clinical errors.

Elizabeth Loftus at the University of California, Irvine has continued her foundational work on memory malleability across four decades. Her 1995 lost-in-the-mall study with Jacqueline Pickrell demonstrated that entirely fabricated childhood memories could be implanted through suggestive interviewing: 25% of participants came to "remember" in detail an event that never occurred.

A 2015 replication and extension by Julia Shaw and Stephen Porter at the University of British Columbia used three structured interview sessions to lead 70% of participants to develop detailed false memories of having committed crimes (theft, assault, or assault with a weapon) as teenagers. Participants who had committed no such crimes produced rich autobiographical narratives with sensory detail, emotional content, and moral self-assessment - exactly the characteristics that jurors and clinicians use to judge memory credibility.

The study has profound implications for criminal justice systems that continue to rely on confident, detailed witness accounts as primary evidence.

The Innocence Project, founded in 1992 by attorneys Barry Scheck and Peter Neufeld at Cardozo School of Law, has used post-conviction DNA testing to exonerate more than 375 wrongfully convicted individuals as of 2024. The Project's systematic case analysis found that eyewitness misidentification contributed to approximately 69% of these wrongful convictions - making it the single largest contributor.

Misidentifications were not correlated with lower witness confidence: many misidentifying witnesses reported high certainty. A 2020 analysis published in Psychological Science in the Public Interest by Gary Wells, Elizabeth Loftus, and Brandon Garrett synthesized 100 years of eyewitness research and documented that standard police lineup procedures as of 2000 contained structural features - non-blind administration, inadequate witness instructions, simultaneous rather than sequential presentation - that reliably inflated misidentification rates.

Police departments that implemented evidence-based reforms (blind administration, sequential lineups, immediate confidence documentation) reduced misidentification rates in field studies by approximately 40% without reducing accurate identification rates, demonstrating that the procedural reforms were not merely cautious but were genuinely improving accuracy.

Organizational memory distortion was studied systematically by Kathleen Eisenhardt at Stanford and Mark Zbaracki at the University of Western Ontario across studies of strategic decision-making in high-technology firms. Their research documented that organizations routinely misremember the causal logic of past decisions - attributing successes to deliberate strategy that was actually improvised, and attributing failures to external factors that internal analysis had identified as controllable.

This "retrospective rationalization" was not motivated dishonesty but was consistent with Loftus's laboratory findings: memories are reconstructed to fit current needs and frameworks, and current frameworks are shaped by outcomes that were not known at the time of the original decision. The practical consequence is that organizational learning from experience is systematically distorted: companies mislearn from their successes (attributing them to the wrong causes) and from their failures (exculpating the decision processes that contributed to them).

Post-mortems conducted immediately after project completion, before the outcome's meaning has been absorbed and retrofitted to memory, produce significantly more accurate and actionable retrospective analysis than those conducted months or years later.

Sleep deprivation's effects on memory and decision quality have been measured with precision by Matthew Walker at the University of California, Berkeley, and David Dinges at the University of Pennsylvania. Walker's 2017 synthesis of imaging studies and behavioral experiments documented that a single night of total sleep deprivation reduced working memory capacity by approximately 40%, impaired prefrontal cortex function (the region most responsible for System 2 deliberate reasoning), and increased amygdala reactivity by 60%. Sleep-deprived individuals were thus significantly more reactive to emotional stimuli and less able to regulate emotional responses through deliberate cognition.

Dinges's research on partial sleep deprivation found that sleeping six hours per night for two weeks - a pattern common among knowledge workers - produced cognitive impairment equivalent to 24 hours of total sleep deprivation, while participants reported only mild sleepiness. The subjective experience of adequate functioning diverged systematically from objective performance impairment: people working on six hours of sleep felt nearly fine and were performing substantially below their rested capacity.

The finding directly undermines the assumption that individuals are reliable judges of their own cognitive impairment, and has influenced recommendations from the American Academy of Sleep Medicine and the CDC on workplace scheduling and safety-sensitive job requirements.

References

- Kahneman, D. Thinking, Fast and Slow. Farrar, Straus and Giroux, 2011. https://us.macmillan.com/books/9780374533557/thinkingfastandslow

- Miller, G.A. "The Magical Number Seven, Plus or Minus Two." Psychological Review, 63(2), 81-97, 1956. https://doi.org/10.1037/h0043158

- Cowan, N. "The Magical Number 4 in Short-Term Memory." Behavioral and Brain Sciences, 24(1), 87-114, 2001. https://doi.org/10.1017/s0140525x01003922

- Damasio, A. Descartes' Error: Emotion, Reason, and the Human Brain. Putnam, 1994. https://www.penguinrandomhouse.com/books/288104/descartes-error-by-antonio-damasio/

- Simons, D. & Chabris, C. "Gorillas in Our Midst: Sustained Inattentional Blindness for Dynamic Events." Perception, 28(9), 1059-1074, 1999. https://doi.org/10.1068/p281059

- Loftus, E. & Palmer, J. "Reconstruction of Automobile Destruction." Journal of Verbal Learning and Verbal Behavior, 13(5), 585-589, 1974. https://doi.org/10.1016/S0022-5371(74)80011-3

- Nisbett, R. & Wilson, T. "Telling More Than We Can Know: Verbal Reports on Mental Processes." Psychological Review, 84(3), 231-259, 1977. https://doi.org/10.1037/0033-295X.84.3.231

- Bartlett, F.C. Remembering: A Study in Experimental and Social Psychology. Cambridge University Press, 1932. https://www.cambridge.org/core/books/remembering/0AEDBB4D2CDBE6D0FBE024975FA0C640

- Evans, J. & Stanovich, K. "Dual-Process Theories of Higher Cognition: Advancing the Debate." Perspectives on Psychological Science, 8(3), 223-241, 2013. https://doi.org/10.1177/1745691612460685

- Kruger, J. & Dunning, D. "Unskilled and Unaware of It." Journal of Personality and Social Psychology, 77(6), 1121-1134, 1999. https://doi.org/10.1037/0022-3514.77.6.1121

Frequently Asked Questions

How does the mind process information?

Through perception, attention filtering, working memory processing, pattern matching, and storage in long-term memory networks.

Is the mind rational?

Partially. The mind relies on shortcuts (heuristics) that usually work well but produce systematic biases in certain situations.

What is System 1 vs System 2 thinking?

System 1 is fast, automatic, intuitive; System 2 is slow, deliberate, analytical. Most thinking is System 1.

Why is attention limited?

Conscious awareness can process only a tiny fraction of sensory input, so attention must filter the rest to keep the system from being overwhelmed.

How does the mind form beliefs?

Through pattern recognition, social learning, emotional associations, and confirmation of existing frameworks - not purely rational evidence evaluation.

Can you change how your mind works?

You can train attention, build better mental models, and develop skills, but the fundamental cognitive architecture has limits.

Why does the mind take shortcuts?

Heuristics evolved because speed and efficiency often beat perfect accuracy in real-world survival and decision situations.

What role do emotions play in thinking?

Emotions provide rapid value assessments, motivation, and priority setting, and they often encode important information that deliberate analysis misses.